Knowledge Hub

Insights & Research

Deep dives into AI architecture, production ML systems, and engineering best practices.

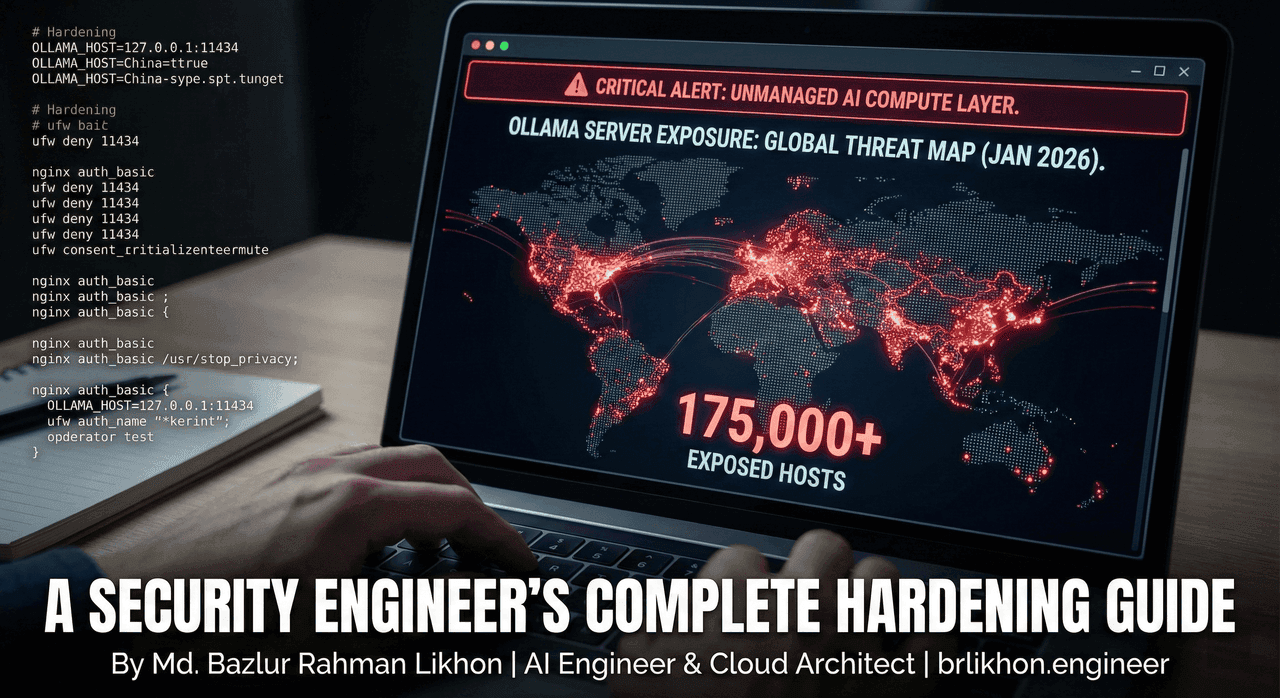

175,000 Ollama Servers Exposed: A Security Engineer's Complete Hardening Guide

175,000 Ollama servers are publicly exposed, enabling LLMjacking, data theft, and remote code execution at scale. This hands-on security guide explains how attackers exploit misconfigured Ollama deployments and provides a step-by-step, production-tested hardening checklist—firewalls, reverse proxies, Docker isolation, monitoring, and audits—used in real Fortune 500 AI environments.

Saudi Vision 2030 AI Implementation: The Complete Enterprise Guide

Saudi Vision 2030 is accelerating AI adoption at an unprecedented scale—but most enterprises struggle to move from strategy to production. This 2026 enterprise guide breaks down SDAIA regulations, proven Saudi AI case studies (Aramco, NEOM, STC), Arabic-first AI requirements, and a step-by-step implementation roadmap to build Vision 2030-aligned, compliant, ROI-driven AI systems.

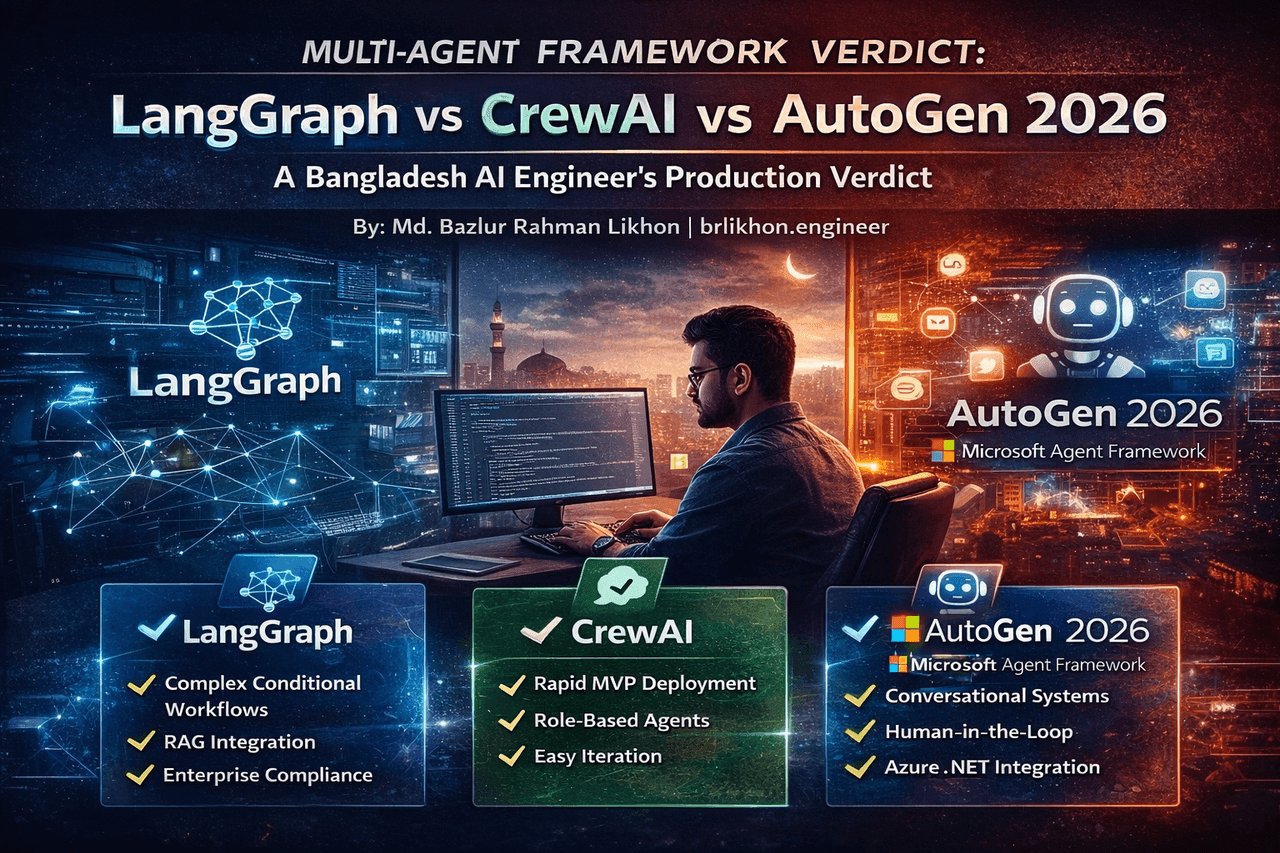

LangGraph vs CrewAI vs AutoGen 2026: A Bangladesh AI Engineer's Production Verdict

A production-tested comparison of LangGraph, CrewAI, and Microsoft Agent Framework (AutoGen) based on 18+ months of real enterprise deployments. This guide breaks down which multi-agent framework actually survives scale, compliance, and latency in 2026—and how to choose the right one for startups vs regulated enterprises.

Context Engineering: The New $300K/Year Career Path That's Replacing Prompt Engineering in 2026

Context engineering is replacing prompt engineering as the highest-leverage skill in AI systems—and salaries are exploding past $300K. This deep-dive explains what context engineering really is, why MCP, RAG, memory, and orchestration are now mandatory for production AI, and how developers and companies can capitalize on the biggest AI career shift of 2026.

Building AI Agent Networks in 2026: What Moltbook's 1.5M Agents Teach Us About Production Architecture

This deep-dive dissects Moltbook’s explosive growth and what it reveals about building production-grade AI agent networks in 2026. Drawing from real-world experiments and enterprise deployments, the article breaks down the heartbeat architecture, agent-to-agent coordination patterns, security failures, and scalability lessons that every serious AI team must understand before shipping multi-agent systems at scale.

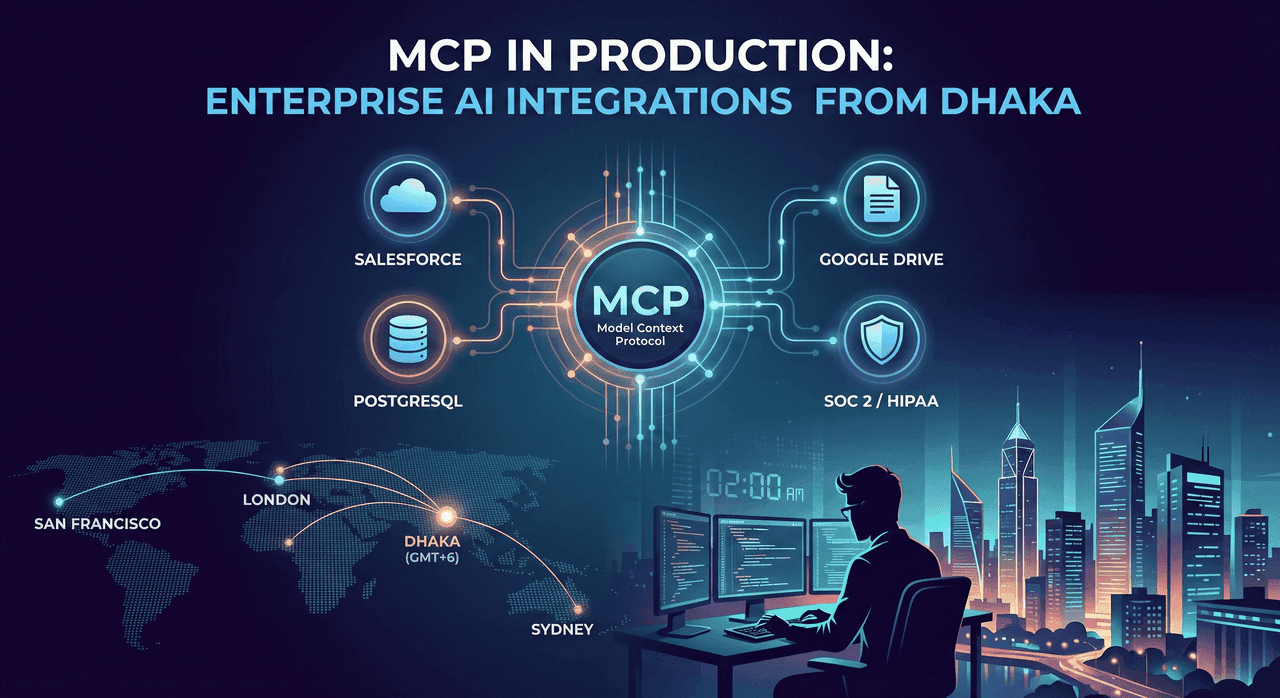

MCP in Production: How I Built 10 Enterprise Integrations from Bangladesh for US Clients

How I built 10 production MCP integrations for US enterprise clients from Bangladesh. Real code, costs, and lessons from $2M+ in deployed AI systems.

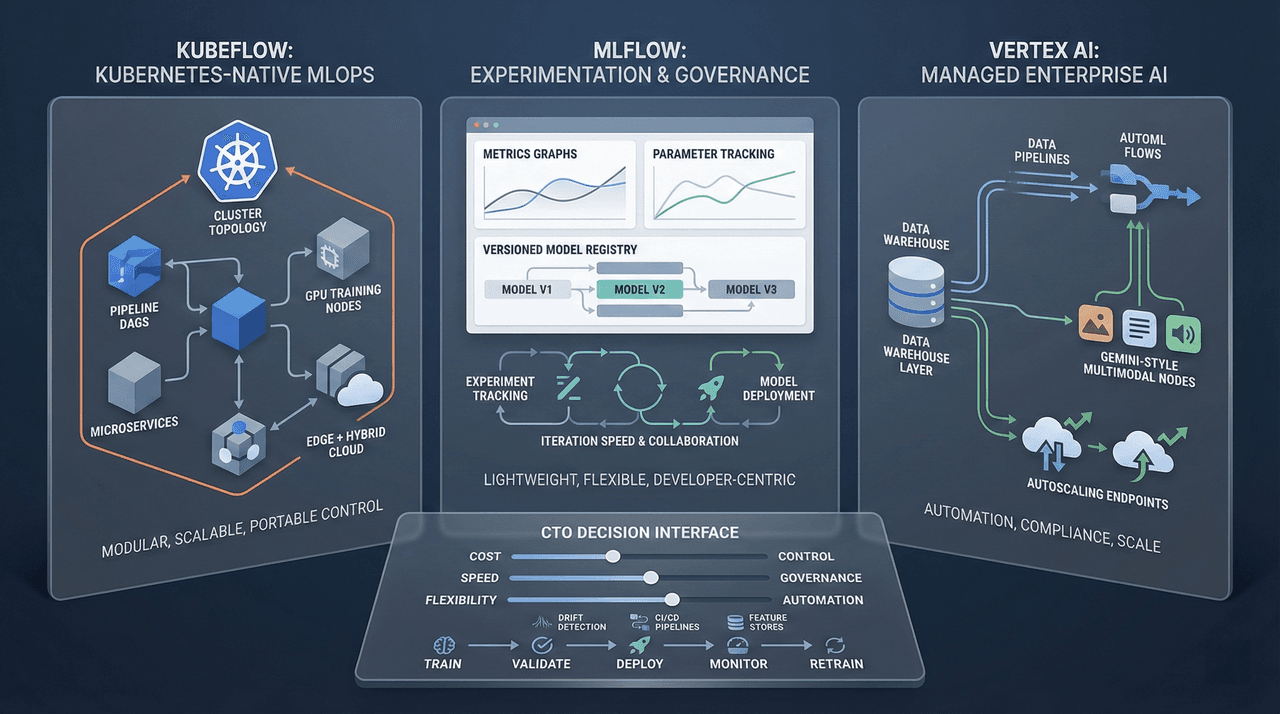

Kubeflow vs MLflow vs Vertex AI: The 2026 MLOps Platform Battle

A deep, production-grade comparison of Kubeflow, MLflow, and Google Vertex AI for enterprise MLOps in 2026. This guide analyzes architecture, pricing, scalability, governance, GenAI readiness, and real-world performance—helping CTOs and ML leaders select the right platform based on team maturity, regulatory constraints, and long-term total cost of ownership.

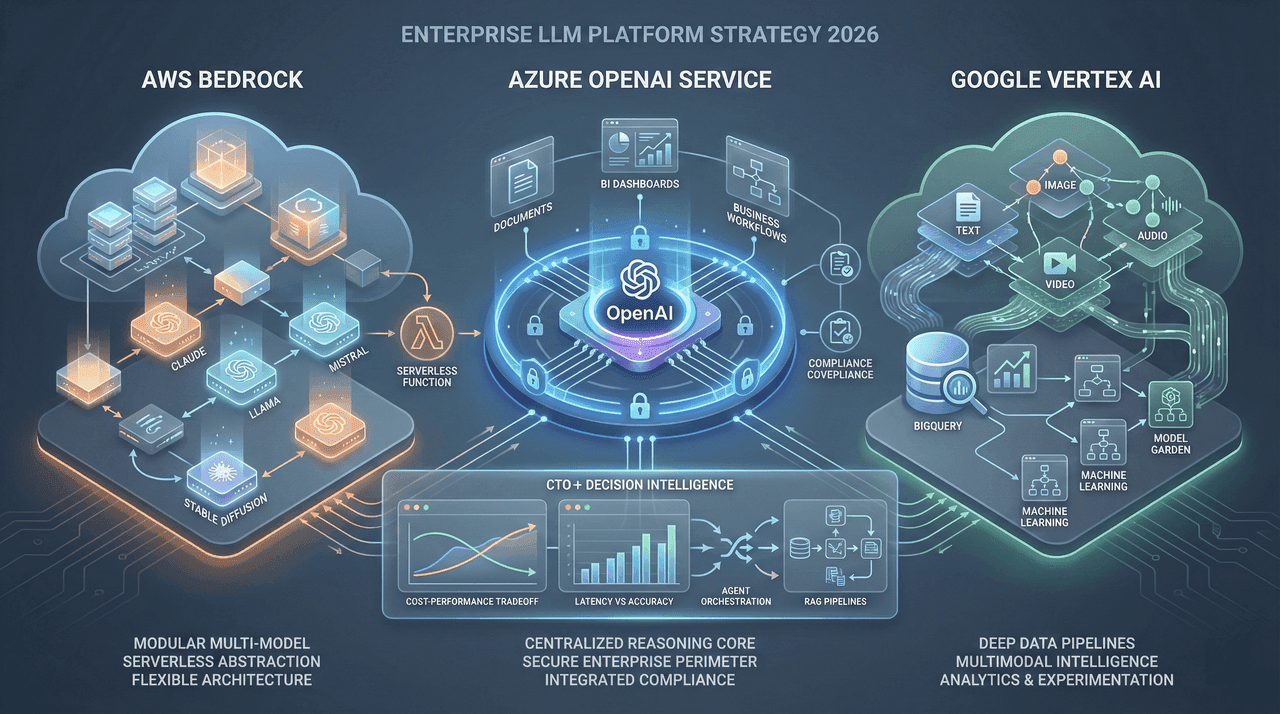

AWS Bedrock vs Azure OpenAI vs Vertex AI: Managed LLM Platforms 2026

A comprehensive, enterprise-grade comparison of AWS Bedrock, Azure OpenAI Service, and Google Vertex AI for 2026. This guide breaks down real-world pricing, performance, model availability, compliance, multi-agent support, and total cost of ownership—providing a clear decision framework to help CTOs and AI leaders choose the right managed LLM platform for production workloads.

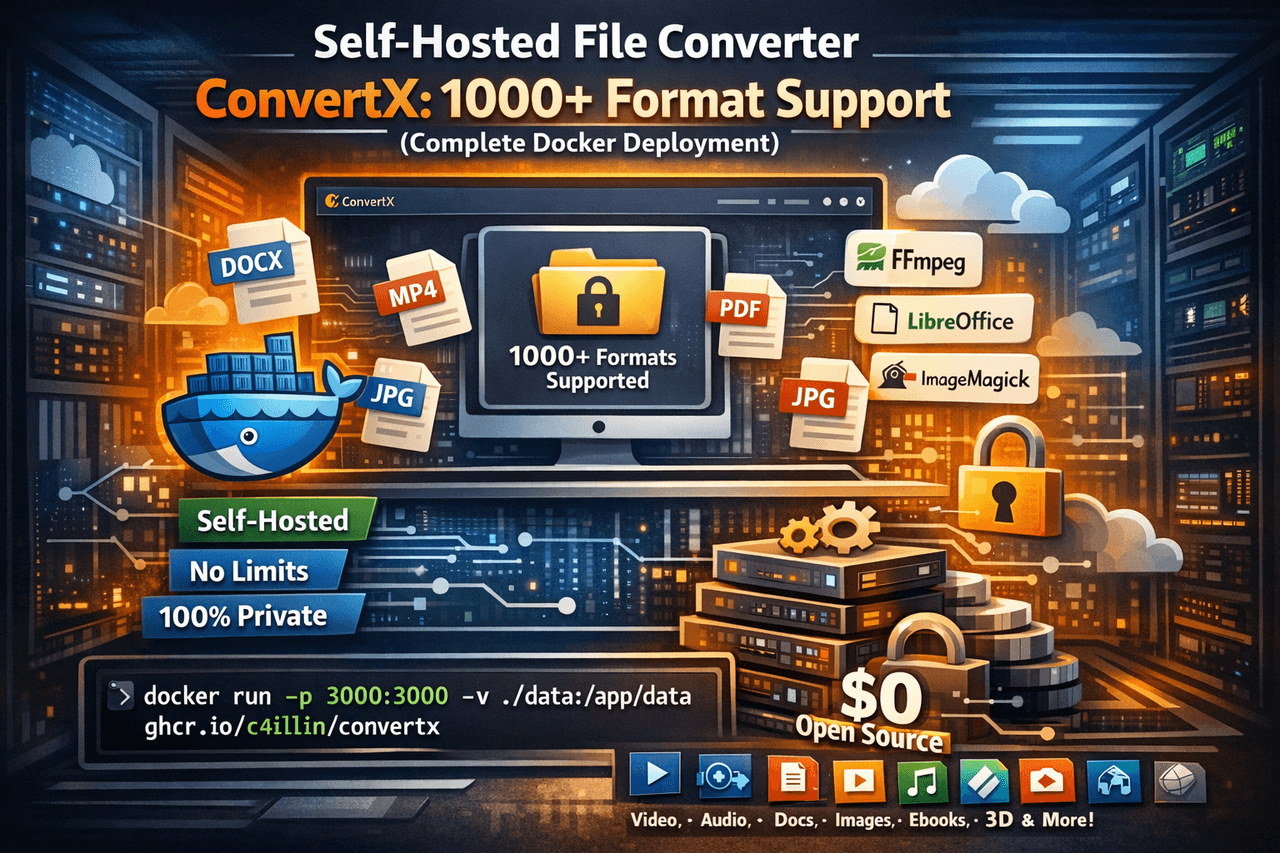

Self-Hosted File Converter: ConvertX's 1000+ Format Support (Complete Docker Deployment)

ConvertX is a self-hosted, open-source file conversion platform that replaces online converters with a single Docker-based service. Supporting 1000+ formats across documents, media, images, e-books, vector graphics, and 3D models, it delivers unlimited conversions, zero file size limits, and complete data privacy. This guide covers architecture, Docker deployment, security hardening, performance tuning, and real-world production use cases—everything you need to run your own private conversion hub.

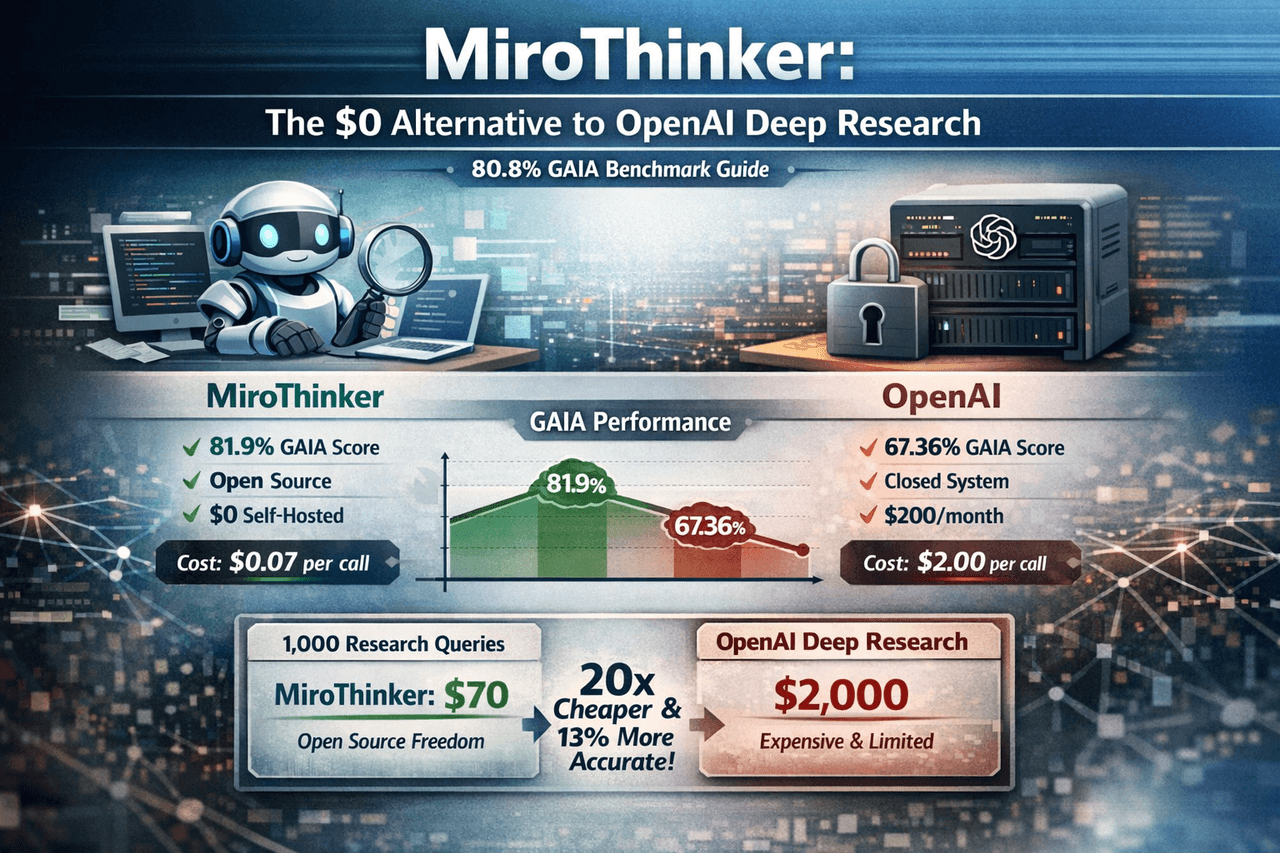

MiroThinker: The $0 Alternative to OpenAI Deep Research (80.8% GAIA Benchmark Guide)

MiroThinker is an open-source research agent that outperforms OpenAI Deep Research on the GAIA benchmark while costing up to 96% less. This in-depth guide breaks down its architecture, benchmarks, deployment options, and real-world cost economics—showing how to replace $200/month proprietary research tools with a transparent, self-hosted system you fully control.

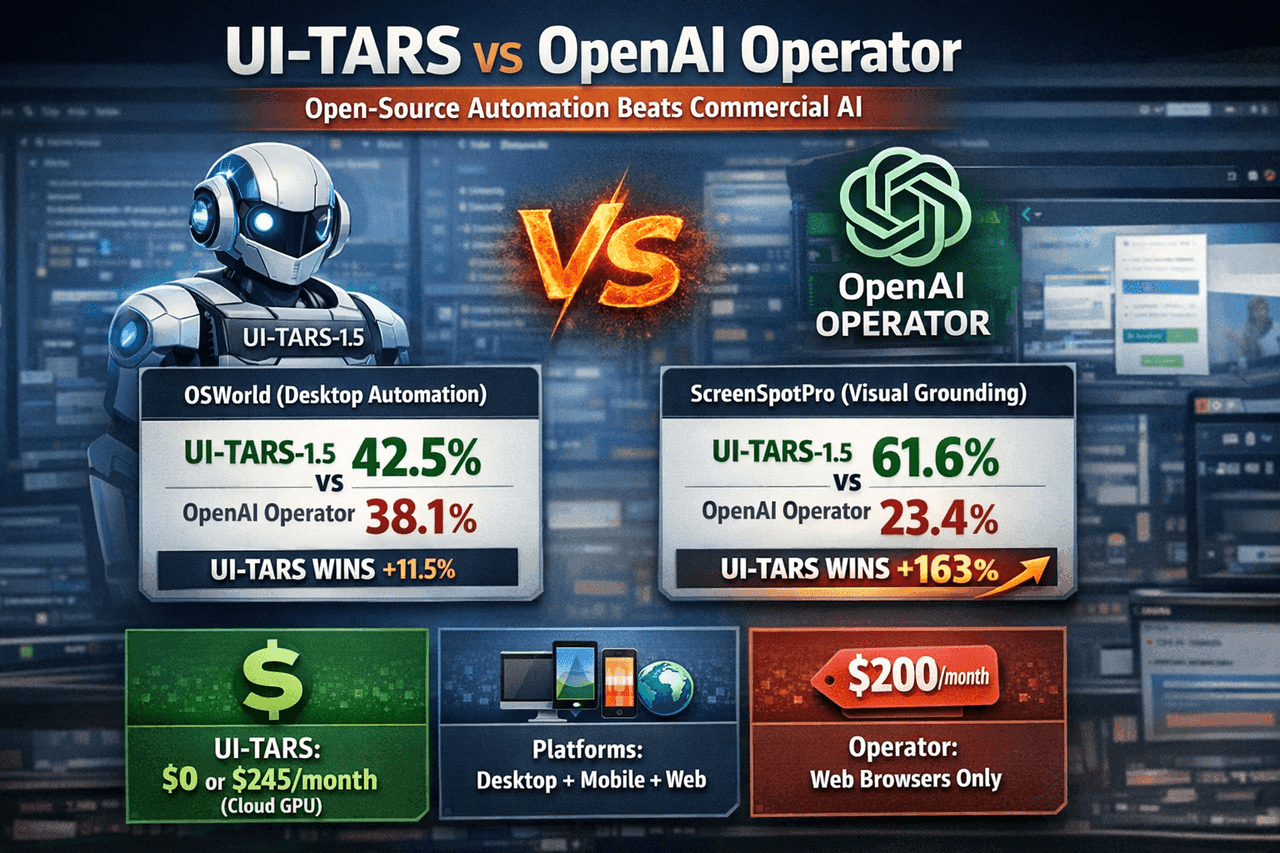

UI-TARS vs OpenAI Operator: Open-Source Desktop Automation Beats Commercial AI (Benchmark Analysis)

An evidence-driven, benchmark-level teardown of UI-TARS-1.5 vs OpenAI Operator, showing how an open-source, self-hosted GUI agent outperforms a $200/month commercial alternative in desktop automation, visual grounding, mobile support, and cost efficiency—with only narrow concessions on web-only tasks. This analysis cuts through marketing claims, exposes benchmark replication gaps, and delivers a pragmatic deployment playbook for engineers and enterprises building real automation at scale.

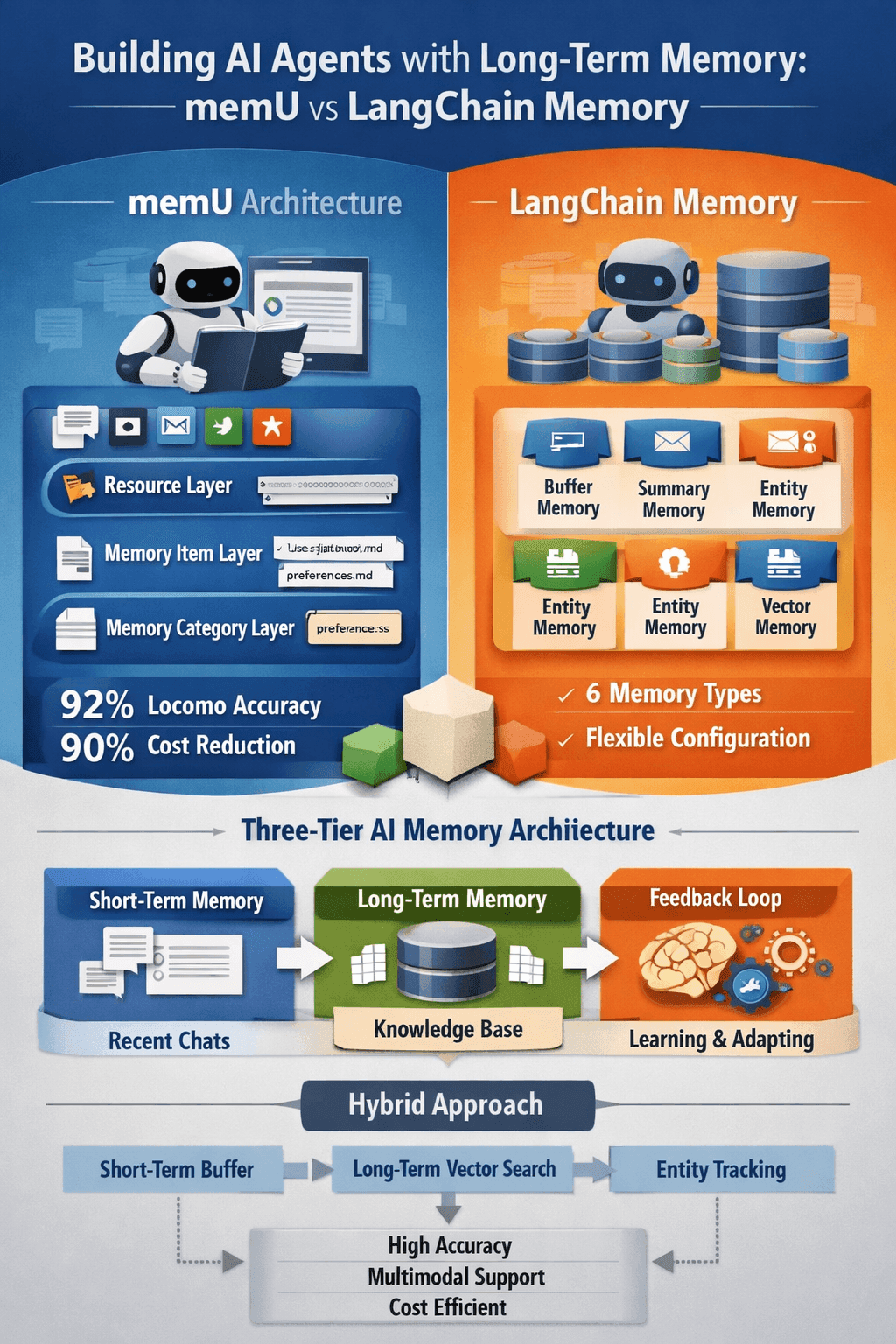

Building AI Agents with Long-Term Memory: memU vs LangChain Memory (Complete Architecture Guide)

A deep, production-grade comparison of AI agent memory architectures in 2026. This guide breaks down how memU and LangChain Memory work at a system level—covering accuracy benchmarks, cost trade-offs, multimodal support, and real-world deployment patterns—so you can choose the right long-term memory strategy for building AI agents that truly remember, learn, and evolve.

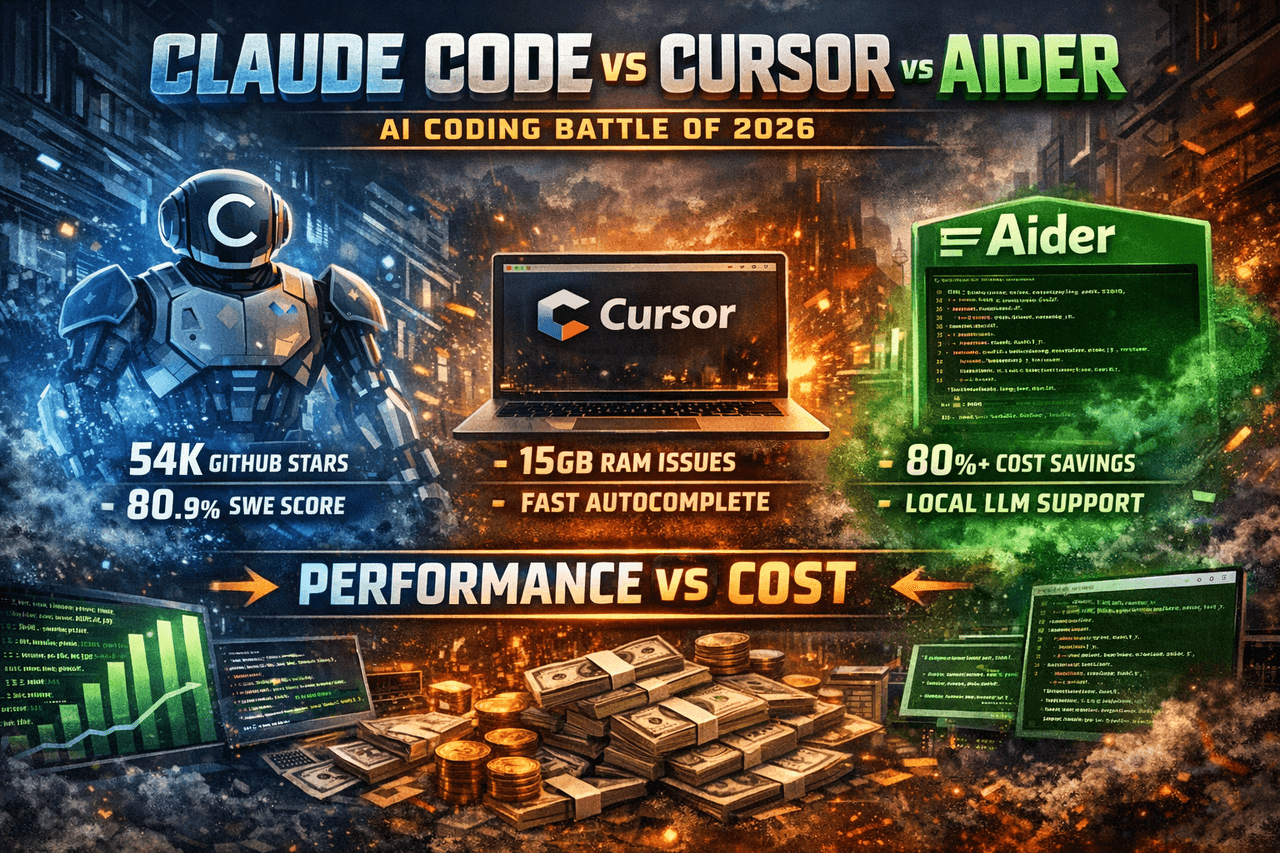

Claude Code vs Cursor vs Aider: The Terminal AI Coding Battle of 2026 (Complete Performance + Cost Breakdown)

A production-grade comparison of Claude Code, Cursor, and Aider in 2026. This deep dive cuts through hype and marketing to analyze real performance, SWE-bench results, memory behavior, pricing models, and enterprise viability. Based on 500+ developer reports and real-world benchmarks, it explains who should use what, when, and why—including hybrid workflows that outperform any single tool.

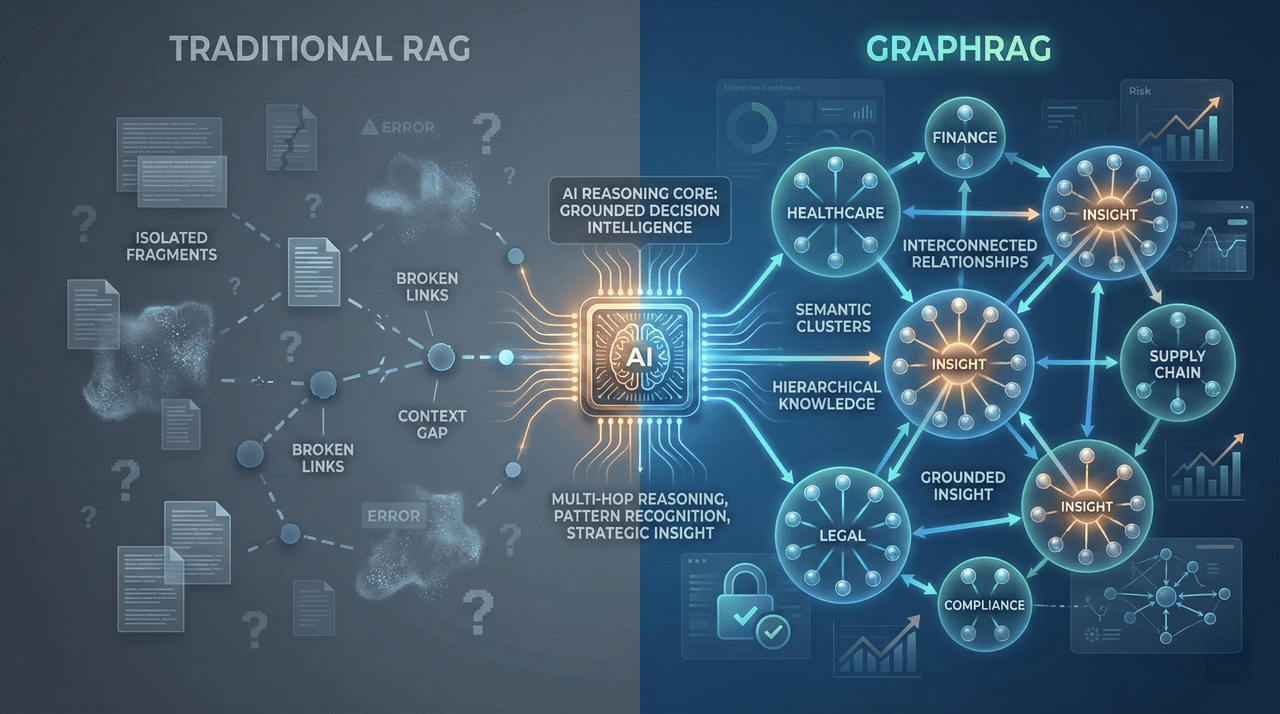

GraphRAG Explained: Next-Generation Knowledge Retrieval for Enterprise

A deep, enterprise-grade breakdown of GraphRAG and why traditional vector-based RAG systems silently fail on complex business questions. This guide explains how graph-based retrieval delivers 3–4× higher accuracy, enables multi-hop reasoning, reduces hallucinations, and unlocks strategic insights that embedding-only systems cannot—complete with real benchmarks, cost models, and deployment frameworks for 2026.

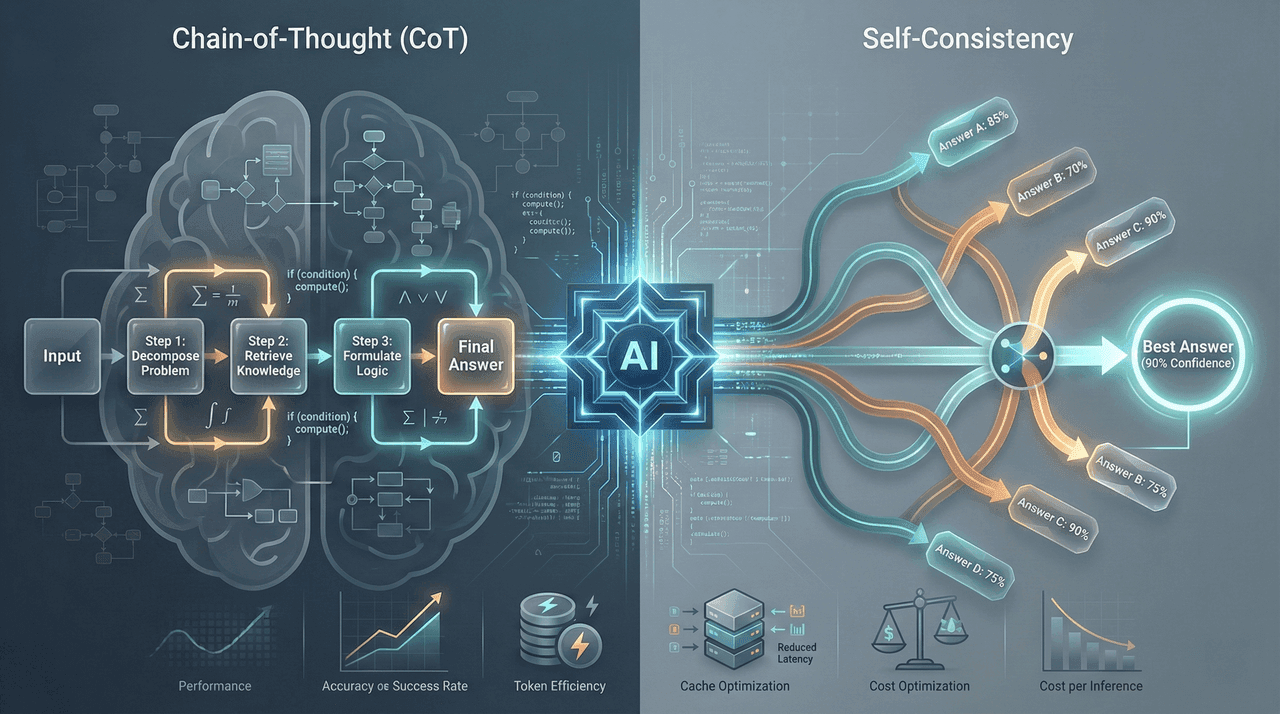

Advanced Prompt Engineering 2026: Chain-of-Thought + Self-Consistency

A comprehensive 2026 guide to advanced prompt engineering techniques, focusing on Chain-of-Thought and Self-Consistency. This article explains how to systematically improve LLM reasoning accuracy by 40–60%, reduce inference costs by up to 70%, and deploy production-grade prompting frameworks using real benchmarks, implementation templates, and enterprise-ready workflows.

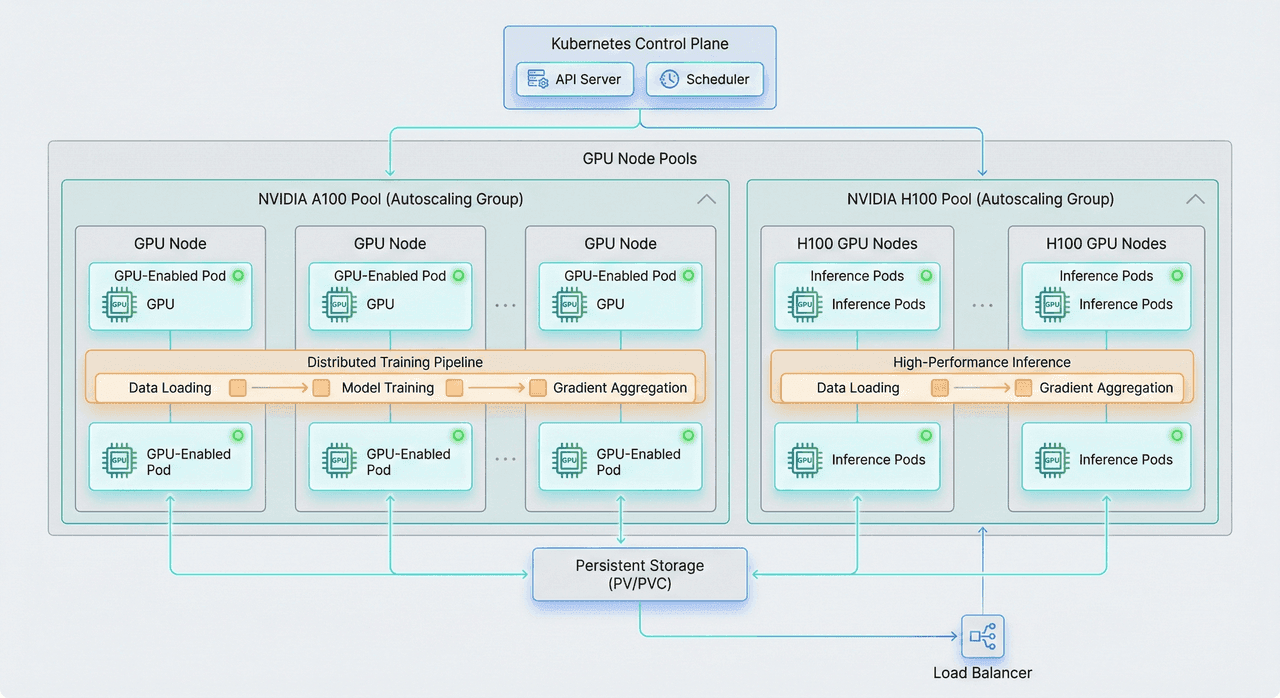

Kubernetes for AI Workloads: GPU Scheduling & Autoscaling Guide (2026)

A deep technical, production-grade guide to running AI and ML workloads on Kubernetes in 2026. This article covers GPU scheduling, MIG-based partitioning, Kueue and gang scheduling, DCGM-powered observability, multi-node distributed training, and intelligent autoscaling—showing how enterprises can increase GPU utilization from 20% to 70–80% while cutting infrastructure costs by up to 70%.

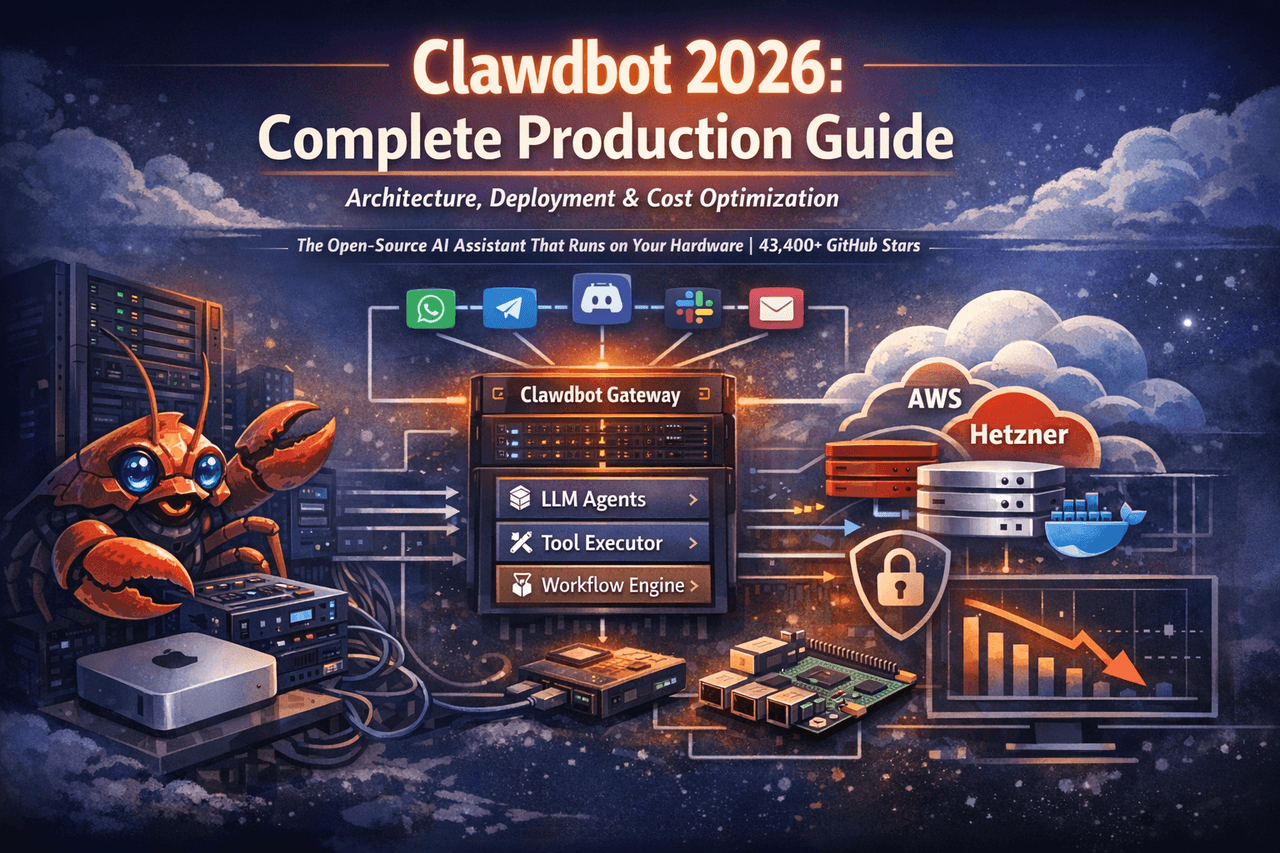

Clawdbot 2026: Complete Production Guide – Architecture, Deployment & Cost Optimization

Clawdbot is the first truly agentic, self-hosted AI assistant that runs entirely on your own hardware. This production-grade guide breaks down its real-world architecture, multi-channel integrations, security model, deployment strategies, and hard cost optimization data—based on 1,100+ live deployments. Written for CTOs, engineers, and technical decision-makers who need autonomy, privacy, and measurable ROI from AI automation.

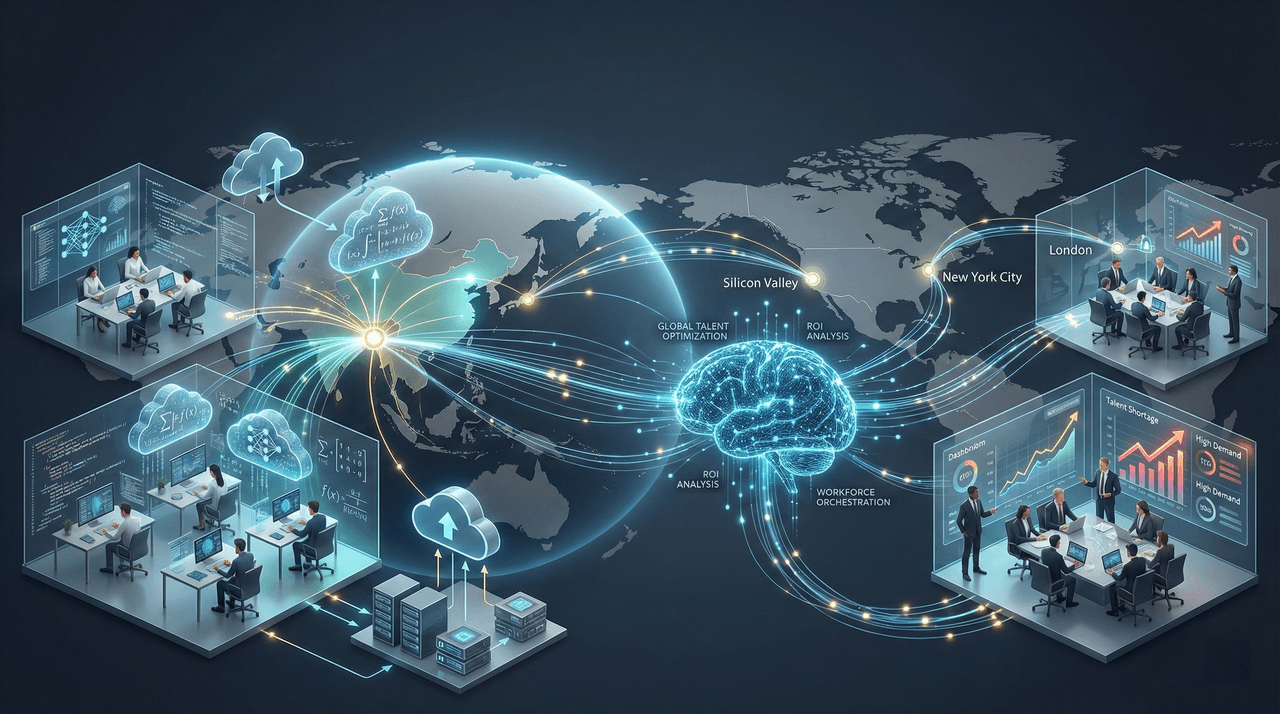

How to Hire AI Engineers in 2026: The Bangladesh Talent Advantage

A comprehensive, data-driven guide to hiring AI engineers in 2026, revealing why Bangladesh has emerged as the most strategic global talent hub. This article covers market dynamics, cost comparisons, skill requirements, vetting frameworks, onboarding playbooks, and retention strategies—showing how companies can achieve 68–77% cost savings without sacrificing quality or speed.

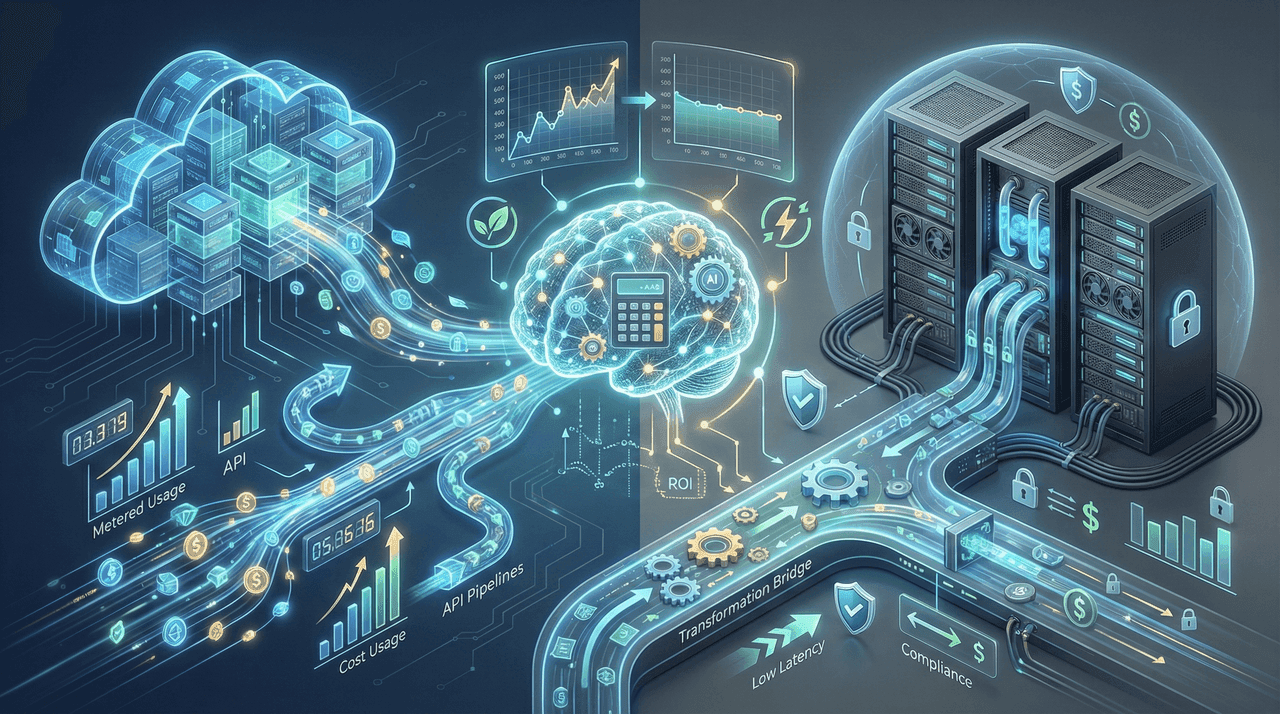

AI Cost Optimization 2026: Cloud to On-Prem Migration Calculator & ROI Analysis

A comprehensive, data-backed guide to AI cost optimization in 2026, comparing cloud APIs, on-prem GPU infrastructure, and hybrid deployments. This analysis includes real-world TCO models, break-even formulas, performance trade-offs, compliance considerations, and a practical migration calculator—helping enterprises reduce AI infrastructure costs by 40–70% while maintaining performance and governance.

LangChain vs LlamaIndex vs Haystack: The 2026 RAG Framework Battle

A data-driven, production-focused comparison of LangChain, LlamaIndex, and Haystack for enterprise RAG systems in 2026. This guide analyzes real-world performance, total cost of ownership, security and compliance readiness, developer experience, and scalability—helping CTOs and AI architects avoid costly framework mistakes and choose the right stack for production-grade retrieval systems.

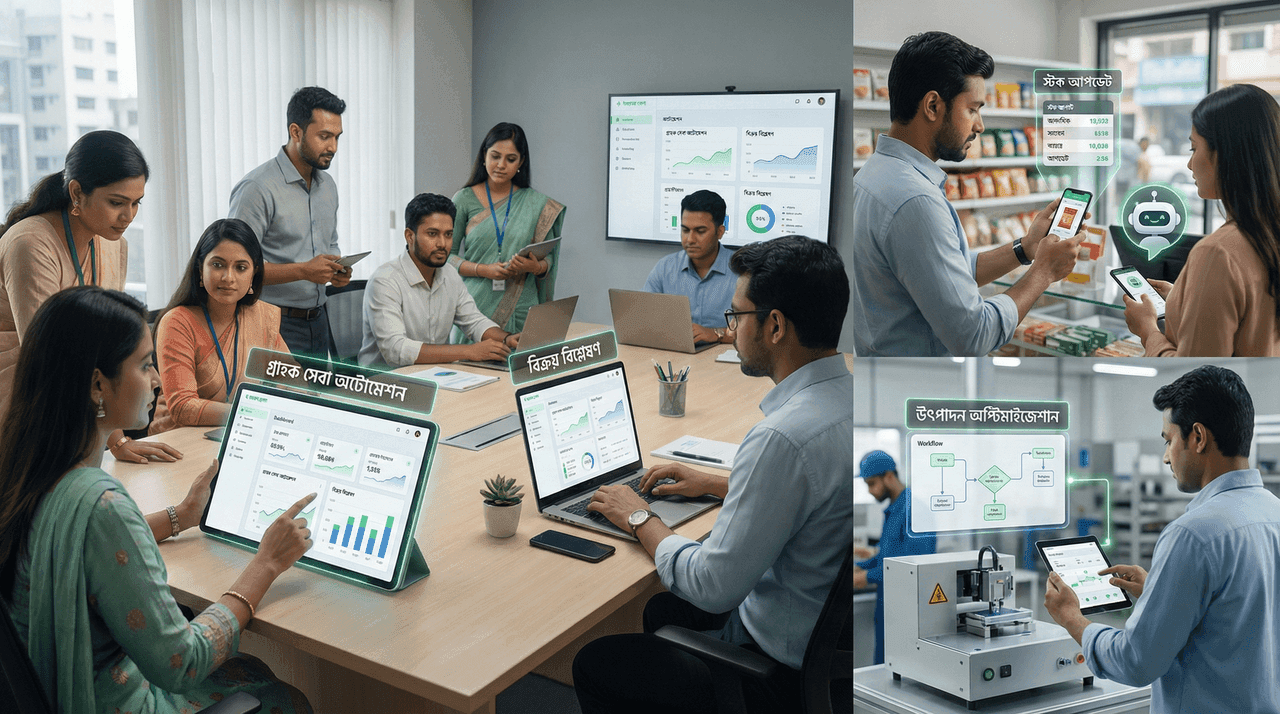

AI for Small Businesses in Bangladesh: Cost-Effective Solutions for Local Enterprises

AI adoption is no longer expensive or complex for small businesses in Bangladesh. This guide breaks down cost-effective AI solutions, real pricing in BDT and USD, and practical use cases—from customer service automation to marketing and operations—that deliver measurable ROI within months. Built on real-world implementations, it shows how Bangladeshi SMBs can use affordable AI and Bengali language processing to compete and scale without increasing headcount.

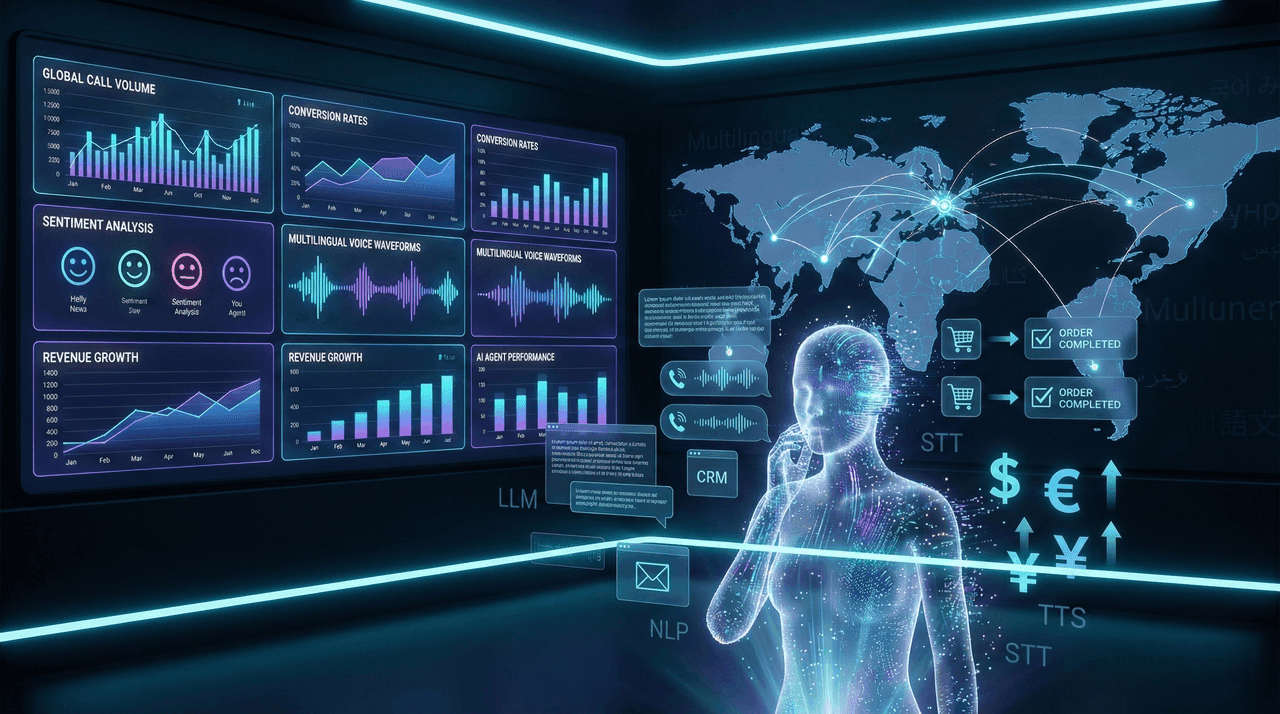

Building AI-Powered Call Centers: Complete Guide to Voice Commerce and Automated Sales Systems

A decision-grade, end-to-end guide to building AI-powered call centers and voice commerce systems in 2026. Covers real enterprise economics, technical architecture, platform comparisons, ROI benchmarks, and a proven 90-day deployment framework—focused on revenue growth, cost reduction, and multilingual scalability for global and South Asian markets.

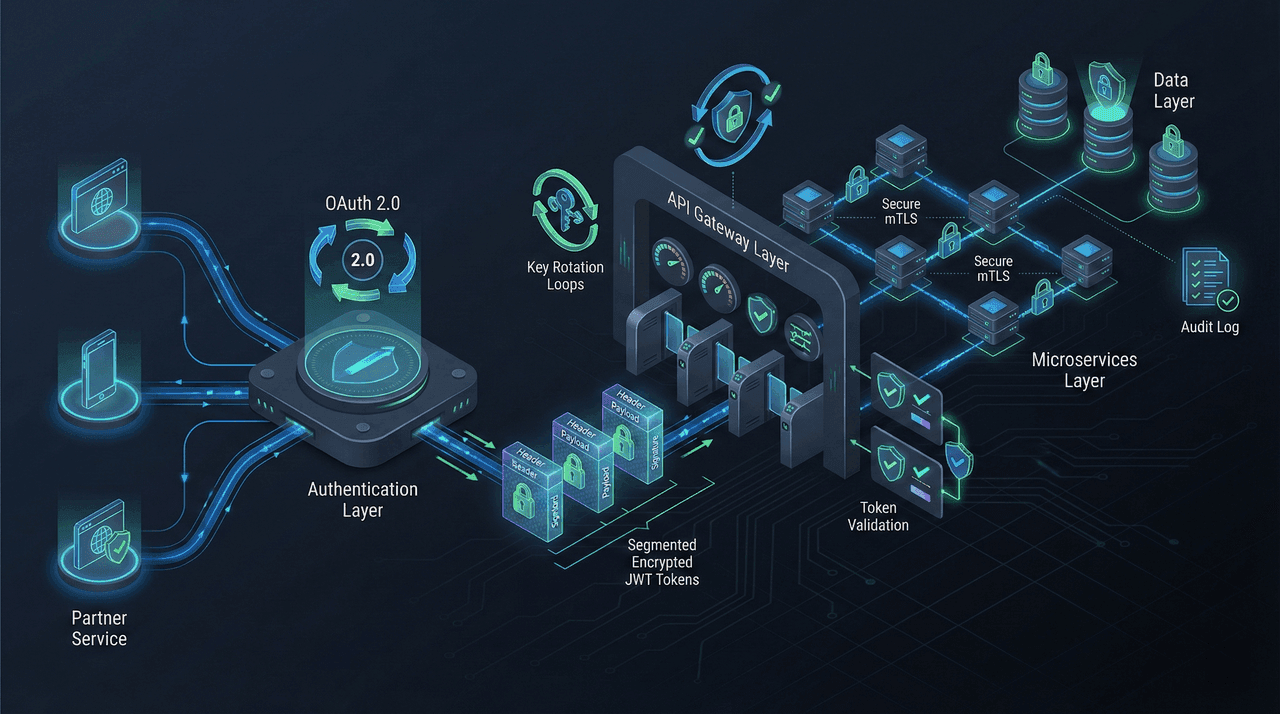

Building Production APIs with Authentication: OAuth 2.0, JWT, and API Key Management in 2026

A decision-grade, production-focused guide to API authentication in 2026. Learn how to correctly implement OAuth 2.0, JWT, API keys, and mTLS for enterprise-scale systems—covering token lifecycles, refresh rotation, zero-trust architecture, compliance requirements, and real-world failure patterns that cost organizations millions when done wrong.

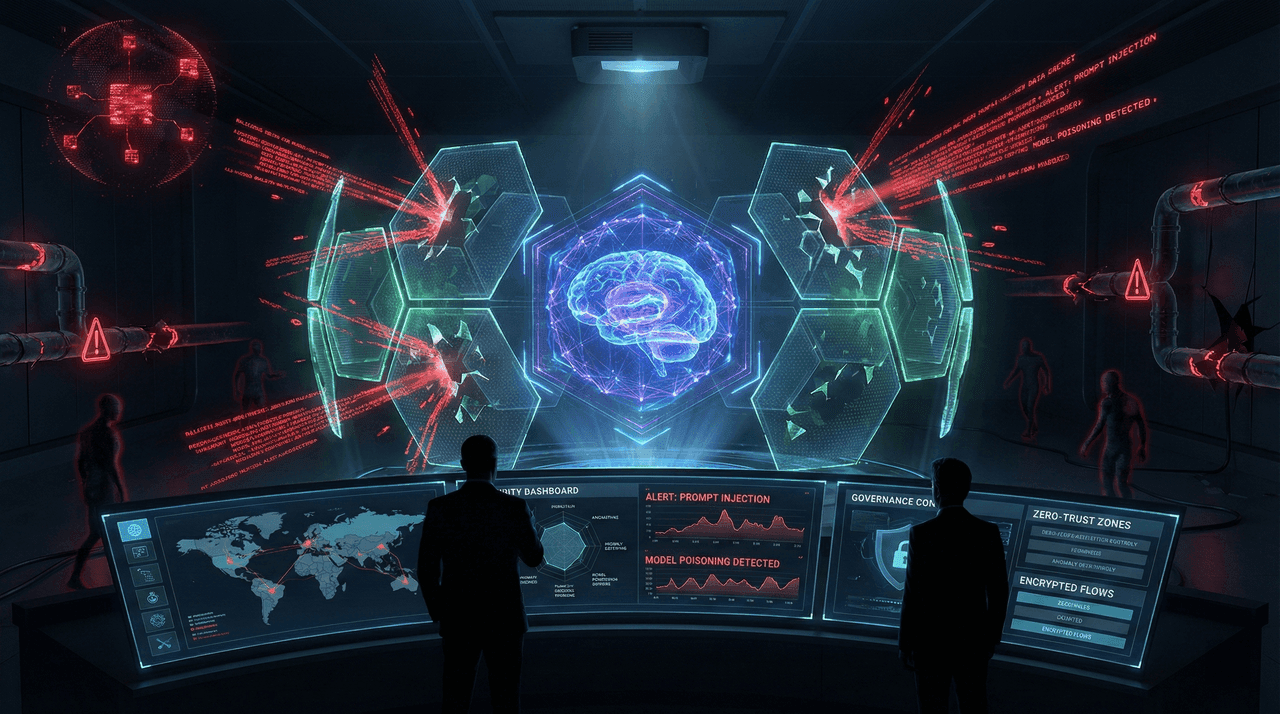

AI Security in 2026: Defending Against Prompt Injection, Model Poisoning, and Shadow AI

AI security is now a board-level risk. In 2026, prompt injection, model poisoning, and shadow AI are driving multimillion-dollar breaches that traditional security tools cannot detect. This guide provides a field-tested, enterprise-grade framework for defending AI systems, closing governance gaps, and achieving regulatory compliance before attackers exploit them.

Supply Chain Security for AI: SBOM, Dependency Management, and Model Provenance in 2026

Enterprise AI systems are increasingly compromised not by direct attacks, but by insecure supply chains. This guide delivers a 2026-ready framework for securing AI systems using SBOMs, dependency vulnerability management, and cryptographic model provenance—covering real-world attack vectors, DevSecOps automation, and compliance-aligned implementation strategies for CTOs and AI leaders.

Post-Quantum Cryptography Readiness: Preparing Your AI Systems for Quantum Threats in 2026

Quantum computing will break today’s AI encryption years before most enterprises are ready. This guide explains NIST’s post-quantum cryptography standards, the real “harvest now, decrypt later” threat, and a practical 2026 roadmap to secure AI models, data pipelines, APIs, and infrastructure before quantum attacks become unavoidable.

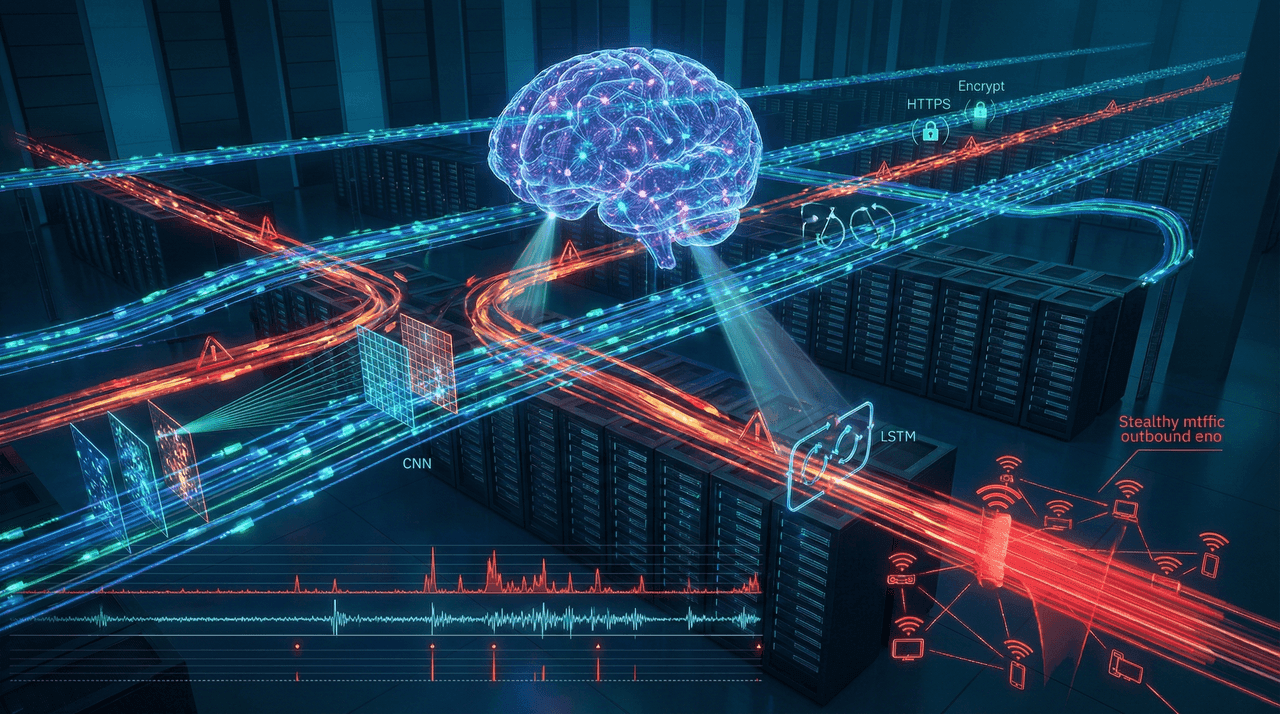

Real-Time Intrusion Detection Using AI: Building Network Security Systems with Deep Learning

AI-powered intrusion detection has become enterprise-critical in 2026. This guide explains how real-time intrusion detection systems built with deep learning, especially hybrid CNN-LSTM architectures, detect modern threats with near-perfect accuracy, drastically reduce false positives, and outperform traditional signature-based defenses. It covers architectures, implementation frameworks, cost-benefit analysis, compliance implications, and decision strategies for deploying next-generation network security at scale.

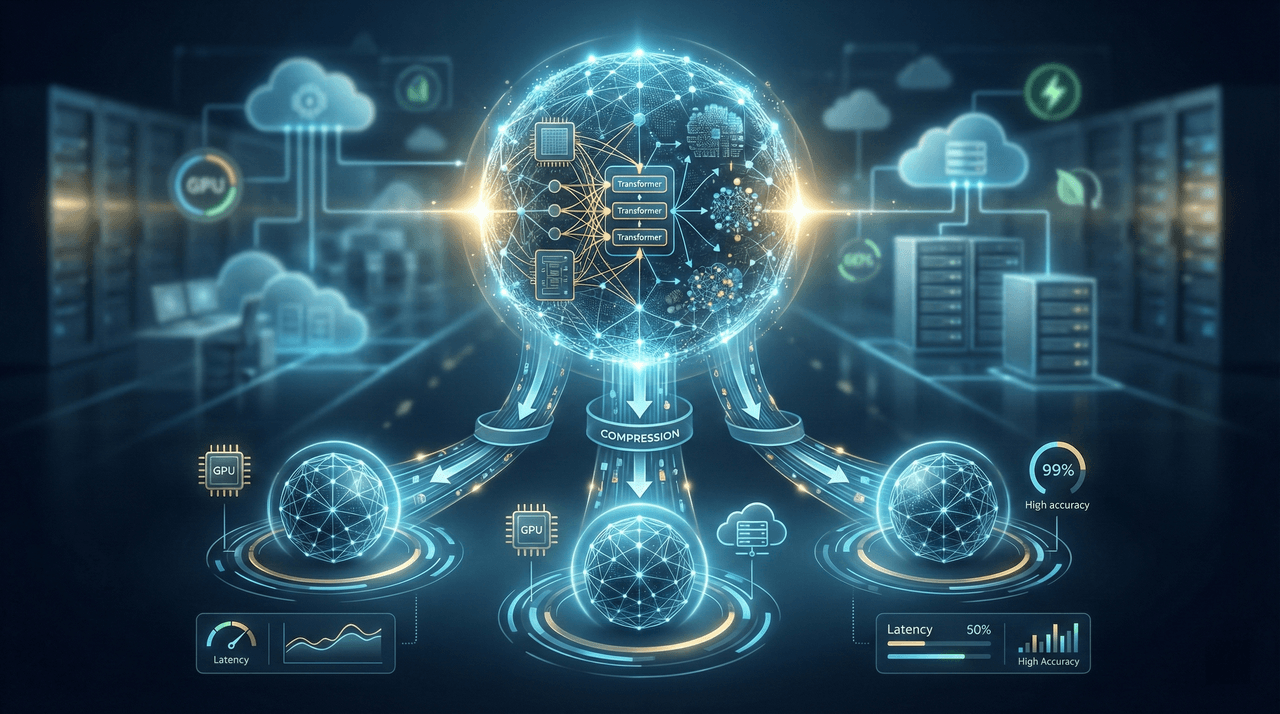

Transfer Learning and Model Distillation: Training Cost-Effective AI Models in 2026

In 2026, training AI models from scratch is no longer a badge of sophistication—it is a financial liability. This deep-dive guide explains how transfer learning, model distillation, and parameter-efficient fine-tuning enable enterprises to deploy production-grade AI models up to 20× cheaper and 10× faster than traditional custom training. Backed by real-world benchmarks, cost breakdowns, and implementation frameworks, this article provides a decision-grade roadmap for building high-performance AI systems without enterprise-scale budgets.

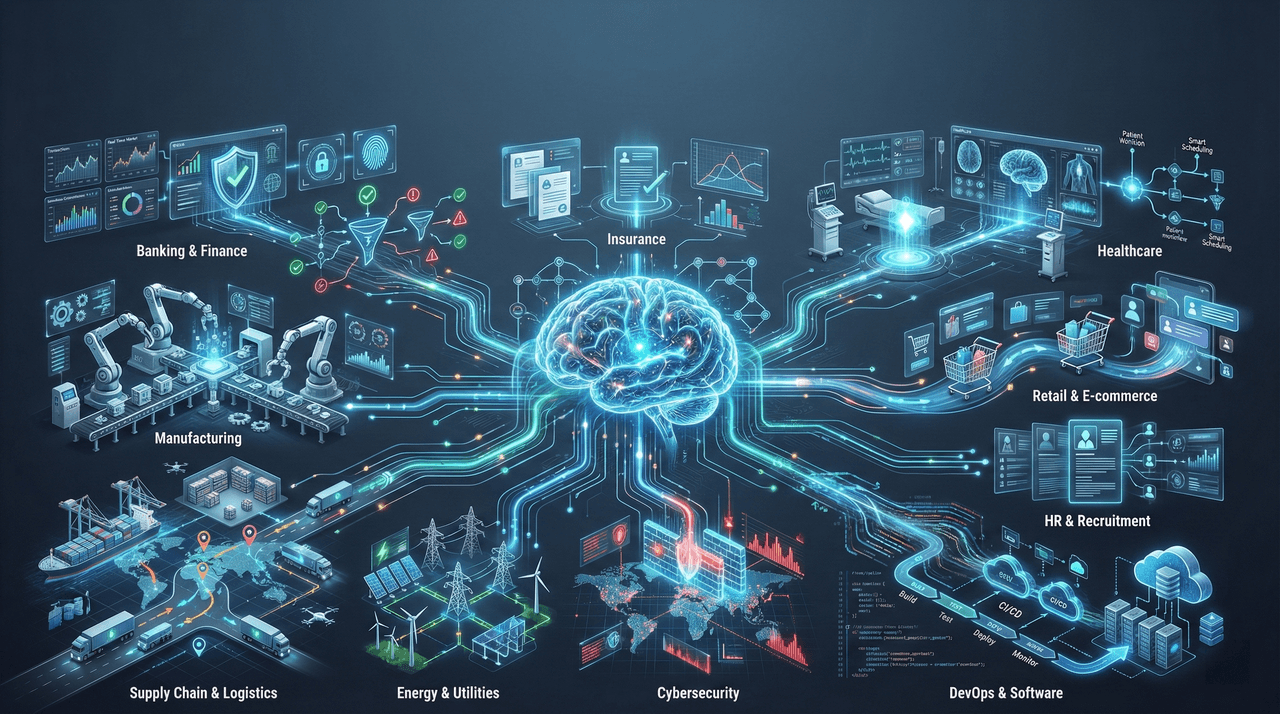

Real-World Agentic AI: 10 Production Use Cases Across Industries

A comprehensive, production-focused analysis of how agentic AI is transforming enterprise operations across ten industries in 2026. This guide examines real-world deployments, quantified ROI, architectural patterns, and governance frameworks that separate scalable success from failed pilots—helping executives move from experimentation to mission-critical automation with confidence.

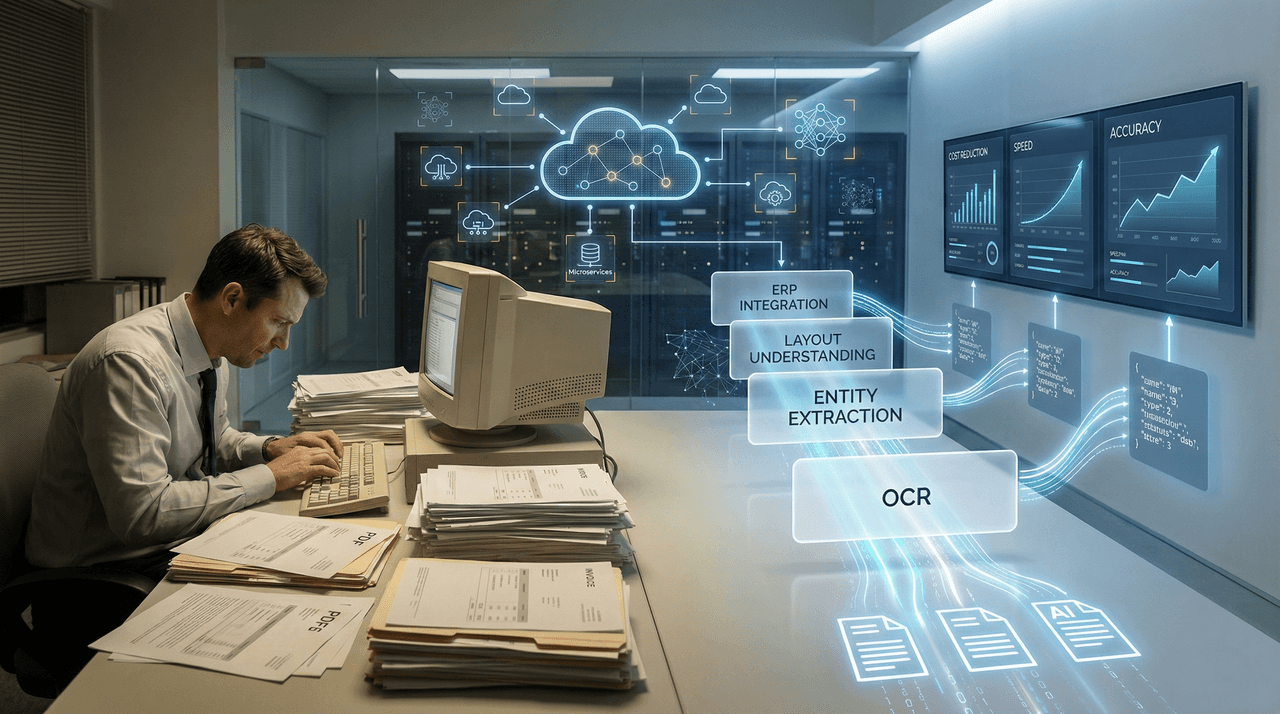

Document AI Processing at Scale: Building Enterprise-Grade PDF, OCR, and Text Extraction Pipelines

Enterprises processing tens of thousands of documents annually are quietly burning millions on manual workflows. This guide breaks down how modern Document AI—combining OCR, layout intelligence, and multimodal models—cuts processing costs by up to 80%, accelerates cycle times from weeks to hours, and delivers measurable ROI within the first year. Learn how to design, deploy, and scale an enterprise-grade document processing pipeline that actually survives production.

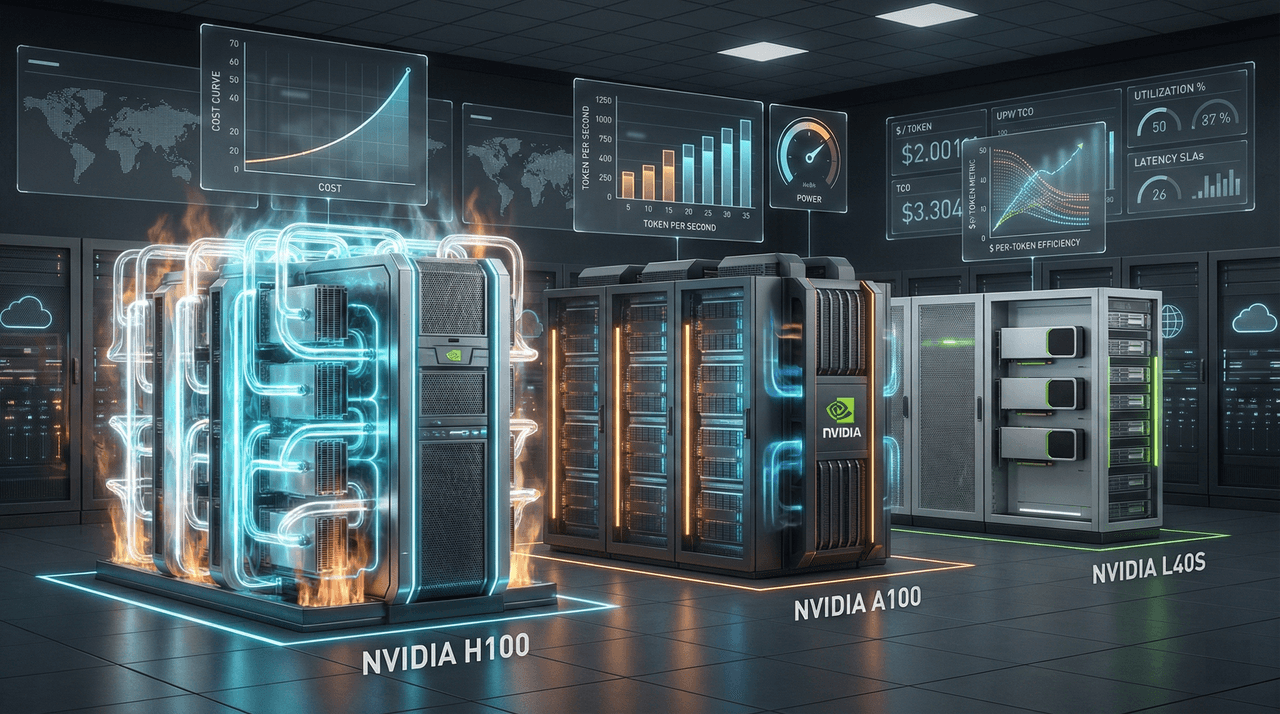

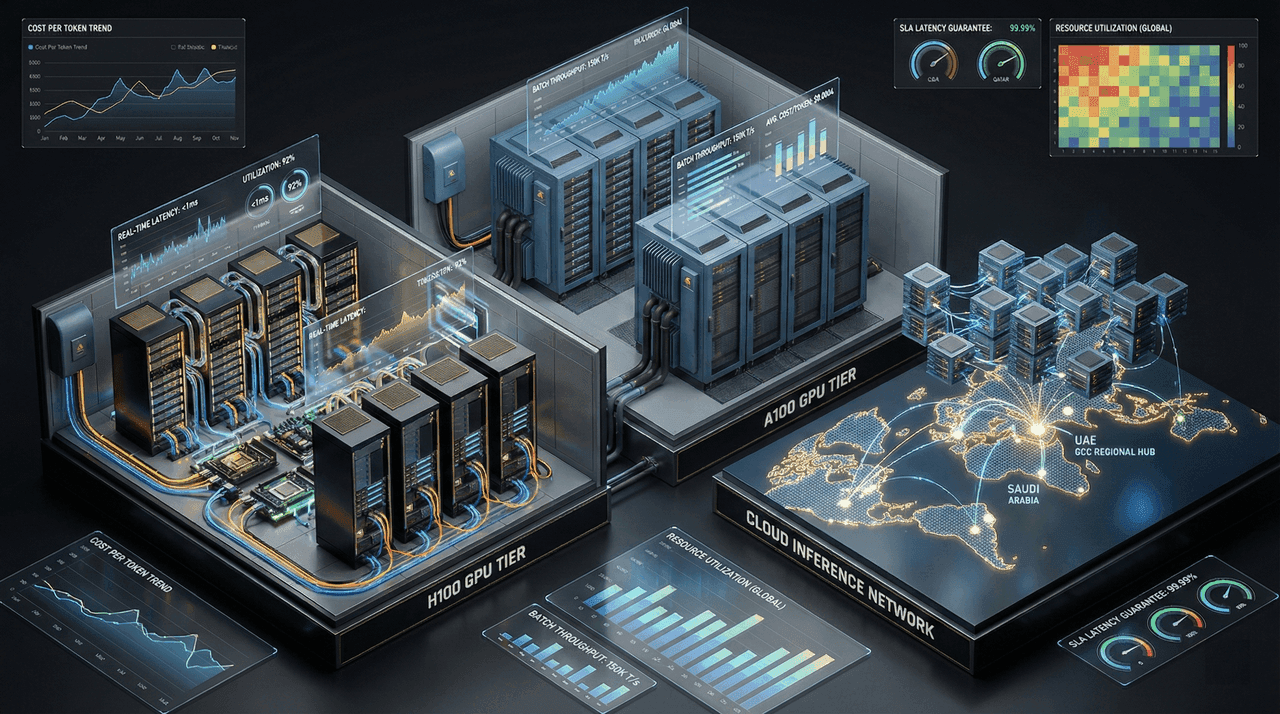

GPU Economics 2026: H100 vs A100 vs L40S – Complete Cost-Performance Analysis for AI Workloads

Most AI teams overspend 30–50% on GPU compute by choosing the wrong hardware for the wrong workloads. This guide breaks down the real 2026 economics of NVIDIA H100, A100, and L40S—covering training, fine-tuning, and inference—to help engineering leaders optimize cost-per-token, total cost of ownership, and deployment strategy instead of chasing raw specs.

Synthetic Data Generation for AI Training: Complete Python Implementation Guide 2026

Synthetic data is no longer experimental—it is becoming core AI infrastructure. This guide delivers a production-grade framework for generating, evaluating, and deploying synthetic data using Python in 2026. Learn how enterprises replace slow, risky data collection with privacy-preserving synthetic pipelines, apply differential privacy for GDPR/HIPAA compliance, and validate real-world model utility using industry-grade metrics. Includes hands-on Python implementations, tool comparisons, and deployment architectures used by top-performing AI teams.

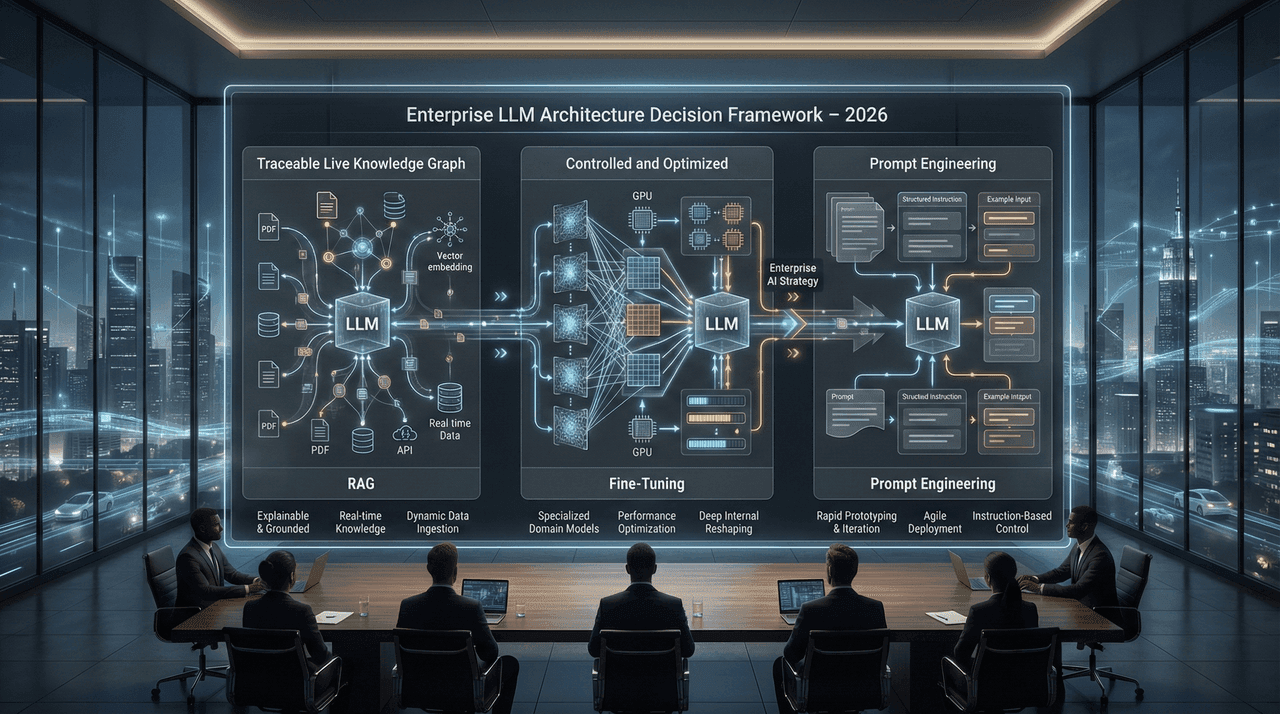

RAG vs Fine-Tuning vs Prompt Engineering: The 2026 Decision Framework for Enterprise LLM Applications

Choosing between RAG, fine-tuning, and prompt engineering is no longer a technical preference—it’s a strategic enterprise decision. This 2026 decision framework breaks down real-world trade-offs across cost, scalability, explainability, and regulatory risk, helping CTOs and architects select the right LLM architecture without costly missteps.

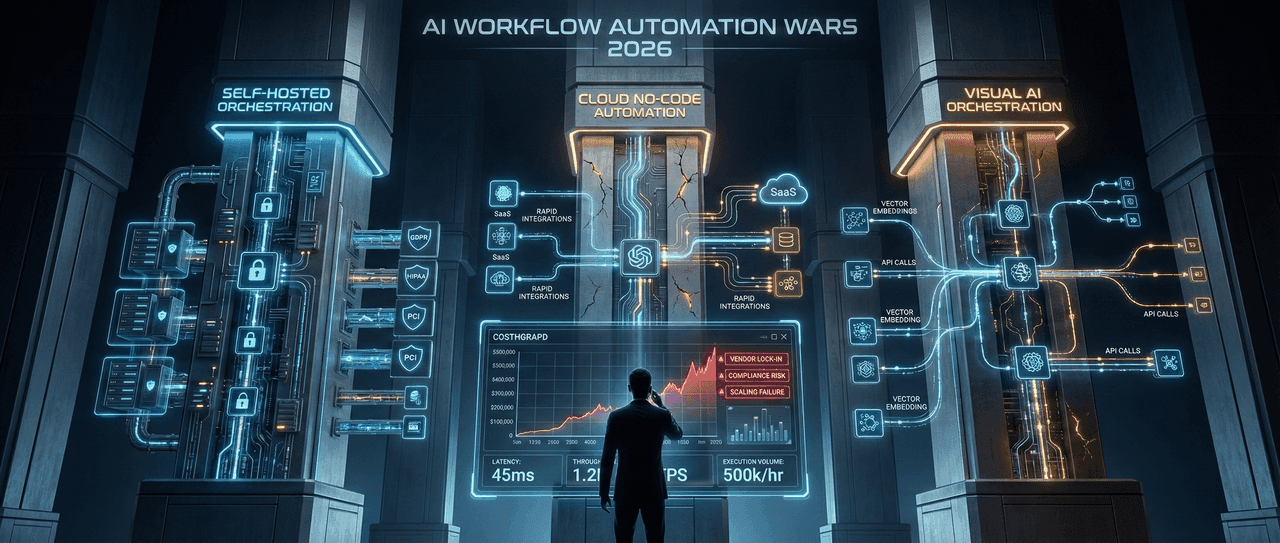

AI Workflow Automation Wars 2026: n8n vs Zapier vs Make for AI Engineers

A decision-grade comparison of n8n, Zapier, and Make for AI engineers in 2026. This analysis exposes the $500K enterprise mistake driven by poor workflow orchestration choices—breaking down real performance benchmarks, total cost of ownership, multi-agent AI architecture support, and compliance-driven deployment trade-offs for regulated and high-scale environments.

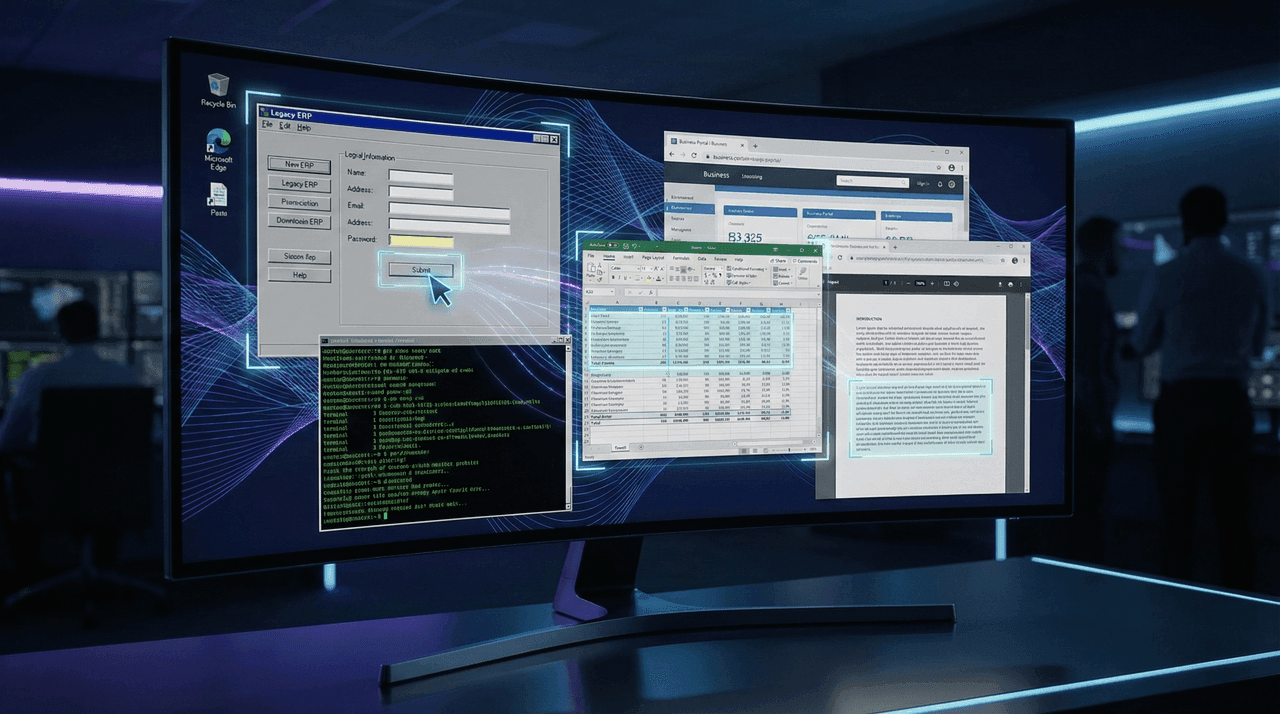

Claude Computer Use API: Building Autonomous Desktop Automation Agents (Complete Implementation Guide)

Claude’s Computer Use API enables autonomous AI agents to control real desktop environments—automating legacy systems, enterprise applications, and cross-application workflows without APIs or RPA tooling. This in-depth implementation guide explains how Computer Use works, where it outperforms traditional RPA, and how enterprises are achieving 60–75% cost reductions with production-grade Python agents, secure architectures, and scalable automation frameworks.

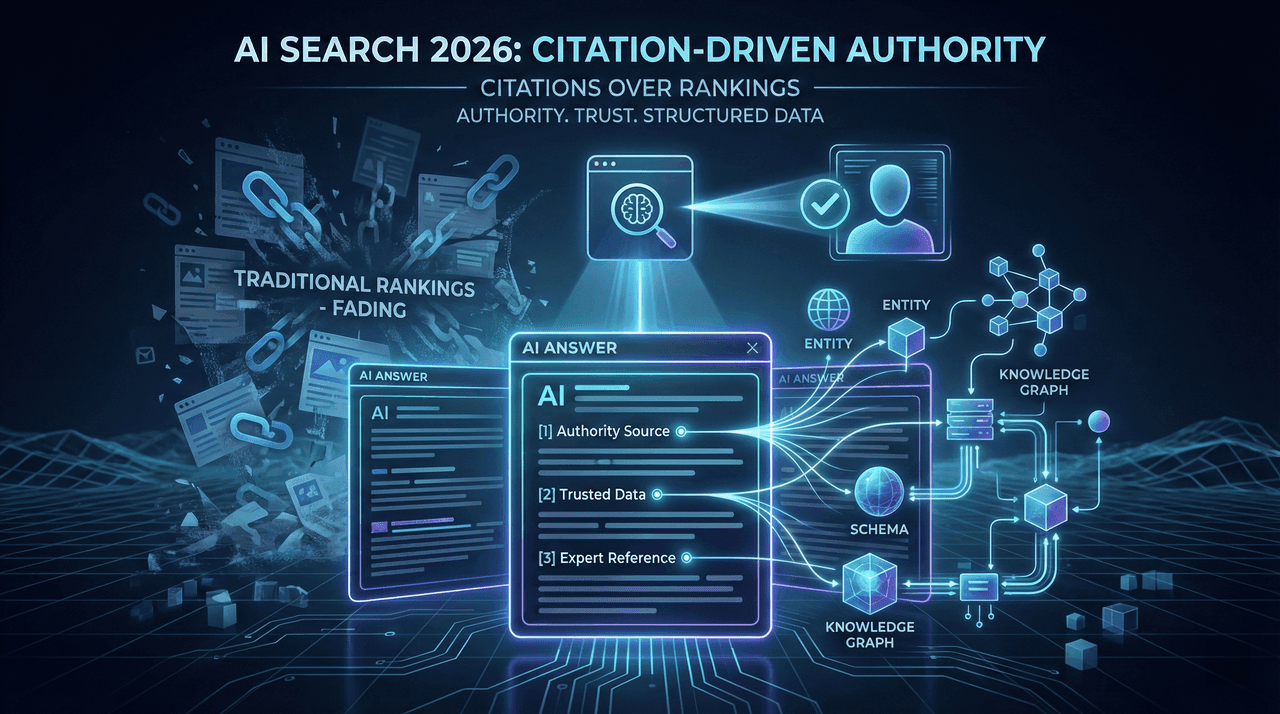

AI Search Optimization in 2026: Why Rankings No Longer Matter

Traditional SEO is no longer the primary visibility driver in AI-driven search. In 2026, rankings increasingly fail to produce traffic while AI systems surface answers directly—citing authoritative sources instead of sending clicks. This analysis explains why 37% of B2B SaaS companies lost traffic despite stable rankings, how ChatGPT, Perplexity, and Google AI Overviews select sources, and what enterprises must do to win citations, authority, and measurable ROI in AI-first search environments.

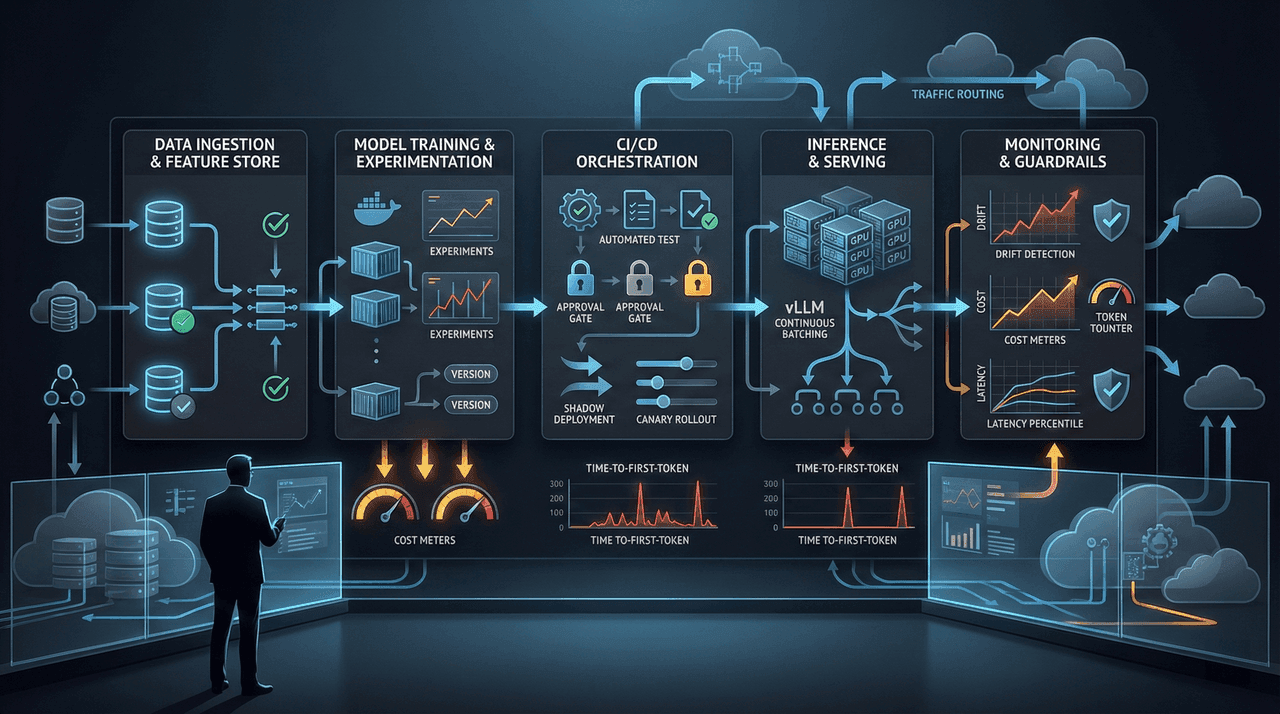

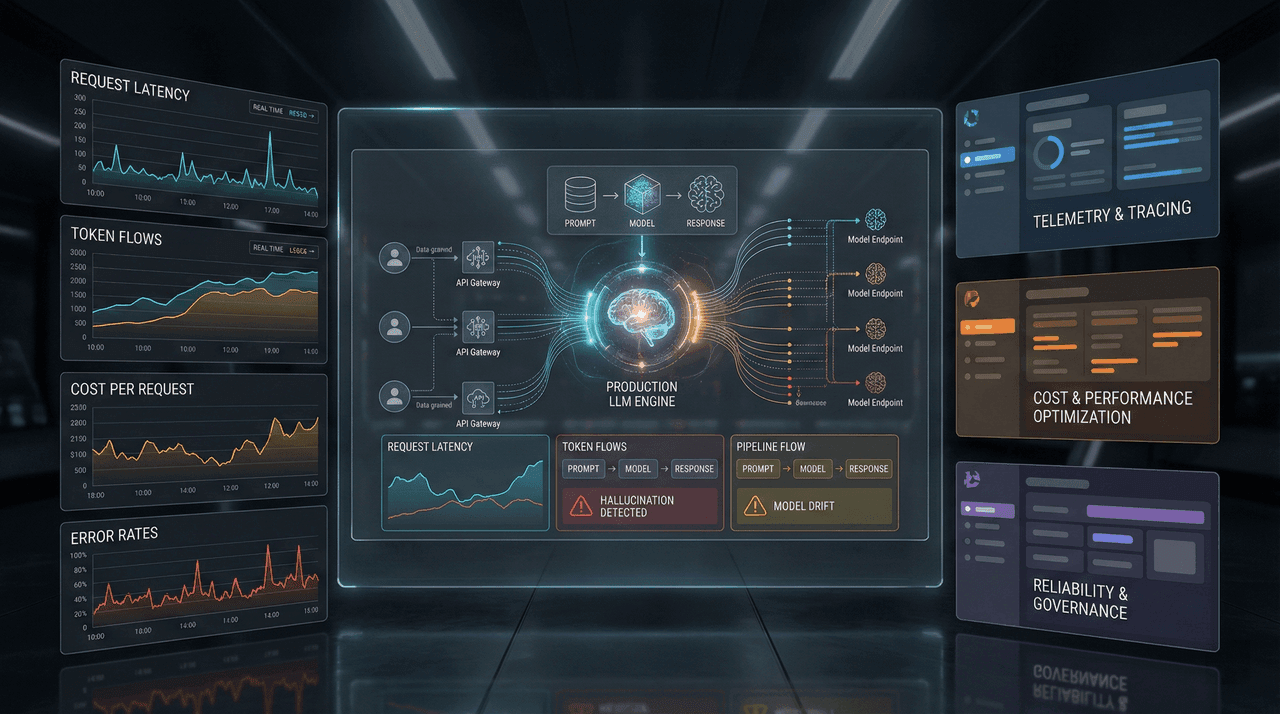

MLOps in 2026: The Complete CI/CD Pipeline for LLM Deployment

Deploying LLMs in production in 2026 is no longer a modeling problem—it is an operational, economic, and reliability challenge. This guide breaks down the complete MLOps CI/CD architecture required to ship large language models at scale without runaway costs, silent failures, or latency collapse. Built for CTOs, MLOps engineers, and architects running real traffic under real budget constraints.

Agentic AI Use Cases: 10 Real Enterprise Implementations with Code Examples (2026)

A decision-grade, production-first analysis of agentic AI in 2026—covering real enterprise deployments, cost breakpoints, failure modes, security risks, and production-tested code examples. This guide separates scalable architectures that deliver 3–6× ROI from pilots that collapse under cost, governance, and operational debt.

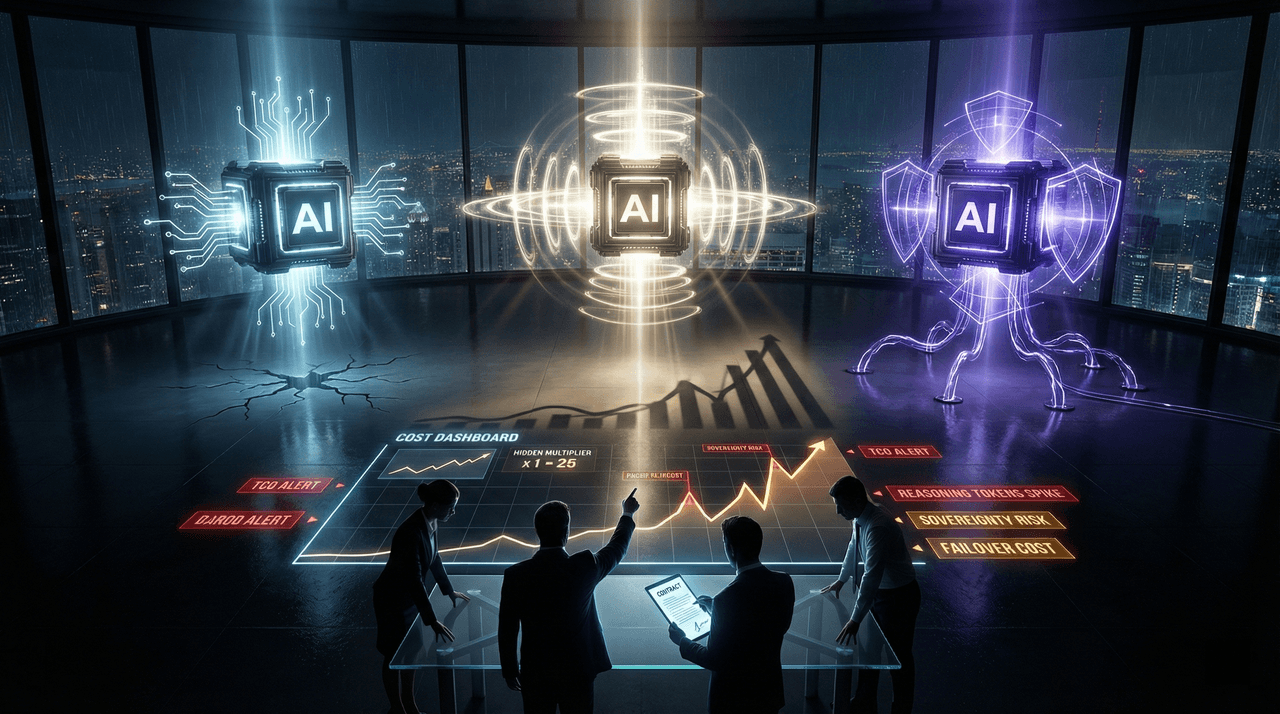

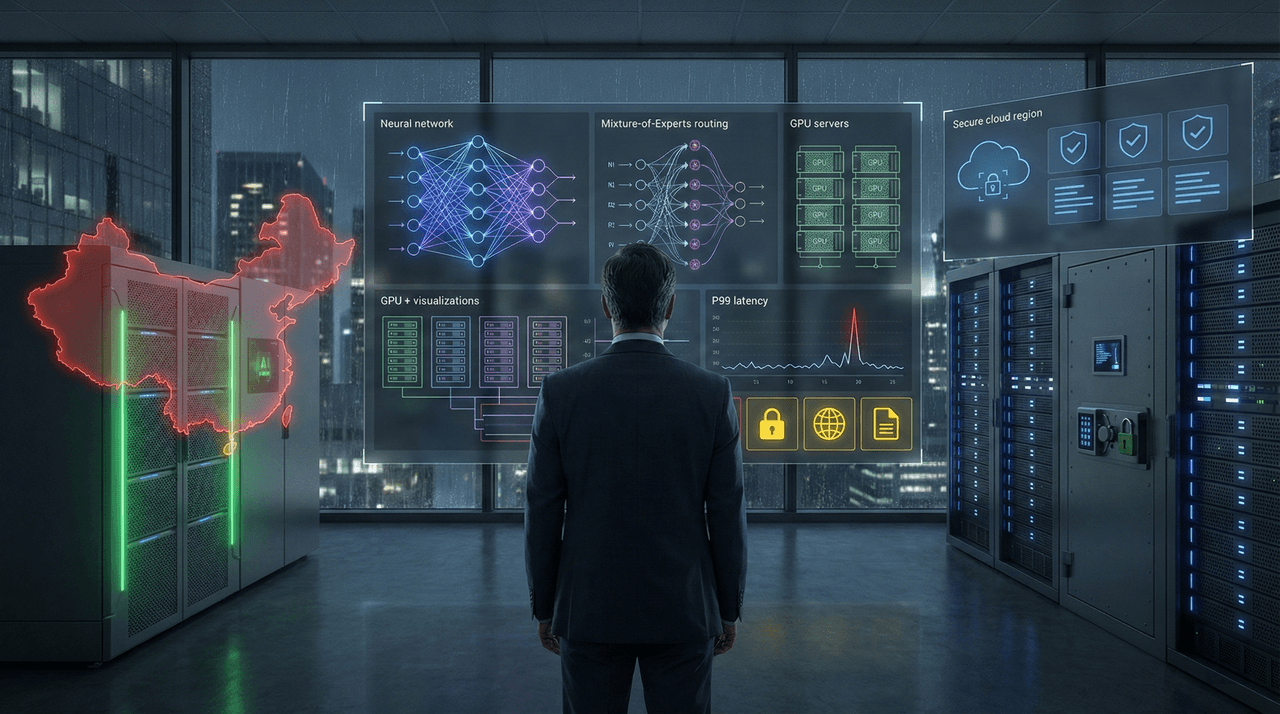

DeepSeek-R1 vs GPT-5 vs Claude 4: The Real LLM Cost-Performance Battle

Three enterprise LLMs. Three pricing illusions. One irreversible procurement decision. After stress-testing DeepSeek-R1, GPT-5, and Claude 4 at 10M+ tokens per day, this analysis exposes where benchmark leaders collapse in production: hidden reasoning multipliers, sovereignty failures, API instability, and runaway total cost of ownership. This is not a benchmark comparison—it is a decision framework for CTOs, architects, and finance leaders responsible for eight-figure AI budgets in 2026.

AI Observability in 2026: Langfuse vs Arize vs Helicone for Production LLM Apps

A decision-grade comparison of Langfuse, Arize, and Helicone for AI observability in 2026, covering cost control, tracing depth, evaluation rigor, and production failure modes that matter at scale.

Blink Agent Builder vs AutoGen vs LangGraph: Which AI Agent Platform in 2026?

A decision-grade comparison of Blink Agent Builder, Microsoft AutoGen, and LangGraph for production AI agents in 2026—covering architecture, hidden costs, scalability limits, security trade-offs, and real-world failure modes that determine whether agent deployments succeed or collapse under enterprise load.

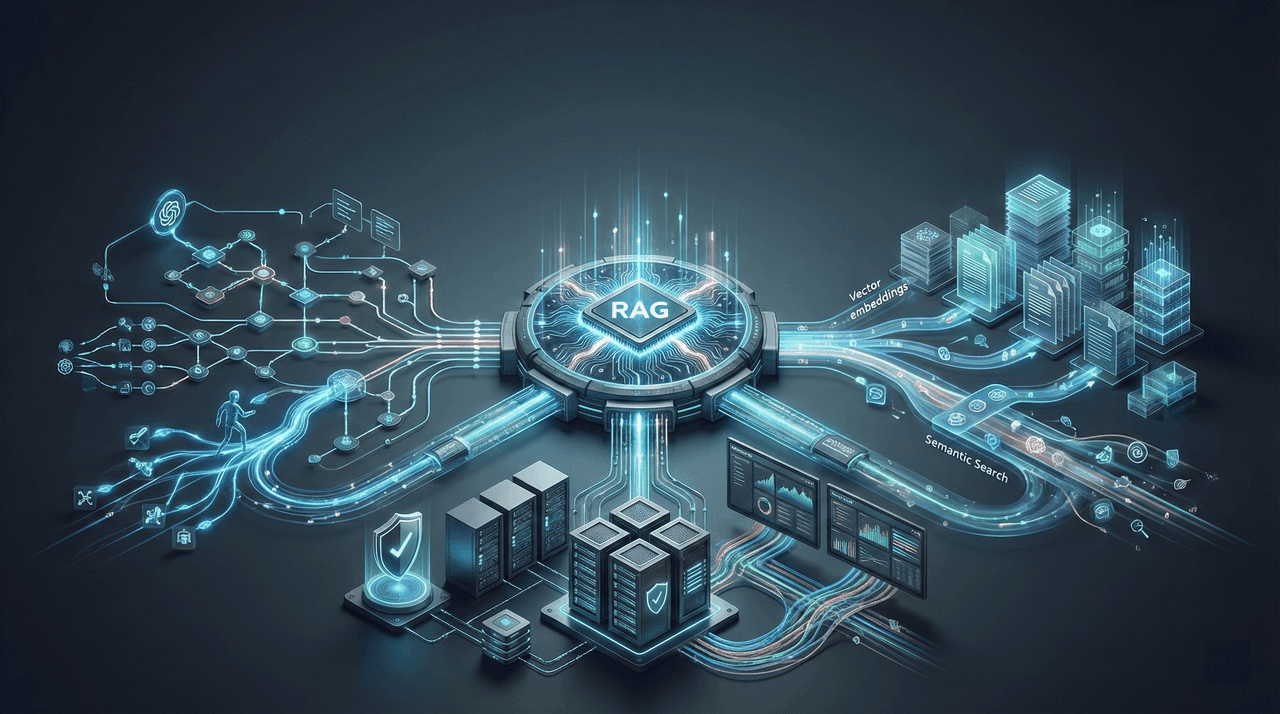

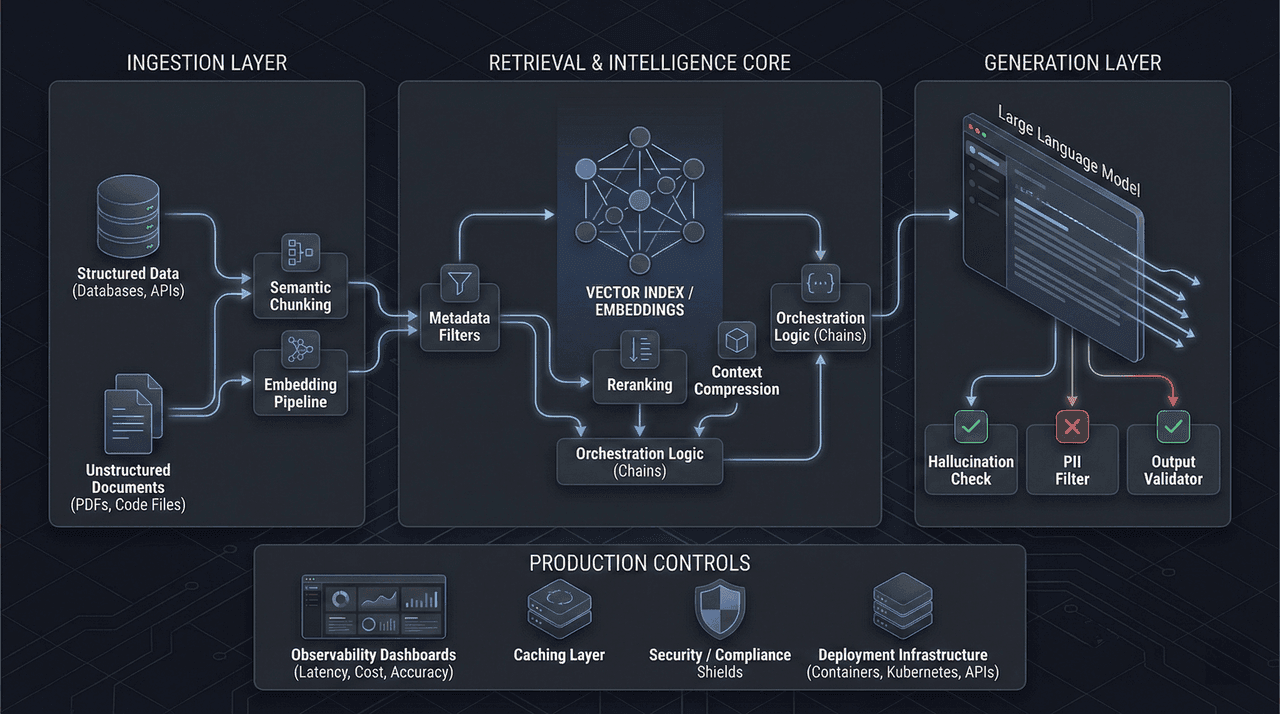

Building Production RAG Systems in 2026: Complete Tutorial with LangChain + Pinecone

A production-grade, end-to-end guide to building Retrieval-Augmented Generation (RAG) systems in 2026. This tutorial covers real-world architecture decisions with LangChain and Pinecone, including chunking strategies, embedding trade-offs, scaling patterns, observability, cost control, and failure modes that break RAG in production.

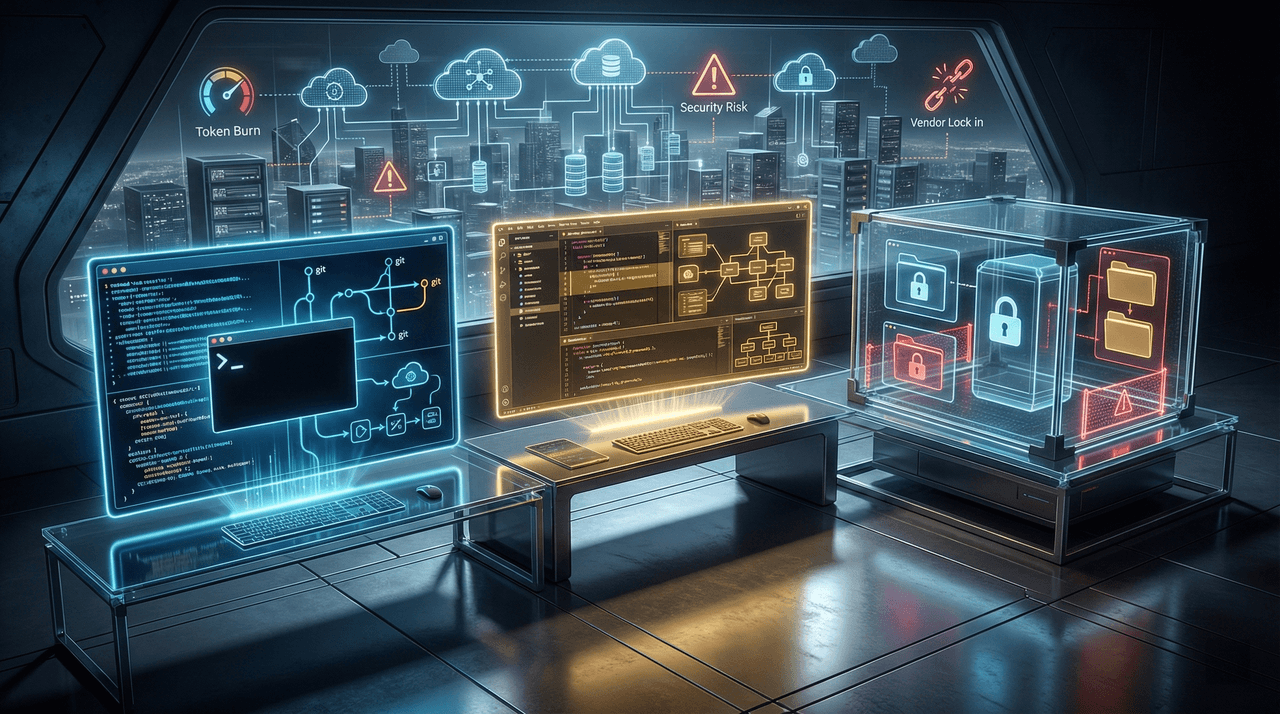

Cowork vs Cursor vs Claude Code: The Ultimate AI Coding Agent Battle for 2026

A decision-grade, production-tested comparison of Claude Cowork, Cursor, and Claude Code that exposes real-world failure modes, token economics, security risks, and vendor lock-in costs behind AI coding agents in 2026. Built from enterprise deployments—not demos—this guide helps engineering leaders choose the tool that minimizes operational risk, not just maximizes hype.

DeepSeek V4 in 2026: The $6M Model That Could Cost You Everything—A Principal's Verdict on China's AI Gambit

DeepSeek V4 promises GPT-4-class performance at 1/100th the cost—but that headline hides the real risk. This analysis examines what breaks under production load, where compliance and data sovereignty failures emerge, and why the cheapest AI model often becomes the most expensive decision 12–18 months later. Written from a principal’s perspective for leaders who own P&L, regulatory exposure, and long-term infrastructure outcomes.

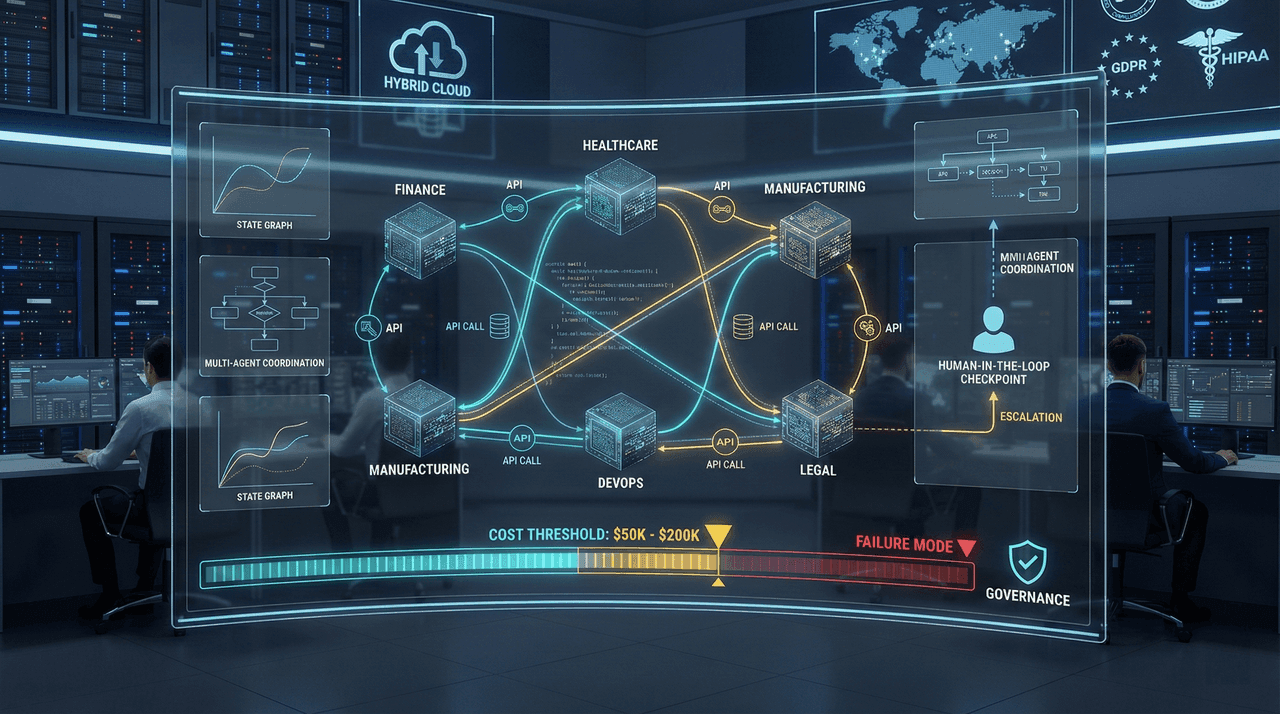

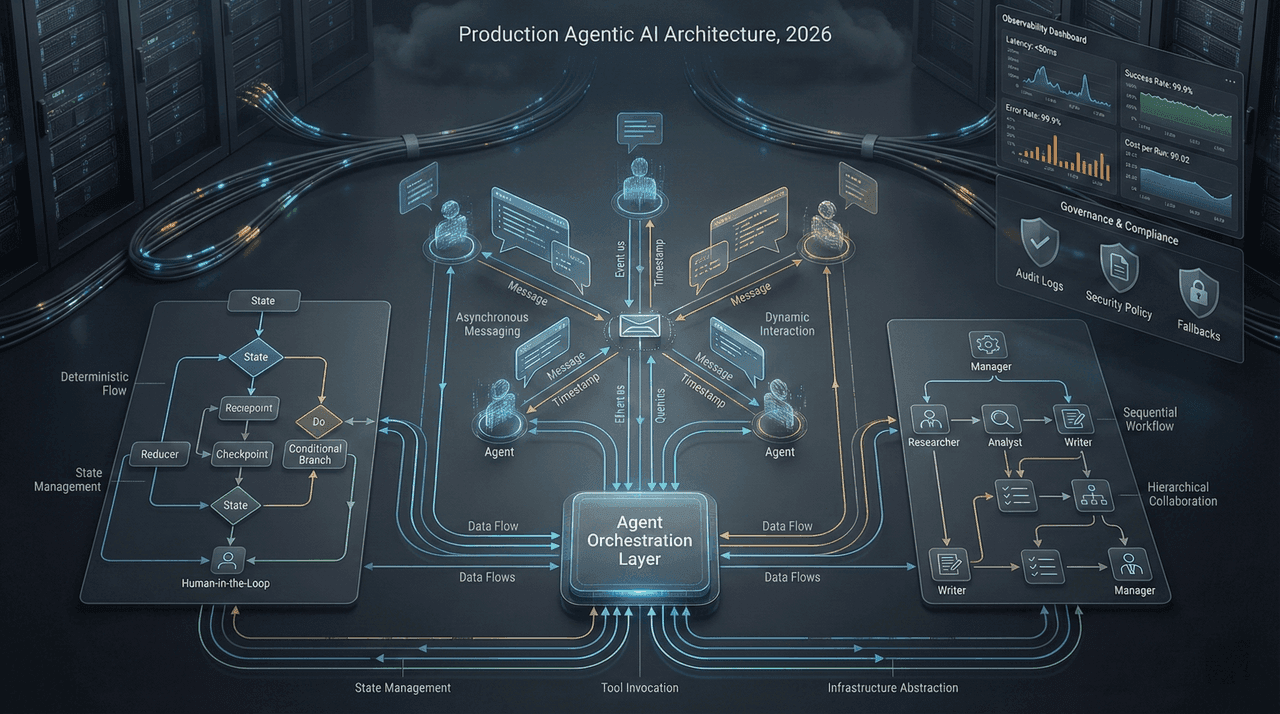

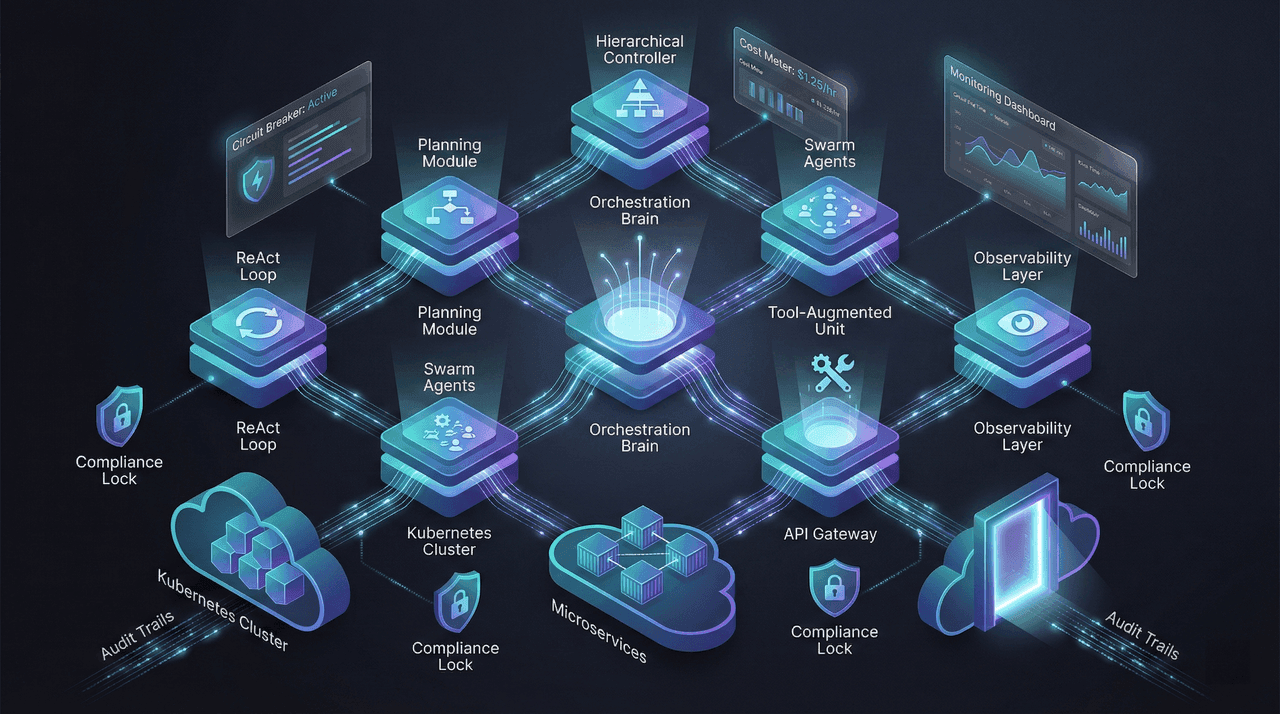

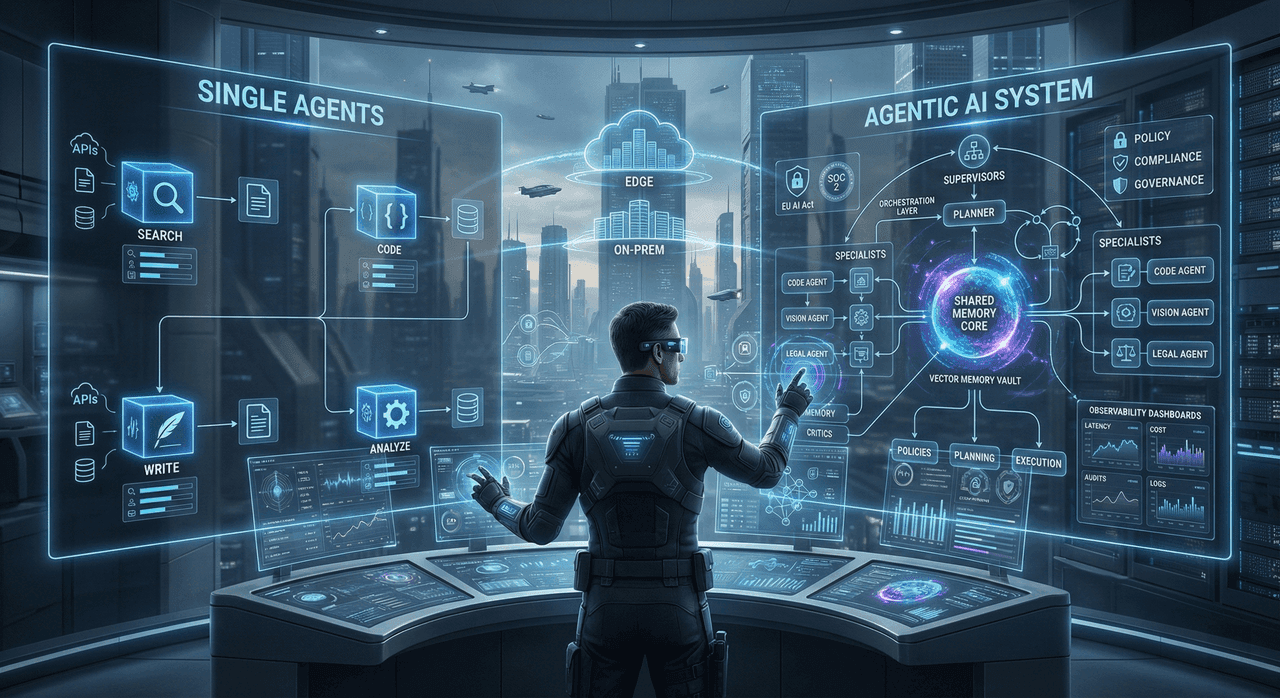

Building Production Agentic AI Systems in 2026: LangGraph vs AutoGen vs CrewAI—Complete Architecture Guide

Agentic AI is now production infrastructure, not experimentation. This guide provides a CTO-level, architecture-first comparison of LangGraph, AutoGen, and CrewAI—covering control flow models, state management, guardrails, observability, cost dynamics, and regulatory readiness. It delivers a defensible decision framework to help engineering leaders select the right agent orchestration stack, avoid common failure modes, and scale autonomous AI systems from proof-of-concept to enterprise production in 2026.

Perplexity AI vs ChatGPT vs Google: The 2026 AI Search Engine Battle (Developer's Complete Guide)

A technical, no-nonsense comparison of Perplexity AI, ChatGPT Search, and Google AI Overviews from a developer’s perspective. This guide exposes real accuracy data, citation failures, memory wipe risks, API limitations, and hidden production costs that directly impact engineering workflows and architectural decisions in 2026.

Agentic AI vs Traditional AI Agents: Complete 2026 Implementation Guide for Bangladesh Enterprises

Agentic AI is redefining how enterprises operate—moving from reactive automation to autonomous, goal-driven intelligence. This 2026 implementation guide breaks down the architectural differences between traditional AI agents and agentic AI, with practical frameworks, ROI analysis, and real-world use cases tailored for Bangladesh enterprises across banking, telecom, garments, healthcare, and government. Designed for CTOs, CIOs, and founders, this guide provides a step-by-step roadmap to deploy production-grade autonomous AI systems with security, compliance, and measurable business impact.

Small Language Models (SLMs) vs LLMs: When to Use What in 2026

A definitive 2026 enterprise guide to choosing between Small Language Models (SLMs) and Large Language Models (LLMs). This in-depth analysis covers real-world cost savings of 90%+, latency and performance tradeoffs, edge deployment strategies, hybrid architectures, and decision frameworks—helping CTOs and AI leaders select the right model for each workload, not just the most powerful one.

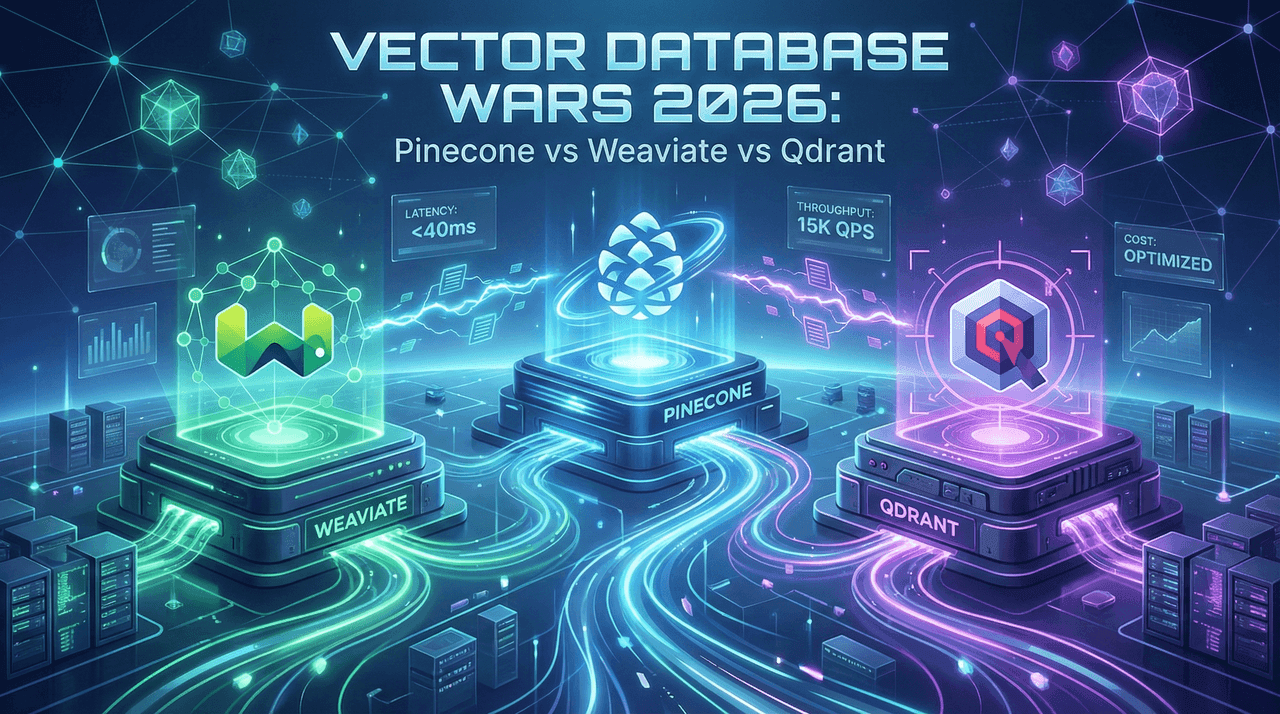

Pinecone vs Weaviate vs Qdrant: Vector Database Wars 2026

A deep, production-focused comparison of Pinecone, Weaviate, and Qdrant for enterprise AI in 2026. This guide analyzes real-world performance, latency under load, hybrid search capabilities, total cost of ownership, deployment models, security, and vendor lock-in—helping CTOs and AI architects choose the right vector database for scalable RAG, recommendation systems, and multimodal AI applications.

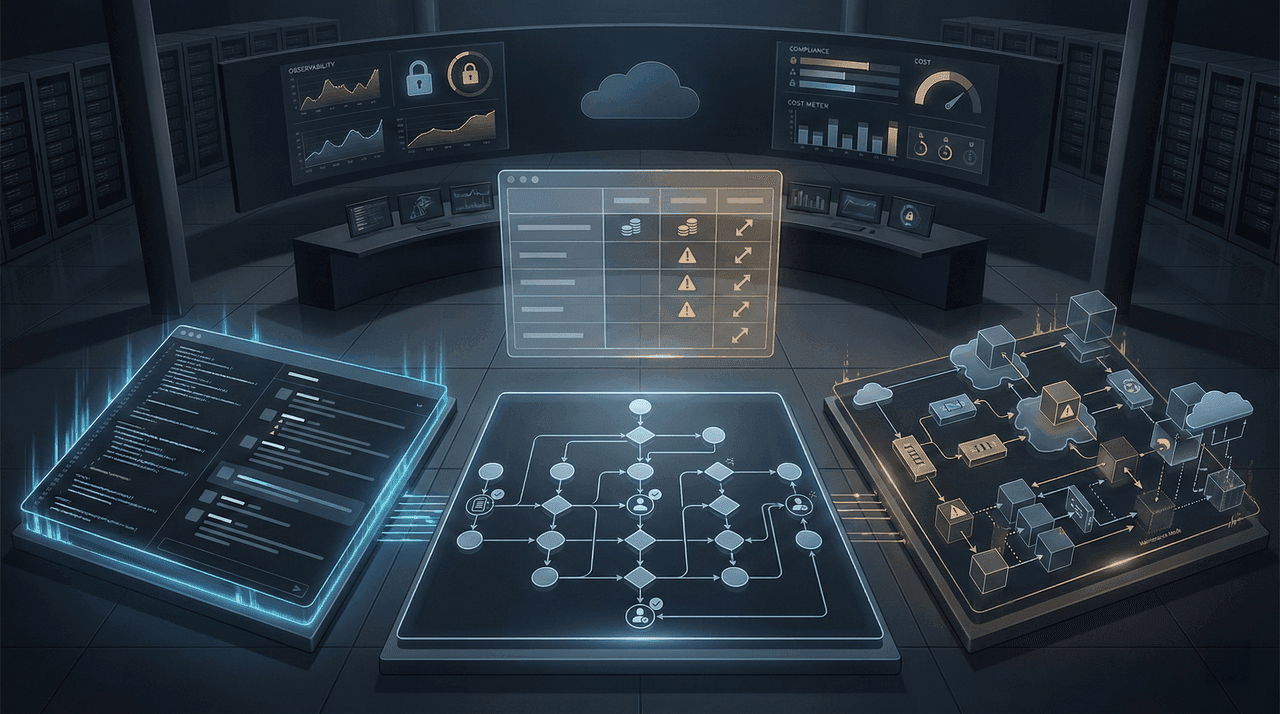

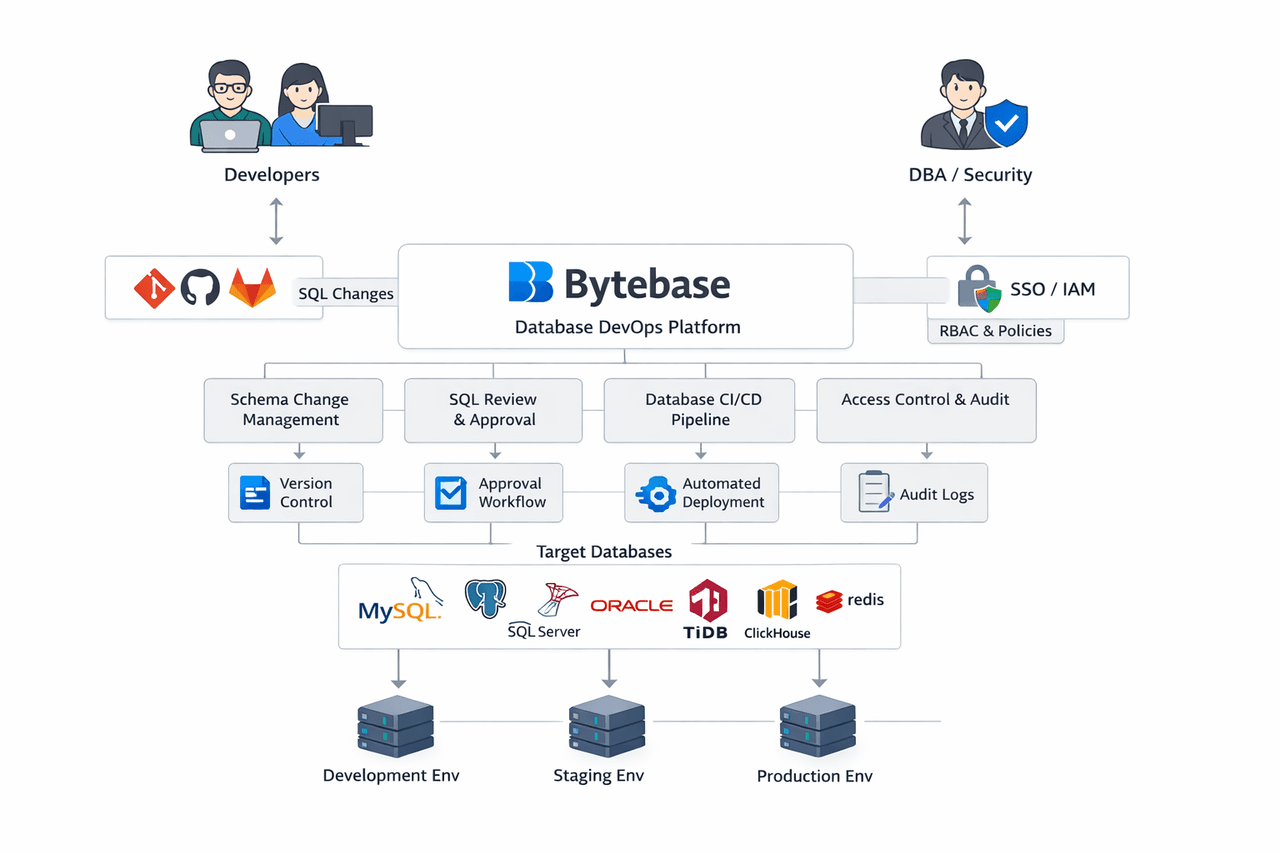

Database DevOps Transformation: Complete Guide to Bytebase for Modern Development Teams

Bytebase is a modern Database DevOps and CI/CD platform that brings GitOps, automated rollouts, schema change governance, and enterprise-grade security to database development. This guide explains Bytebase’s architecture, core features, real-world use cases, and implementation strategies to help engineering teams eliminate risky manual migrations, enforce compliance, and scale database operations safely across multi-cloud and multi-tenant environments.

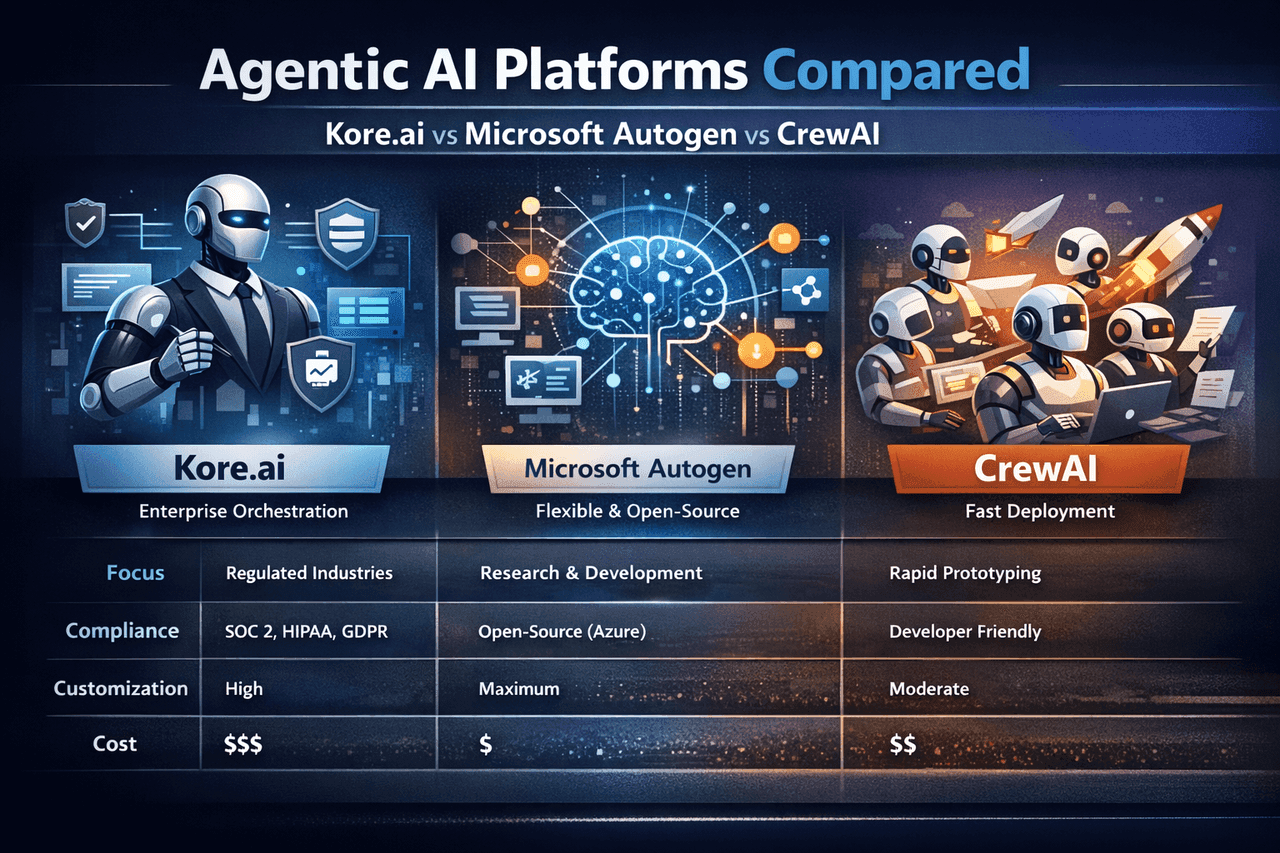

Agentic AI Platforms Compared: Kore.ai vs Microsoft AutoGen vs CrewAI

Enterprises are rapidly adopting agentic AI, but choosing the wrong orchestration platform can cost millions in wasted spend and lost time. This deep-dive compares Kore.ai, Microsoft AutoGen, and CrewAI across architecture, governance, scalability, cost, and real-world performance. Backed by market data, benchmarks, and deployment insights, this guide helps C-suite leaders, CTOs, and architects select the right agentic platform for their maturity level, regulatory constraints, and ROI goals in 2026 and beyond.

OpenCode AI: The Complete Guide to the Open-Source Terminal Coding Agent Revolutionizing Development in 2026

OpenCode is a next-generation, open-source, terminal-first AI coding agent that redefines how developers build, debug, and refactor software in 2026. This comprehensive guide explores its architecture, advanced features, privacy-first design, real-world use cases, and how it compares to tools like Cursor, GitHub Copilot, and Claude Code—helping you decide if OpenCode is the right fit for your workflow.

The 2026 Enterprise AI Implementation Playbook: From Pilot to Production in 90 Days

A production-grade, enterprise-focused playbook for turning AI pilots into scalable, compliant, and ROI-positive systems in just 90 days. This guide distills real-world failure patterns, regulatory requirements (EU AI Act, GDPR, SOC 2), cost models, and battle-tested architectural strategies—covering everything from data pipelines and RAG vs fine-tuning decisions to observability, red teaming, and SRE runbooks. Built for CTOs, CIOs, and enterprise architects who need execution discipline, not hype.

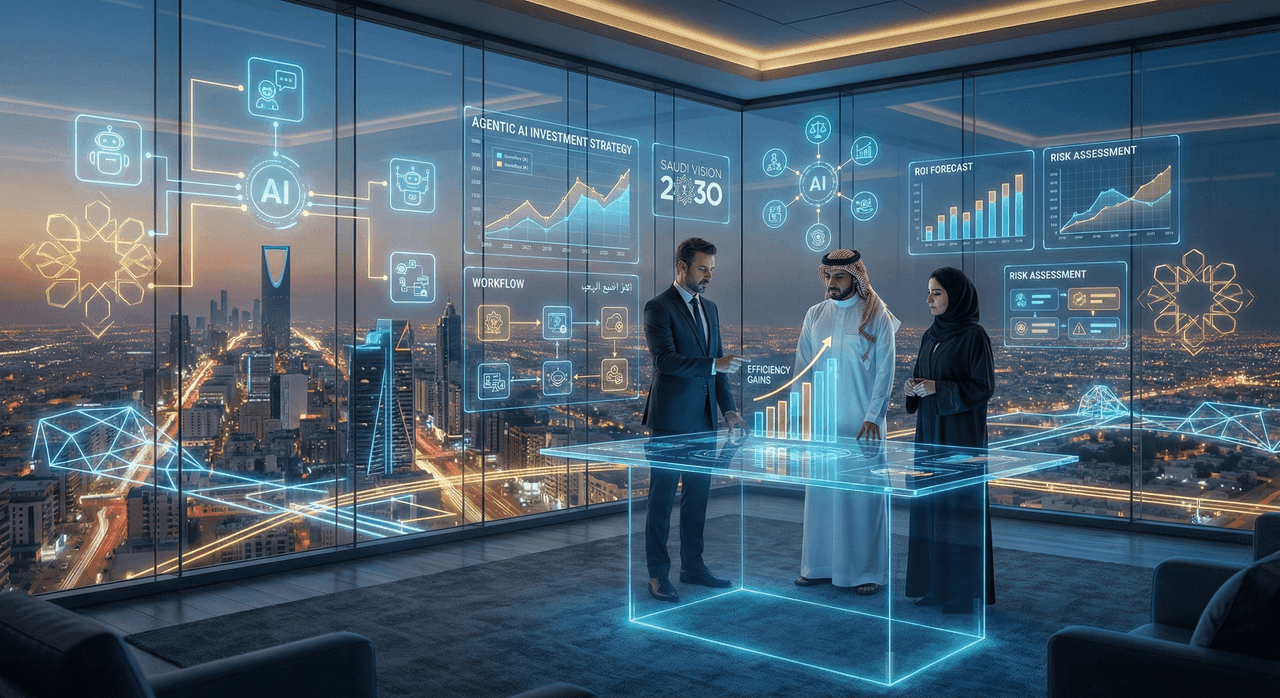

The Enterprise Agentic AI ROI Blueprint: $50M+ Budget Justification for Saudi Vision 2030

A boardroom-ready financial blueprint for deploying Agentic AI at enterprise scale under Saudi Vision 2030. This guide reveals how CFOs, CIOs, and strategy leaders can model true ROI, control hidden costs, mitigate regulatory risks, and avoid the 95% AI failure trap—using real-world pricing, Saudi-specific compliance constraints, and risk-adjusted economic frameworks.

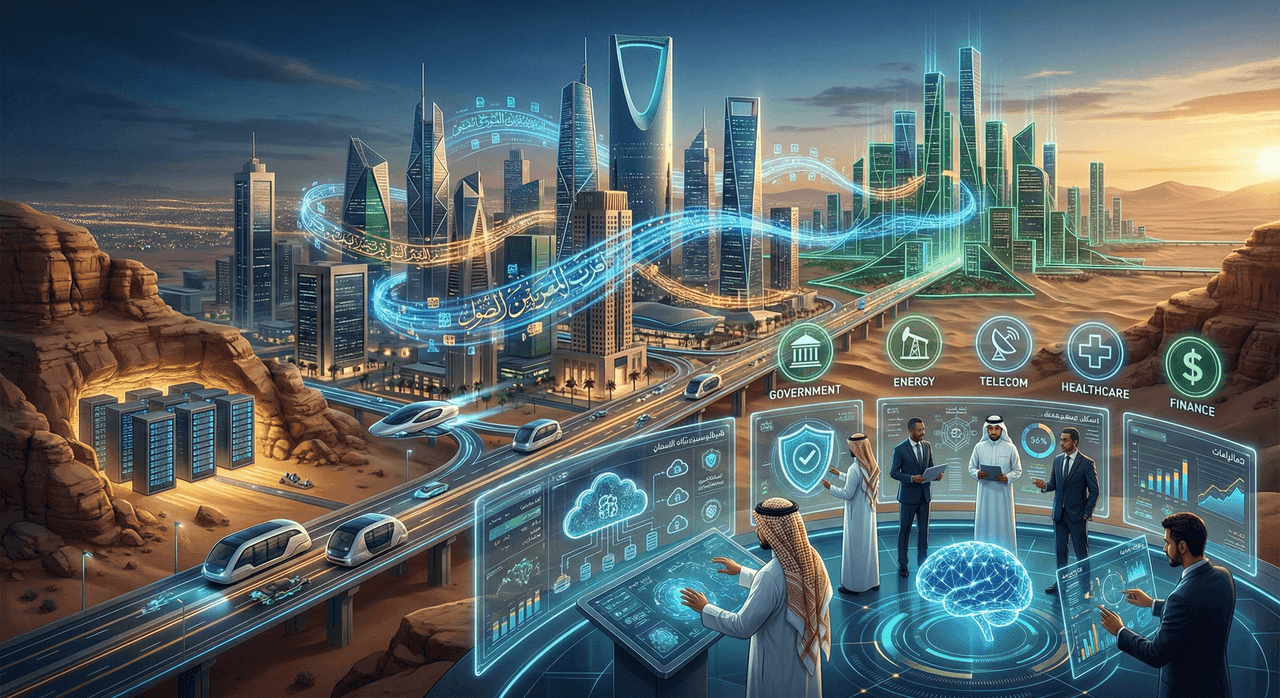

Saudi Arabia AI Adoption Report 2026: Enterprise Benchmarks & Implementation Strategies

This report delivers the first comprehensive, enterprise-grade analysis of Saudi Arabia’s AI adoption landscape in 2026. It benchmarks AI maturity across industries, decodes the Kingdom’s sovereignty-first regulatory model, and outlines implementation strategies shaped by Arabic NLP constraints, hyperscaler localization, and Vision 2030 mandates. Drawing from real-world deployments, procurement dynamics, and national-scale use cases—from Aramco to NEOM—it provides a practical playbook for enterprises navigating Saudi Arabia’s uniquely regulated, linguistically complex, and capital-intensive AI ecosystem.

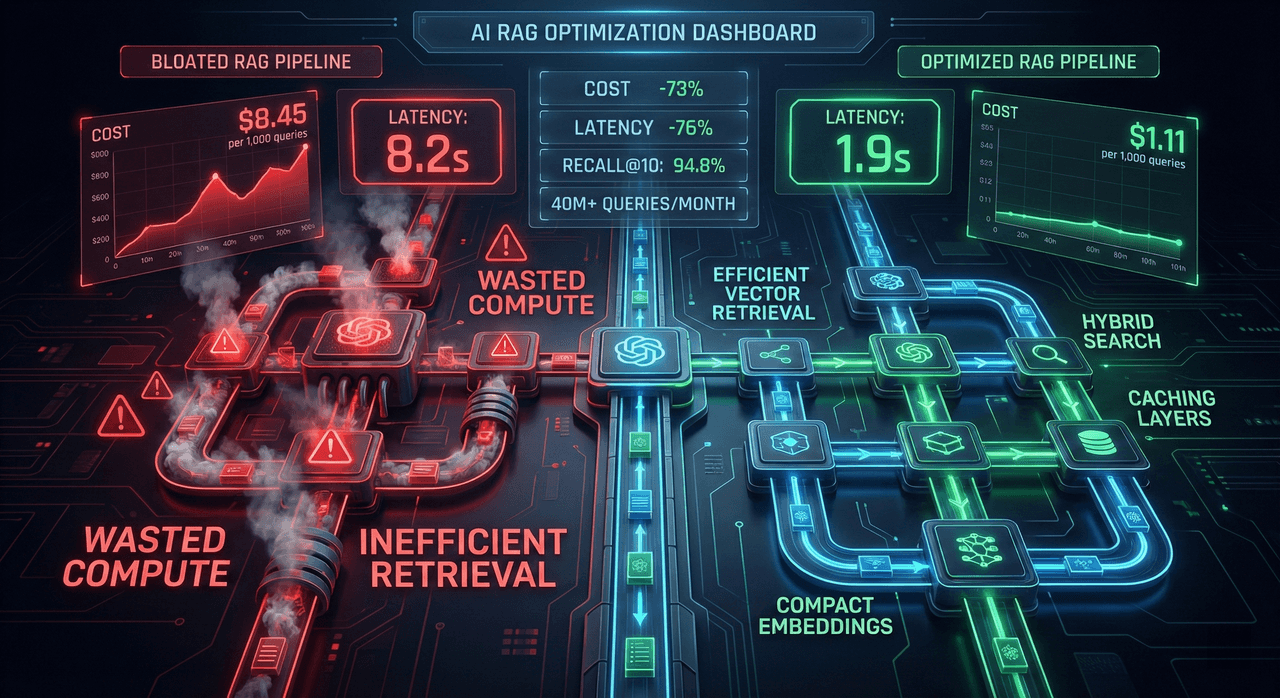

RAG Cost Optimization: Cutting $4.12 to $1.11 Per 1,000 Queries Without Sacrificing Recall

This post breaks down how we reduced real-world RAG system costs from $4.12 to $1.11 per 1,000 queries—without sacrificing recall or latency. Based on optimizations deployed across 11 enterprise pipelines handling 40M+ monthly queries, it covers the exact architectural decisions, failure modes, and cost-lever math that turn expensive prototypes into efficient production systems. No theory—only measurable, battle-tested techniques.

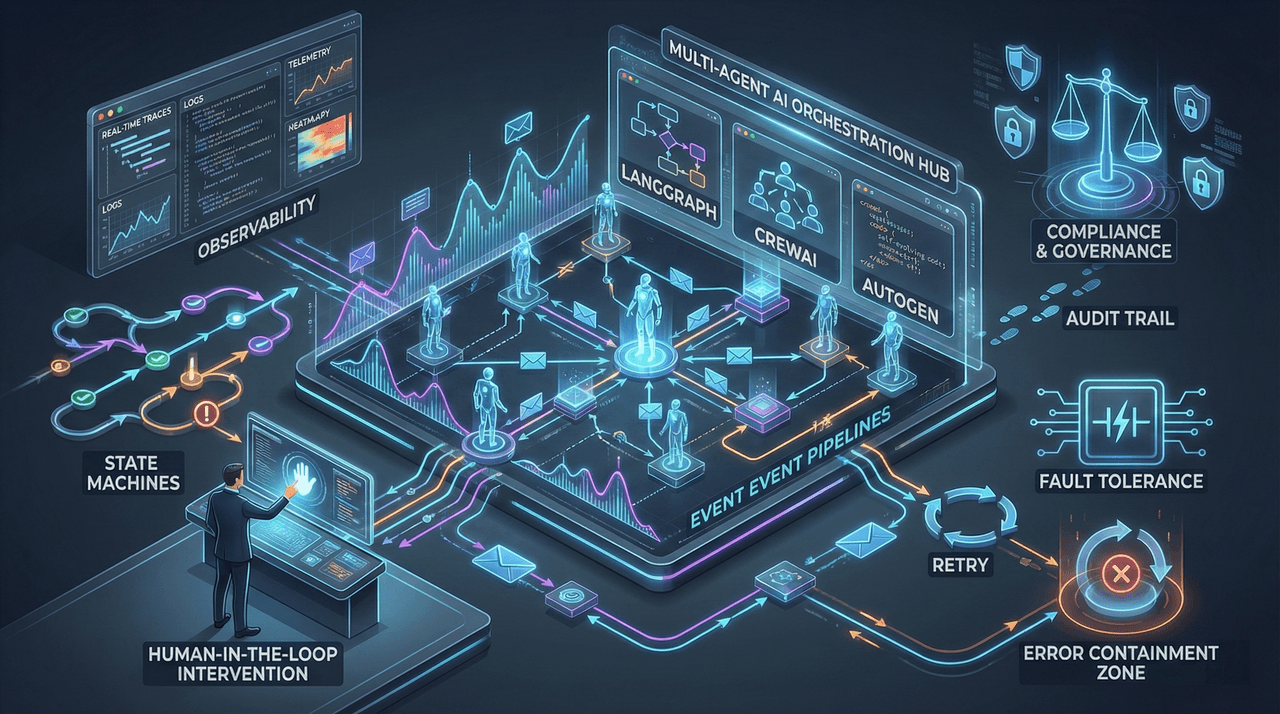

Multi-Agent Orchestration: LangGraph vs. CrewAI vs. AutoGen for Enterprise Workflows

An enterprise-grade, deeply technical comparison of LangGraph, CrewAI, and AutoGen through the lens of real-world production failures, compliance requirements, fault tolerance, and governance. This guide dissects how multi-agent orchestration frameworks behave under scale, regulatory pressure, and non-deterministic AI behavior—revealing why naive agent systems fail expensively and how to architect resilient, auditable, and human-in-the-loop workflows for 2026 and beyond.

Building Production AI Agents: 7 Architecture Patterns That Scale

Most AI agents fail in real-world deployments—not because of weak models, but because of flawed system design. This guide breaks down 7 battle-tested architecture patterns used by companies like Stripe, Klarna, and Shopify to build scalable, cost-controlled, and compliant AI agents that survive production traffic.

GPU Economics 2026: When to Use H100 vs. A100 vs. Cloud Inference

A financially rigorous breakdown of GPU selection in 2026—comparing H100, A100, and cloud inference through real-world cost-per-token, latency, utilization, and sovereignty constraints. This guide replaces benchmark theater with performance-normalized economics, helping CTOs and CFOs make defensible infrastructure decisions across training, inference, and regional deployments, including GCC-specific considerations.

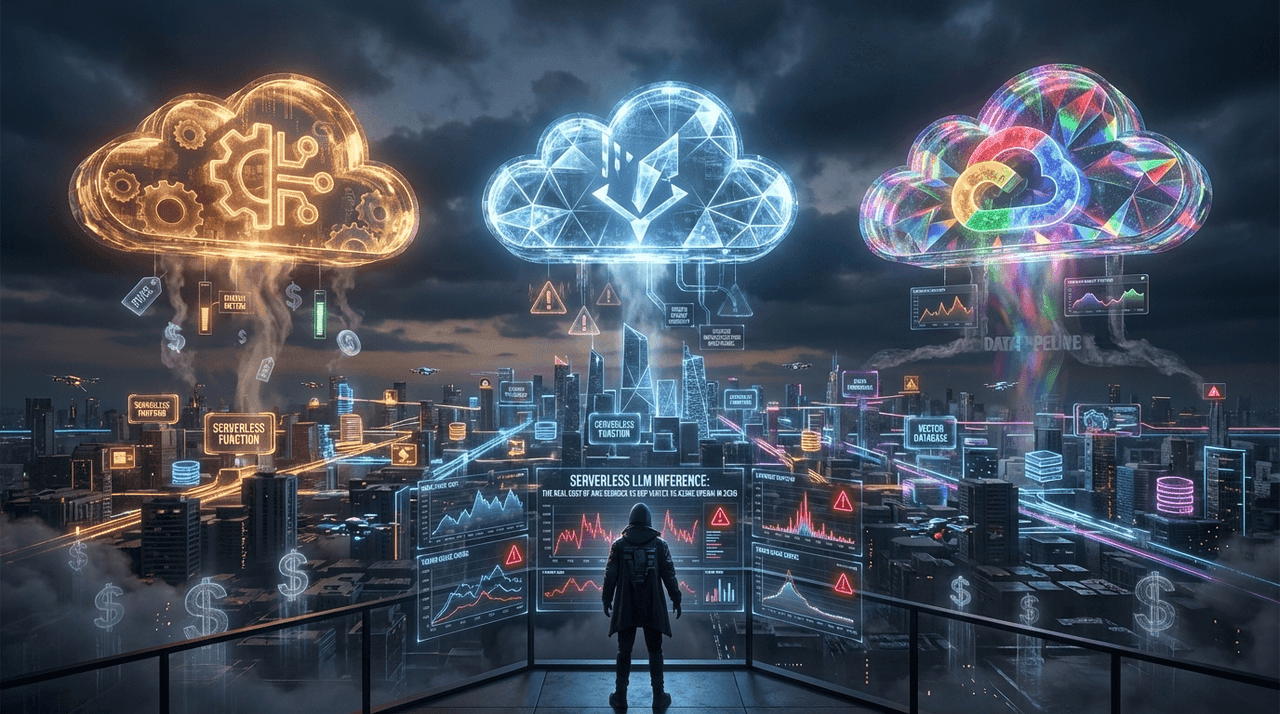

Serverless LLM Inference: The Real Cost of AWS Bedrock vs. GCP Vertex vs. Azure OpenAI in 2026

Most enterprises overspend 32–57% on LLM inference due to invisible serverless costs—cold starts, throttling, orchestration overhead, observability, and vector infrastructure that never appear on pricing pages. This deep-dive exposes the real economics of AWS Bedrock, GCP Vertex AI, and Azure OpenAI in 2026, using production-scale data, compliance constraints, and architectural tradeoffs. If you’re a CTO, cloud architect, or FinOps lead, this guide shows where budgets actually leak—and how to design inference systems that scale without financial surprises.

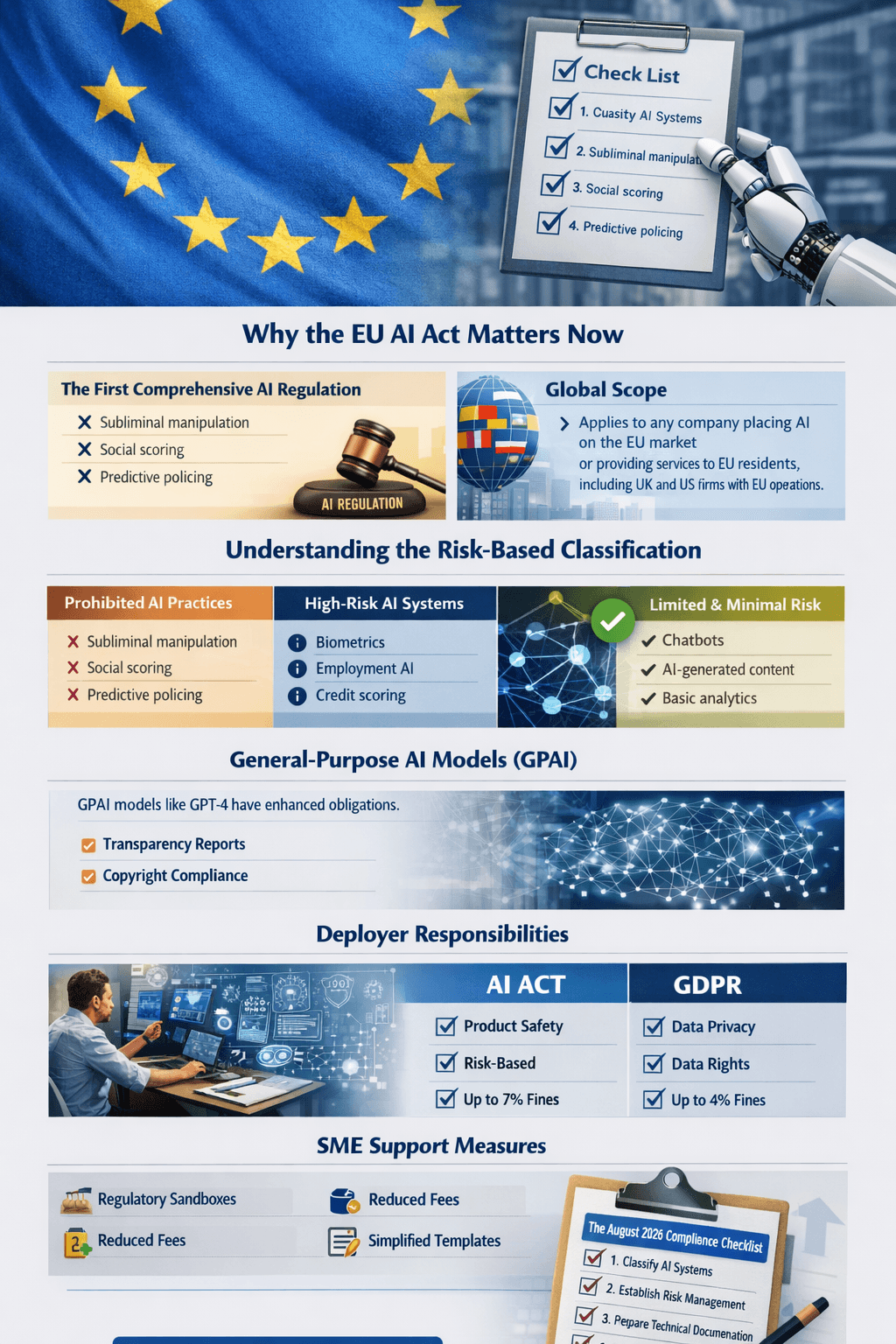

EU AI Act Compliance Checklist: Are You Ready for August 2026?

A definitive, action-oriented compliance guide for the EU AI Act ahead of the August 2026 enforcement deadline. This in-depth analysis covers risk classification, high-risk obligations, GPAI rules, conformity assessments, penalties up to €35M or 7% of global turnover, and a 10-step enterprise readiness checklist tailored for organizations operating in the EU (including Germany) and the UK.

Agentic AI vs. AI Agents: Business Strategy, Technical Architecture & Complete Implementation Guide

A definitive, architecture-first guide to understanding the real difference between AI agents and agentic AI. This article breaks down business strategy, system design, governance, security, and implementation patterns for building production-grade agentic systems—helping enterprises avoid hype-driven waste and move from isolated chatbots to autonomous, goal-driven digital workflows.

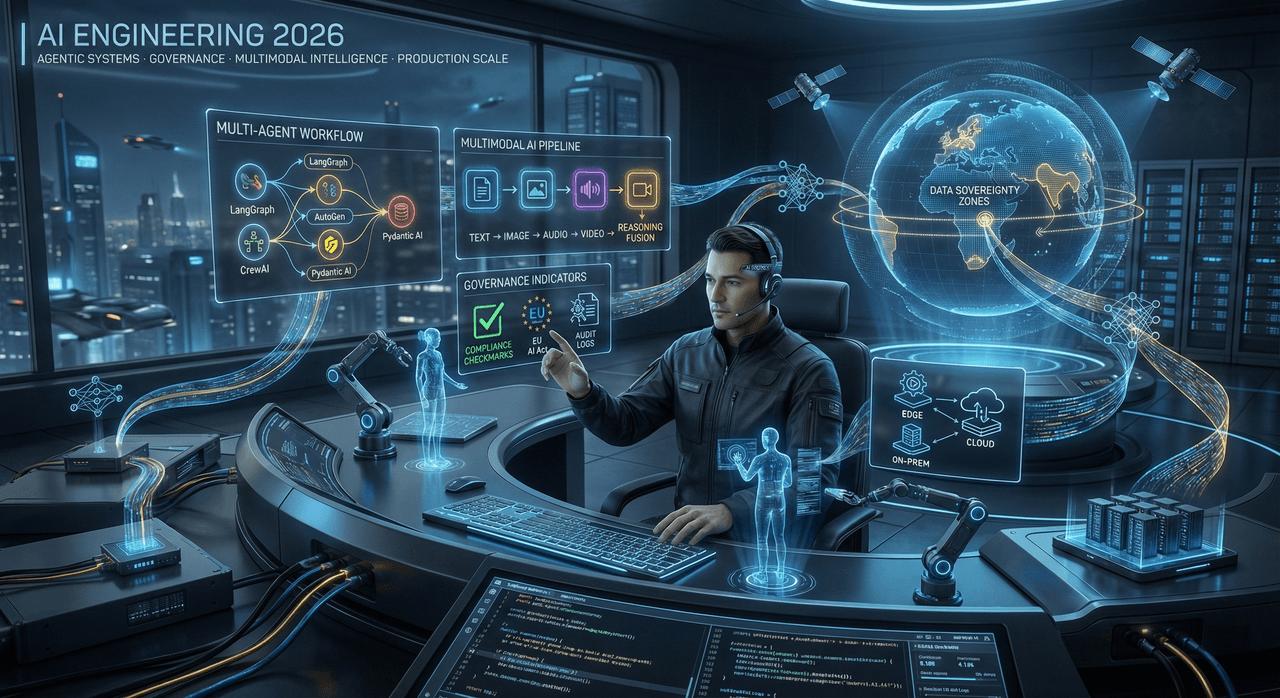

AI Engineering in 2026: The Skills, Tools, and Trends Shaping the Next Generation of ML Systems

A comprehensive, production-focused analysis of AI engineering in 2026—covering agentic systems, multimodal intelligence, domain-specific models, edge and sovereign AI, governance frameworks, and the evolving role of the AI engineer. This article explores how modern ML systems are built, secured, scaled, and regulated, offering a practical roadmap for individuals, teams, and enterprises navigating the next generation of AI infrastructure.

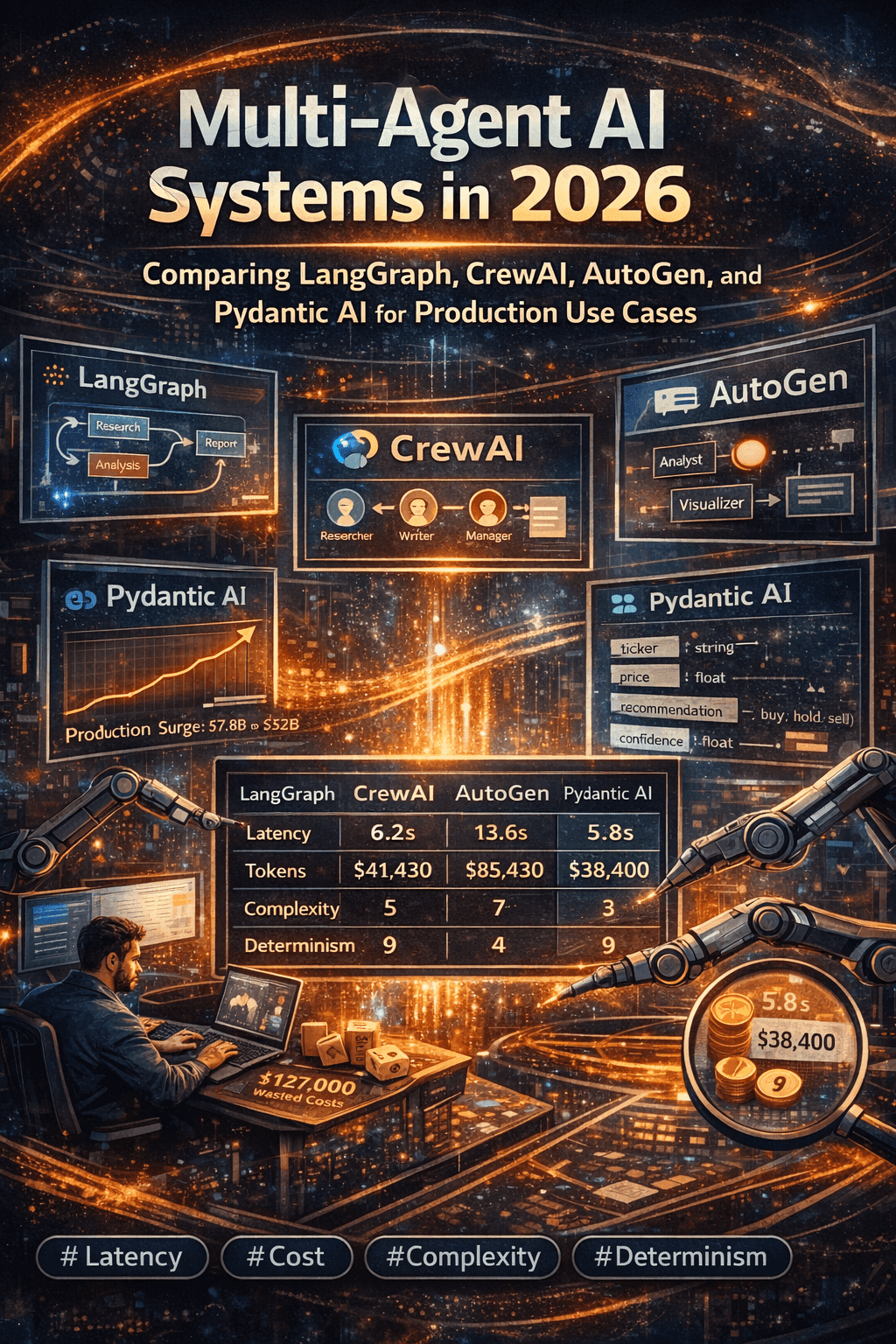

Multi-Agent AI Systems in 2026: Comparing LangGraph, CrewAI, AutoGen, and Pydantic AI for Production Use Cases

A deep-dive into production-grade multi-agent AI systems in 2026—comparing LangGraph, CrewAI, AutoGen, and Pydantic AI across architecture, cost, latency, determinism, and real-world failure modes. Learn how enterprises design resilient agent orchestration layers, avoid single-agent collapse, and choose the right framework based on scale, compliance, and operational constraints.

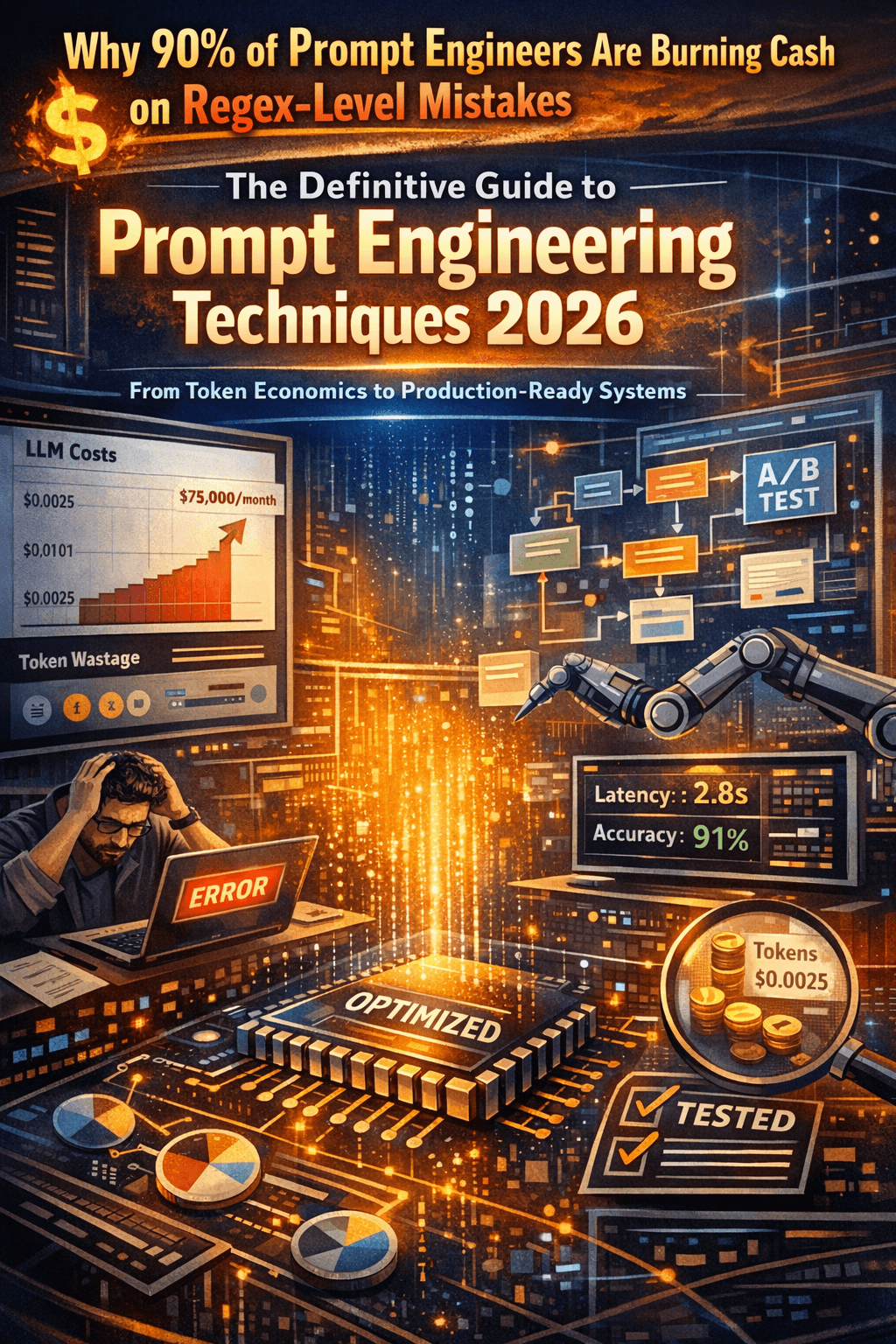

The Definitive Guide to Prompt Engineering Techniques 2026: From Token Economics to Production-Ready Systems

A production-grade guide to prompt engineering in 2026—covering token economics, latency optimization, Chain-of-Draft, self-consistency, multimodal prompting, and real-time prompt optimization. Learn how elite AI teams design prompts like software interfaces to reduce cost, improve accuracy, and deploy hallucination-resistant LLM systems at scale.

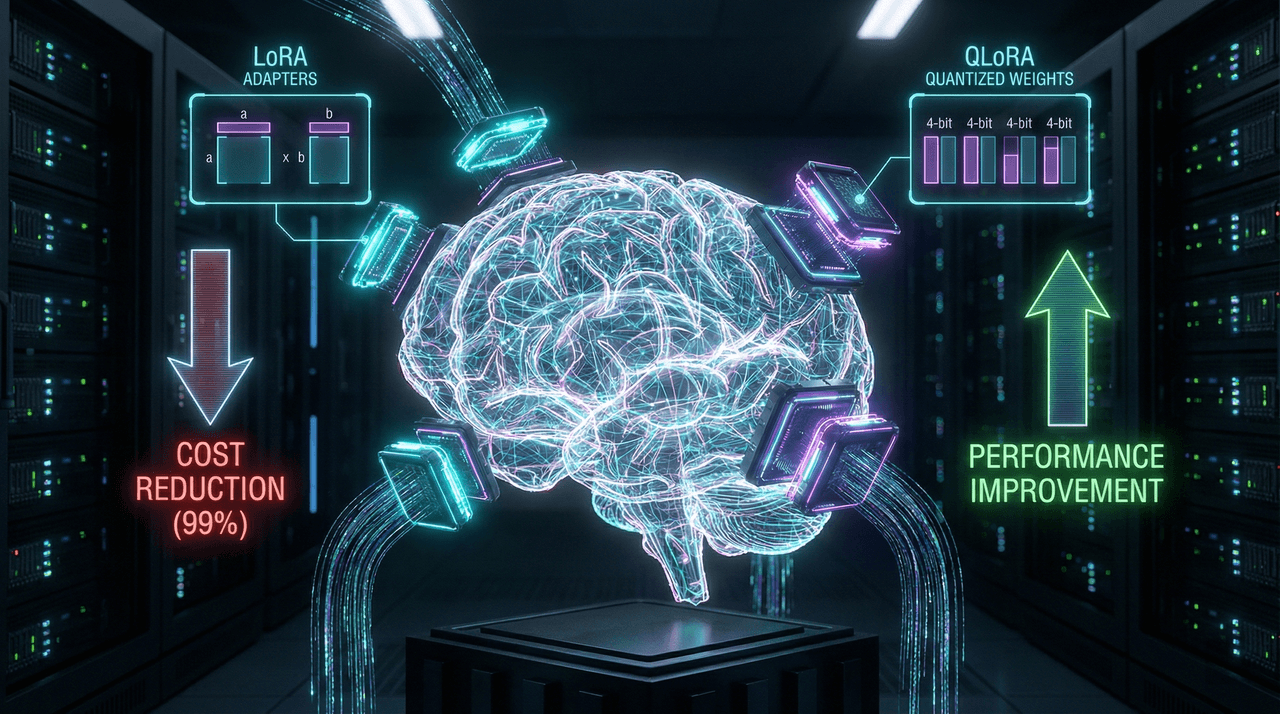

LLM Fine-Tuning on a Budget: LoRA, QLoRA, and PEFT Techniques for Resource-Constrained Teams

This in-depth guide explains how to fine-tune large language models on a tight budget using Parameter-Efficient Fine-Tuning (PEFT) techniques like LoRA and QLoRA. Learn when fine-tuning is better than RAG or prompt engineering, how to implement LoRA and QLoRA step by step, and how to cut GPU memory usage by 80–95% without sacrificing model performance. Perfect for startups, indie developers, and resource-constrained teams building production-grade AI.

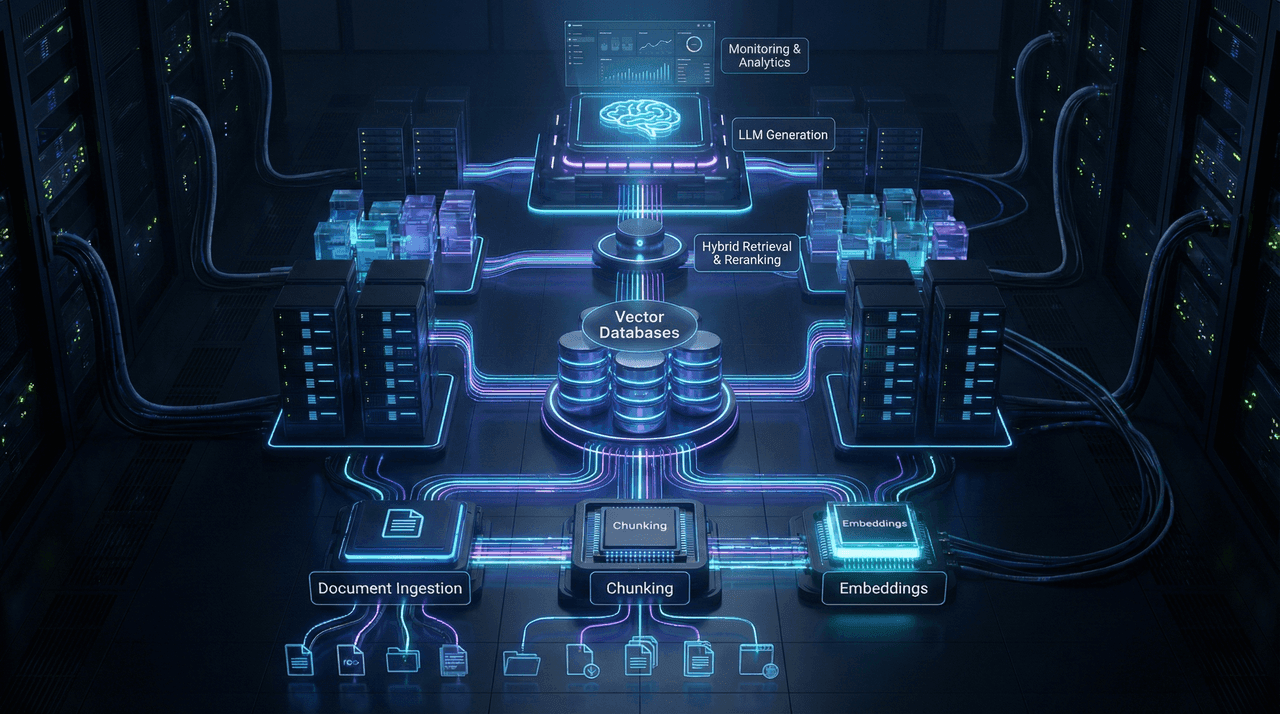

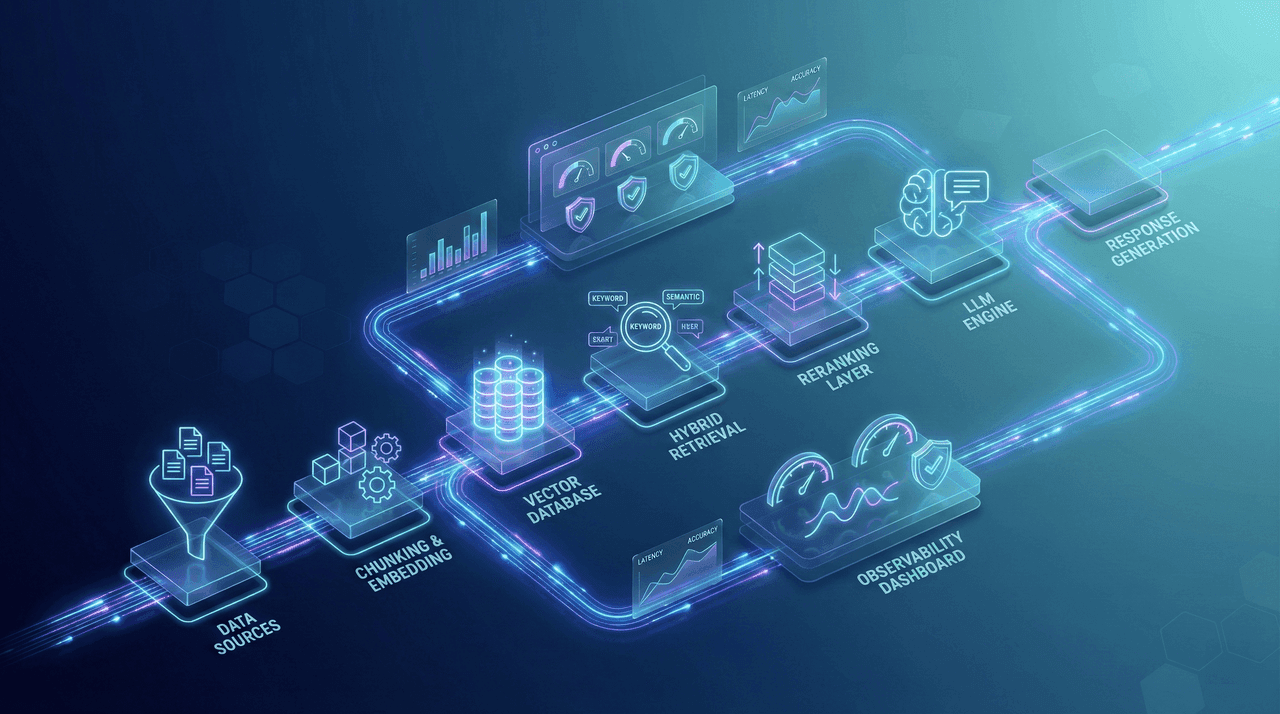

Production RAG Architecture That Scales: Vector Databases, Chunking Strategies, and Cost Optimization for 2025

This deep-dive explores how to design production-grade Retrieval-Augmented Generation (RAG) systems that scale reliably from thousands to millions of documents. Learn how to choose the right vector databases, implement advanced chunking strategies, optimize retrieval pipelines, and reduce operational costs by up to 80%—all while maintaining accuracy, latency SLAs, and enterprise-grade security.

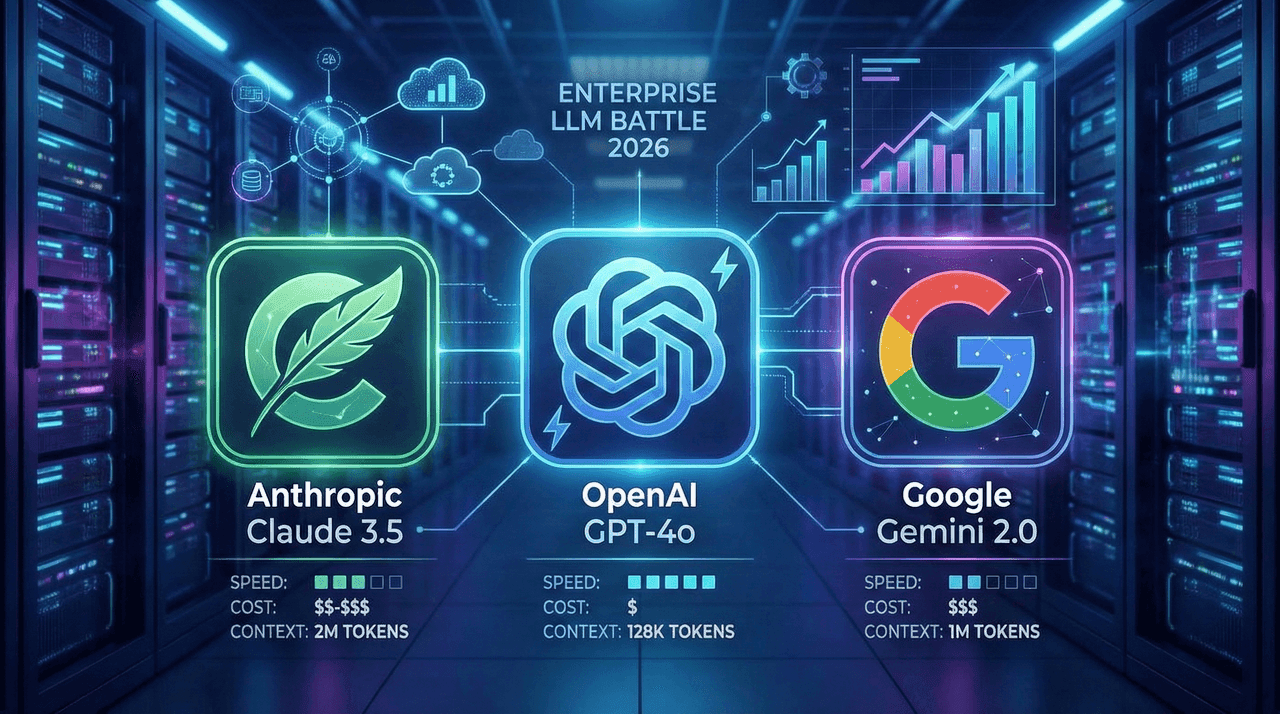

GPT-4o vs Claude 3.5 vs Gemini 2.0: The Definitive Enterprise LLM Battle for 2026

A deep, vendor-neutral breakdown of GPT-4o, Claude 3.5/4.5, and Gemini 2.0 for enterprise deployments in 2026. This guide analyzes real-world performance, total cost of ownership, compliance implications, latency, hallucination rates, multimodal capabilities, and vendor lock-in risks—so CTOs can choose the right model for their actual production needs, not marketing benchmarks.

Building Production RAG Systems in 2026: Complete Architecture Guide

A complete, production-focused blueprint for building enterprise-grade RAG systems in 2026. This guide covers end-to-end architecture, chunking strategies, hybrid retrieval, reranking, GraphRAG, security, compliance, monitoring, performance optimization, multilingual support, and cost control—based on real-world deployments and proven patterns that scale reliably.

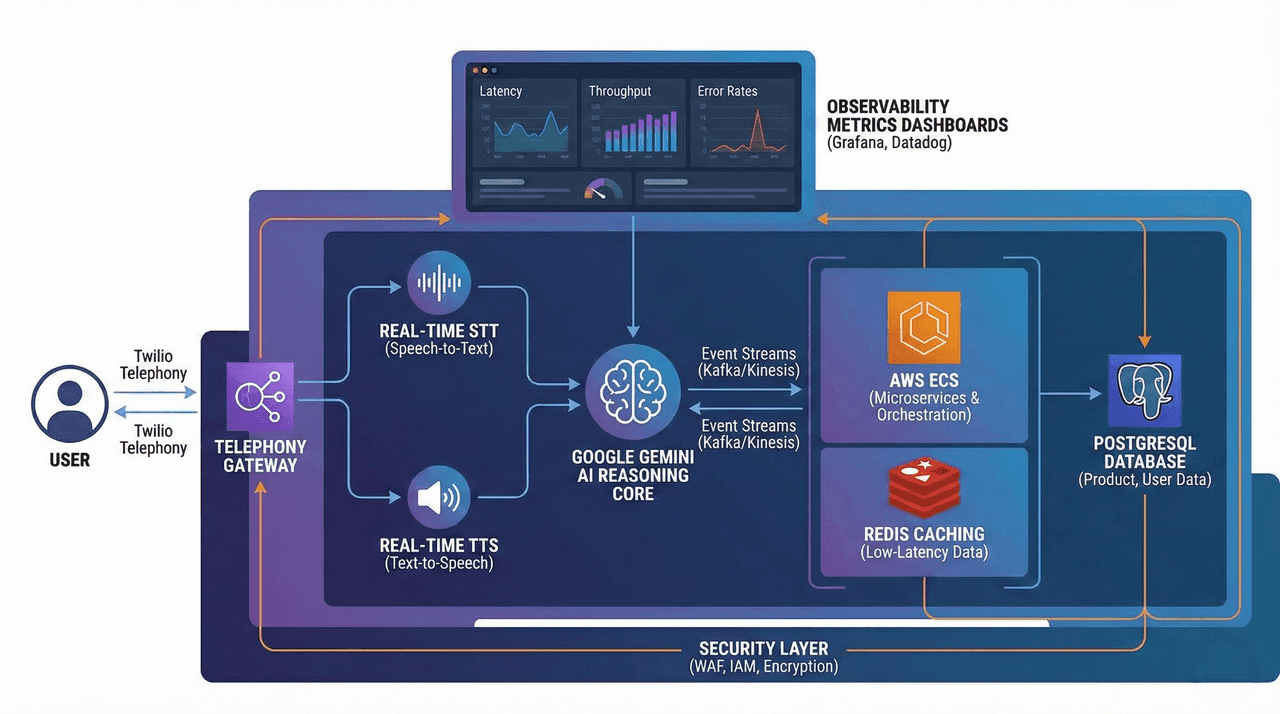

How I Built an AI Voice Commerce System with Twilio & Gemini

This article documents the end-to-end design and production deployment of a real-time AI Voice Commerce system built using Twilio, Google Gemini, and AWS. It covers low-latency streaming architectures, intent reasoning, semantic product search, secure tool orchestration, fraud detection, and cloud-native scalability—achieving sub-200ms conversational response times. A deep technical case study for engineers building next-generation voice-first transactional platforms.

AWS vs GCP vs Azure: Which Cloud is Best for AI/ML Workloads in 2026?

A deep, data-driven comparison of AWS, Google Cloud, and Azure for AI/ML workloads in 2026. This guide analyzes real-world performance, Blackwell vs TPU v7 hardware, cost structures, sustainability metrics, EU AI Act compliance, and enterprise MLOps maturity—so you can make the right strategic cloud decision for training, inference, and long-term scalability.

Best Generative AI Specialist in Bangladesh for Remote US, UK, AU & EU Clients

Global enterprises need more than Generative AI experiments—they need production-grade systems that scale, remain secure, and deliver measurable business impact. MD Bazlur Rahman Likhon is a trusted Generative AI Specialist from Bangladesh, delivering enterprise-ready LLM, RAG, agentic, and voice AI platforms for remote clients across the USA, UK, Australia, and the EU.

Unlock 3 Months of Free DataCamp Access with GitHub Student Benefits: Plus, Free Data Engineering & BI Courses This Week!

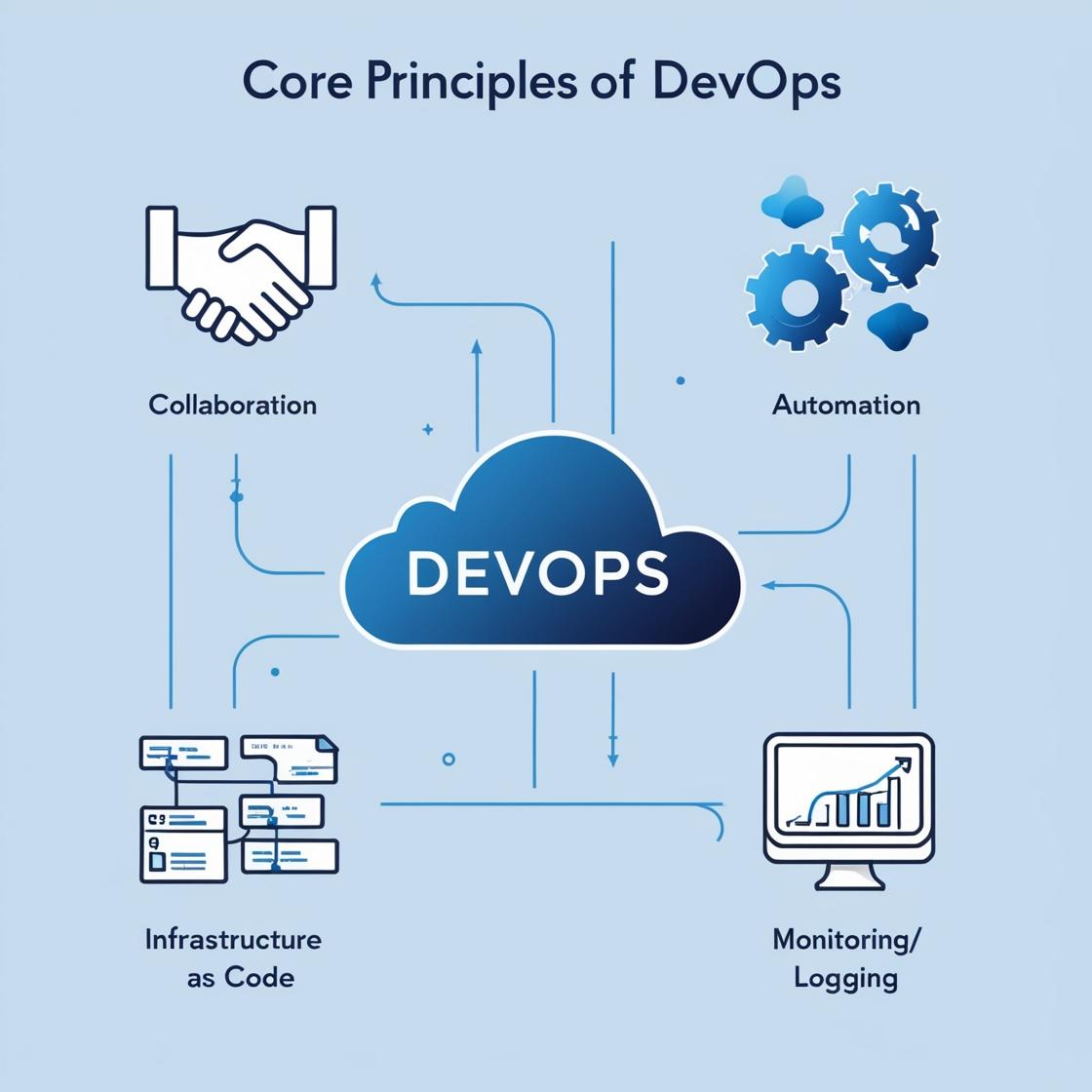

Understanding DevOps: A Comprehensive Learning Guide

DevOps is a transformative approach that bridges the gap between development and operations teams, fostering collaboration, automation, and continuous delivery. By integrating practices such as CI/CD, Infrastructure as Code, and automated monitoring, DevOps helps organizations deliver high-quality software quickly and efficiently. This post explores the core principles, tools, lifecycle, and learning roadmap for DevOps, as well as the benefits and challenges organizations may face during adoption. Embracing DevOps can lead to faster time-to-market, improved software quality, and enhanced collaboration across teams.

Cursor AI Free for Students: Unlock Pro Coding Power for a Year!

Unlock your coding potential! Students can now get a FREE year of Cursor Pro, the AI-powered code editor that helps you write code faster, debug efficiently, and understand complex projects. Supercharge your studies and gain hands-on experience with a cutting-edge AI coding assistant. Learn how to claim your free access and elevate your coding journey today!