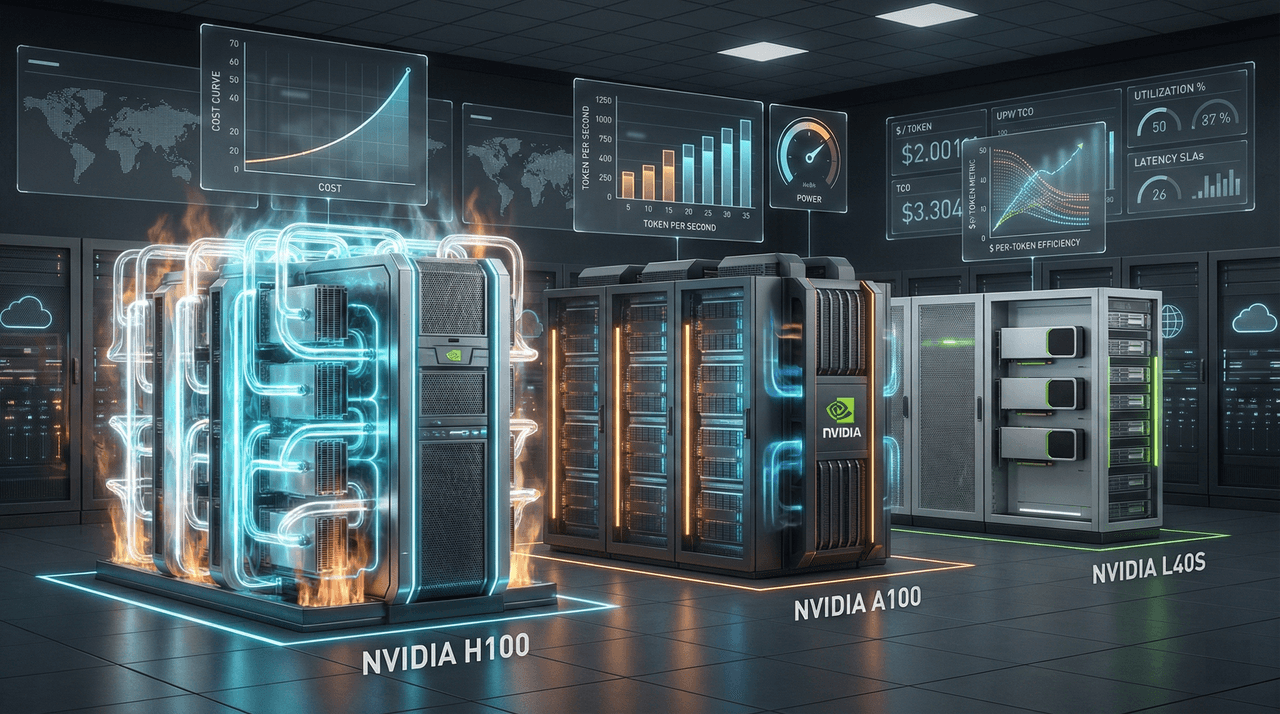

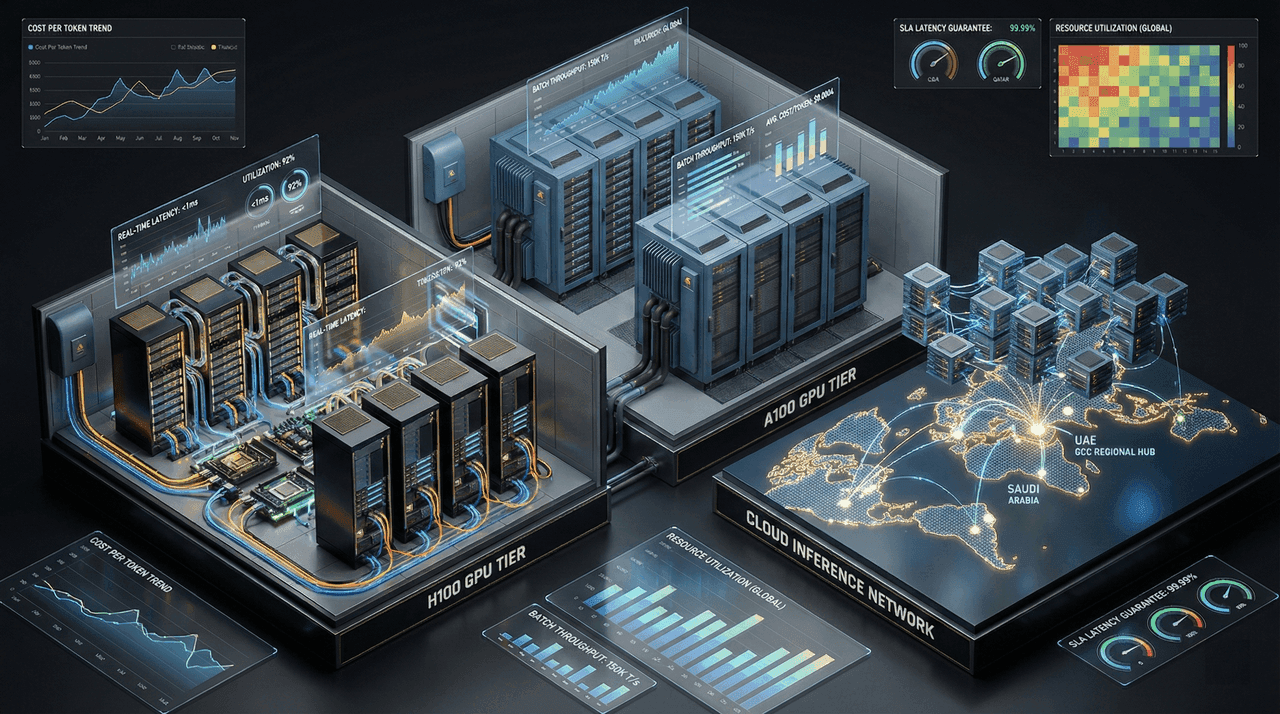

GPU Economics 2026: When to Use H100 vs. A100 vs. Cloud Inference

A financially rigorous breakdown of GPU selection in 2026, comparing H100, A100, and cloud inference on real-world cost per token, latency, utilization, and sovereignty constraints. This guide replaces benchmark theater with performance-normalized economics, helping CTOs and CFOs make defensible infrastructure decisions across training, inference, and regional deployments, including GCC-specific considerations.
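As a rough illustration of what "performance-normalized" cost per token can mean, the sketch below divides an hourly GPU price by the tokens actually served in that hour, discounted by utilization. The function name, its parameters, and every number in the usage lines are hypothetical placeholders for illustration only. They are not measured prices or throughputs for the H100, the A100, or any cloud service.

```python
def cost_per_million_tokens(hourly_cost_usd: float,
                            tokens_per_second: float,
                            utilization: float) -> float:
    """Return the USD cost of serving one million tokens.

    hourly_cost_usd   -- all-in hourly cost of the GPU or instance (assumed input)
    tokens_per_second -- sustained throughput at full load (assumed input)
    utilization       -- fraction of each hour spent serving traffic, in (0, 1]
    """
    if tokens_per_second <= 0:
        raise ValueError("tokens_per_second must be positive")
    if not 0 < utilization <= 1:
        raise ValueError("utilization must be in (0, 1]")

    # Tokens actually produced in one hour, after idle time is accounted for.
    effective_tokens_per_hour = tokens_per_second * 3600 * utilization
    return hourly_cost_usd / effective_tokens_per_hour * 1_000_000


if __name__ == "__main__":
    # Placeholder inputs only: substitute your own quotes and benchmarks.
    option_a = cost_per_million_tokens(hourly_cost_usd=4.0,
                                       tokens_per_second=2000.0,
                                       utilization=0.4)
    option_b = cost_per_million_tokens(hourly_cost_usd=2.0,
                                       tokens_per_second=800.0,
                                       utilization=0.4)
    print(f"option A: ${option_a:.2f} per 1M tokens")
    print(f"option B: ${option_b:.2f} per 1M tokens")
```

Under this simple model, halving utilization doubles the cost per token regardless of hardware, which is why the hourly price alone is a poor basis for comparison.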

Team Note

The full technical details for this topic are available upon request for enterprise clients. We frequently update these entries as patterns evolve in the AI ecosystem.