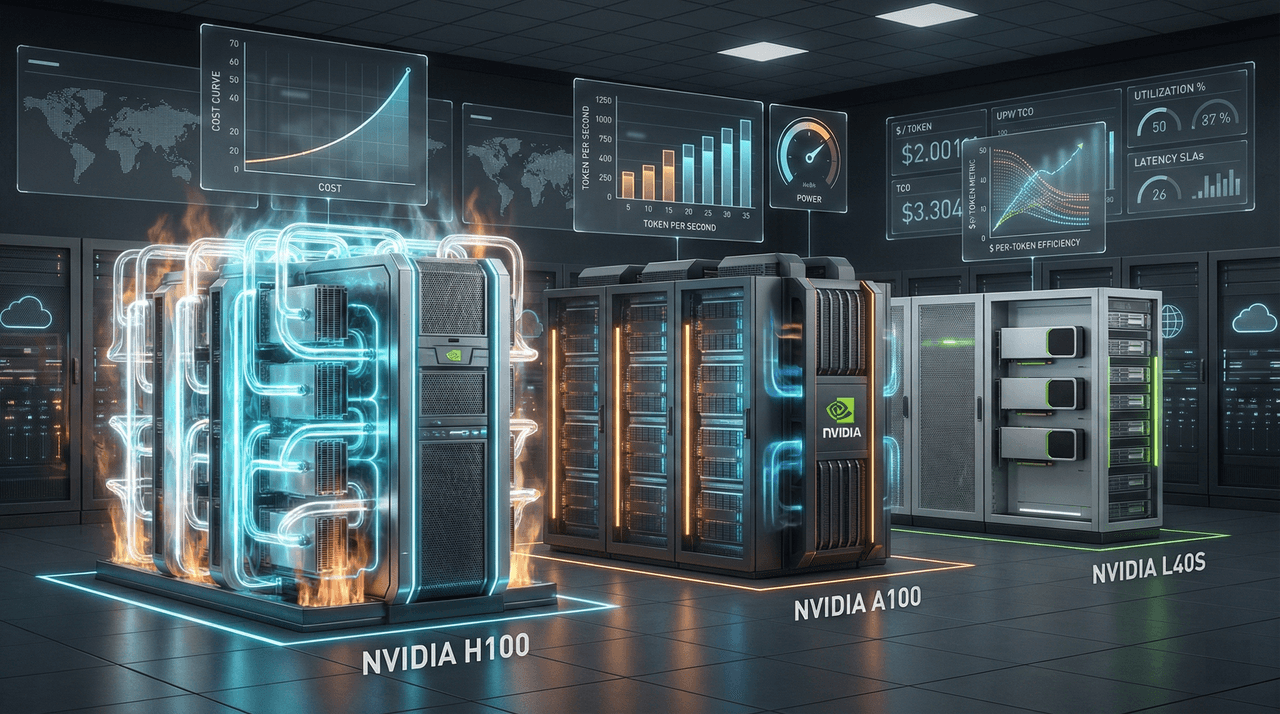

GPU Economics 2026: H100 vs A100 vs L40S – Complete Cost‑Performance Analysis for AI Workloads

Most AI teams overspend 30–50% on GPU compute by choosing the wrong hardware for the wrong workloads. This guide breaks down the real 2026 economics of NVIDIA H100, A100, and L40S—covering training, fine-tuning, and inference—to help engineering leaders optimize cost-per-token, total cost of ownership, and deployment strategy instead of chasing raw specs.
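To make "cost-per-token" concrete, here is a minimal sketch of the arithmetic, assuming a simple model in which cost is the hourly GPU price divided by the tokens actually produced in that hour. The function name, the `utilization` parameter, and every number in the example are hypothetical placeholders for illustration. They are not measured prices or throughputs for the H100, A100, or L40S.

```python
def cost_per_million_tokens(hourly_usd: float,
                            tokens_per_second: float,
                            utilization: float = 1.0) -> float:
    """Return the cost in USD of generating one million tokens.

    hourly_usd:        price paid per GPU-hour (hypothetical input)
    tokens_per_second: sustained throughput while the GPU is busy
    utilization:       fraction of each paid hour spent doing useful work (0-1]
    """
    if hourly_usd < 0:
        raise ValueError("hourly_usd must be non-negative")
    if tokens_per_second <= 0:
        raise ValueError("tokens_per_second must be positive")
    if not 0 < utilization <= 1:
        raise ValueError("utilization must be in (0, 1]")

    # Tokens produced per paid hour, discounted by idle time.
    tokens_per_hour = tokens_per_second * 3600 * utilization
    return hourly_usd / tokens_per_hour * 1_000_000


# Placeholder figures only: a pricier GPU with higher throughput
# versus a cheaper GPU with lower throughput.
gpu_a = cost_per_million_tokens(hourly_usd=4.0, tokens_per_second=3000)
gpu_b = cost_per_million_tokens(hourly_usd=1.0, tokens_per_second=500)

print(f"GPU A: ${gpu_a:.3f} per million tokens")
print(f"GPU B: ${gpu_b:.3f} per million tokens")
```

With these placeholder inputs, the GPU with the higher hourly price comes out cheaper per token, which is the kind of comparison that raw specs or hourly rates alone do not reveal. Real comparisons would substitute measured throughput for the specific model and batch size, plus the rates actually paid.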
