Transfer Learning and Model Distillation: Training Cost-Effective AI Models in 2026

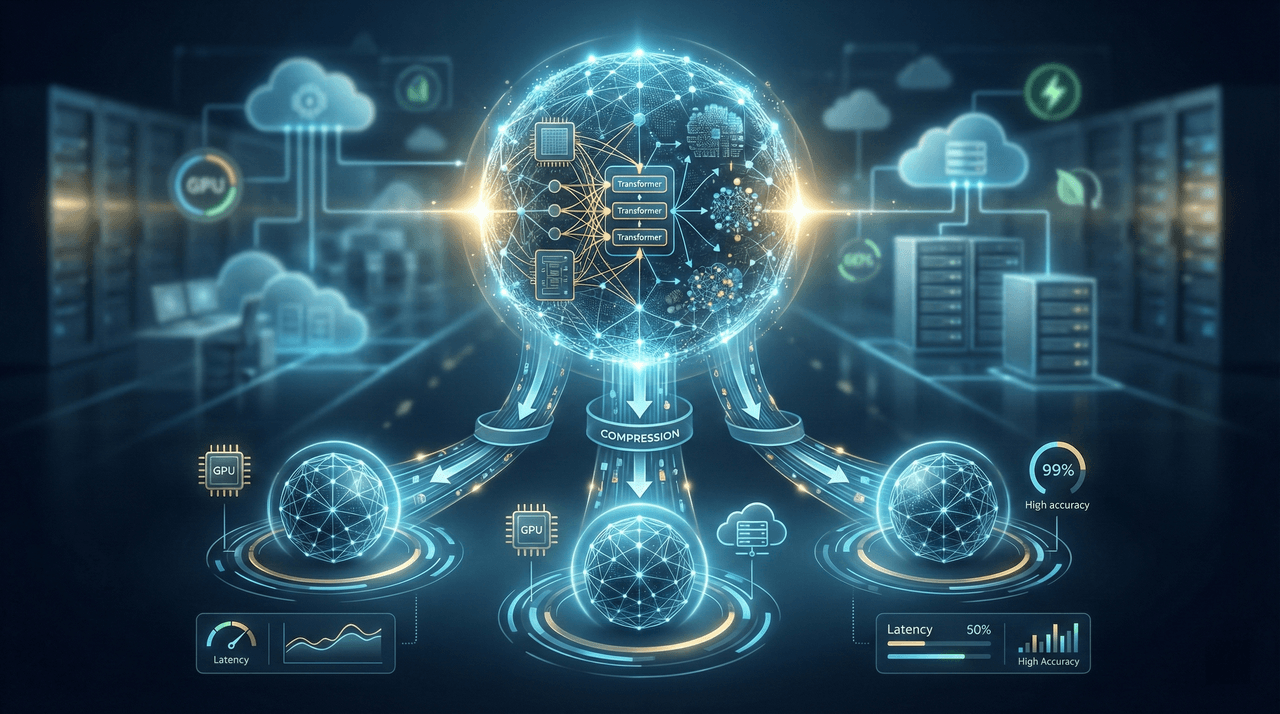

In 2026, training AI models from scratch is no longer a badge of sophistication—it is a financial liability. This deep-dive guide explains how transfer learning, model distillation, and parameter-efficient fine-tuning enable enterprises to deploy production-grade AI models up to 20× cheaper and 10× faster than traditional custom training. Backed by real-world benchmarks, cost breakdowns, and implementation frameworks, this article provides a decision-grade roadmap for building high-performance AI systems without enterprise-scale budgets.
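The paragraph above names model distillation but does not show what a smaller "student" model actually learns from a larger "teacher". The sketch below assumes the widely used soft-target formulation, which is not necessarily the exact method this article goes on to describe. The loss blends two terms:

- a temperature-softened KL divergence between the teacher's and the student's output distributions;
- ordinary cross-entropy against the ground-truth label.

The helper names `log_softmax` and `distillation_loss`, and the default values for `temperature` and `alpha`, are illustrative assumptions. They are not taken from any particular library.

```python
import math
from typing import List, Sequence


def log_softmax(logits: Sequence[float], temperature: float = 1.0) -> List[float]:
    """Temperature-scaled log-softmax, using the max-subtraction trick for stability."""
    scaled = [z / temperature for z in logits]
    m = max(scaled)
    log_total = m + math.log(sum(math.exp(z - m) for z in scaled))
    return [z - log_total for z in scaled]


def distillation_loss(
    student_logits: Sequence[float],
    teacher_logits: Sequence[float],
    label: int,
    temperature: float = 2.0,  # illustrative default; higher T softens both distributions
    alpha: float = 0.5,        # illustrative weight on the soft-target (teacher) term
) -> float:
    """Hypothetical helper: alpha * T^2 * KL(teacher || student) + (1 - alpha) * CE."""
    log_p_teacher = log_softmax(teacher_logits, temperature)
    log_p_student = log_softmax(student_logits, temperature)
    # KL divergence between the temperature-softened teacher and student distributions
    kl = sum(
        math.exp(lt) * (lt - ls) for lt, ls in zip(log_p_teacher, log_p_student)
    )
    # Hard-label cross-entropy for the student at temperature 1
    ce = -log_softmax(student_logits)[label]
    # T^2 keeps the soft-target gradients on a similar scale as temperature changes
    return alpha * (temperature ** 2) * kl + (1.0 - alpha) * ce


if __name__ == "__main__":
    teacher = [4.0, 1.0, 0.5]
    student = [2.5, 1.2, 0.3]
    print(distillation_loss(student, teacher, label=0))
```

In a real training loop, this per-example loss would be averaged over a batch and minimized with respect to the student's parameters only, with the teacher kept frozen. The `alpha` weight controls how much the student imitates the teacher versus fitting the labels directly.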

Team Note

The full technical details for this topic are available upon request for enterprise clients. We frequently update these entries as patterns evolve in the AI ecosystem.