MLOps in 2026: The Complete CI/CD Pipeline for LLM Deployment

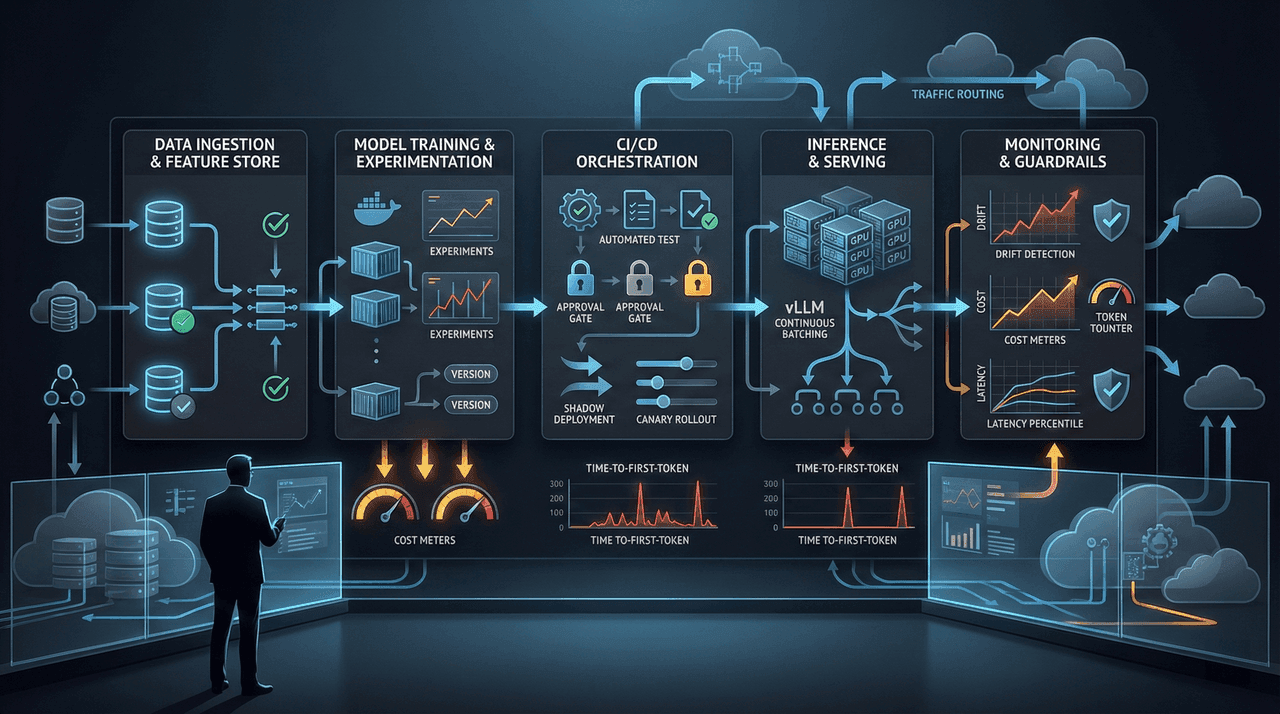

Deploying LLMs in production in 2026 is no longer a modeling problem; it is an operational, economic, and reliability challenge. This guide breaks down the complete MLOps CI/CD architecture required to ship large language models at scale without runaway costs, silent failures, or latency collapse. It is built for CTOs, MLOps engineers, and architects running real traffic under real budget constraints.

Team Note

The full technical details for this topic are available upon request for enterprise clients. We frequently update these entries as patterns evolve in the AI ecosystem.