LLM Fine-Tuning on a Budget: LoRA, QLoRA, and PEFT Techniques for Resource-Constrained Teams

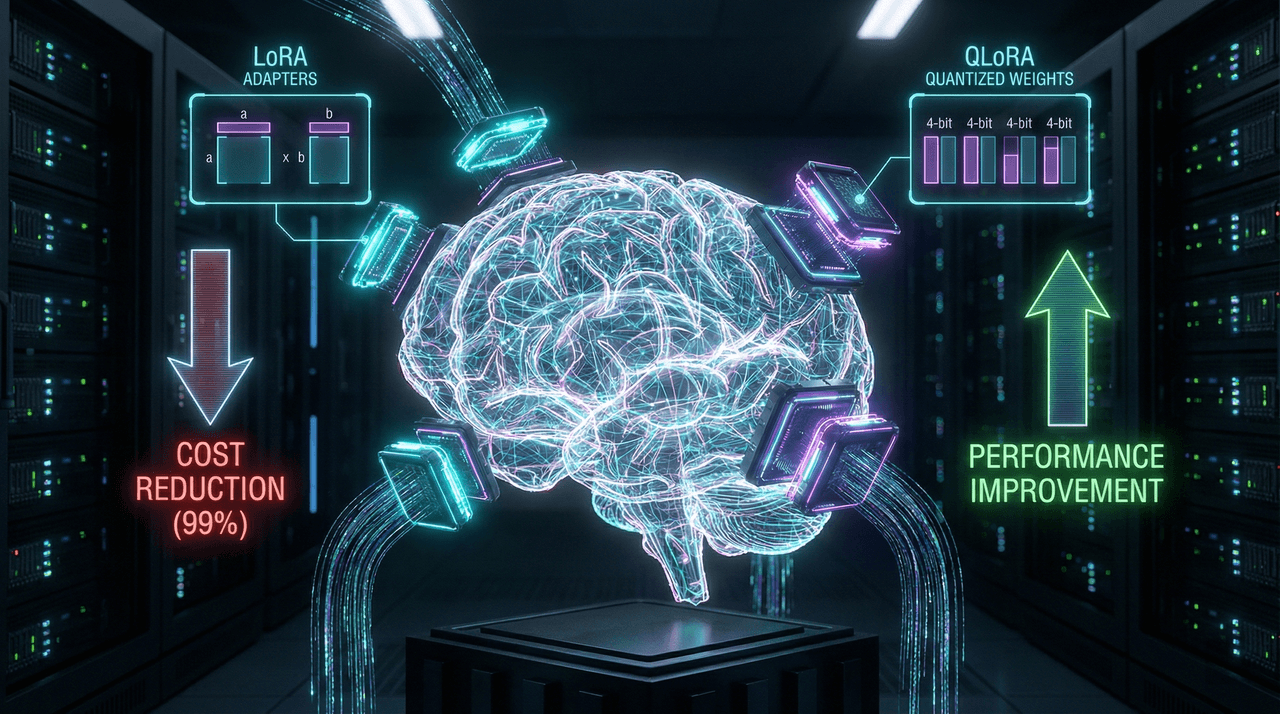

This in-depth guide explains how to fine-tune large language models on a tight budget using Parameter-Efficient Fine-Tuning (PEFT) techniques such as LoRA and QLoRA. Learn when fine-tuning is a better choice than RAG or prompt engineering, how to implement LoRA and QLoRA step by step, and how to cut GPU memory usage by as much as 80–95% without sacrificing model performance. It is written for startups, indie developers, and resource-constrained teams building production-grade AI.

Team Note

The full technical details for this topic are available upon request for enterprise clients. We frequently update these entries as patterns evolve in the AI ecosystem.