DeepSeek-R1 vs GPT-5 vs Claude 4: The Real LLM Cost-Performance Battle

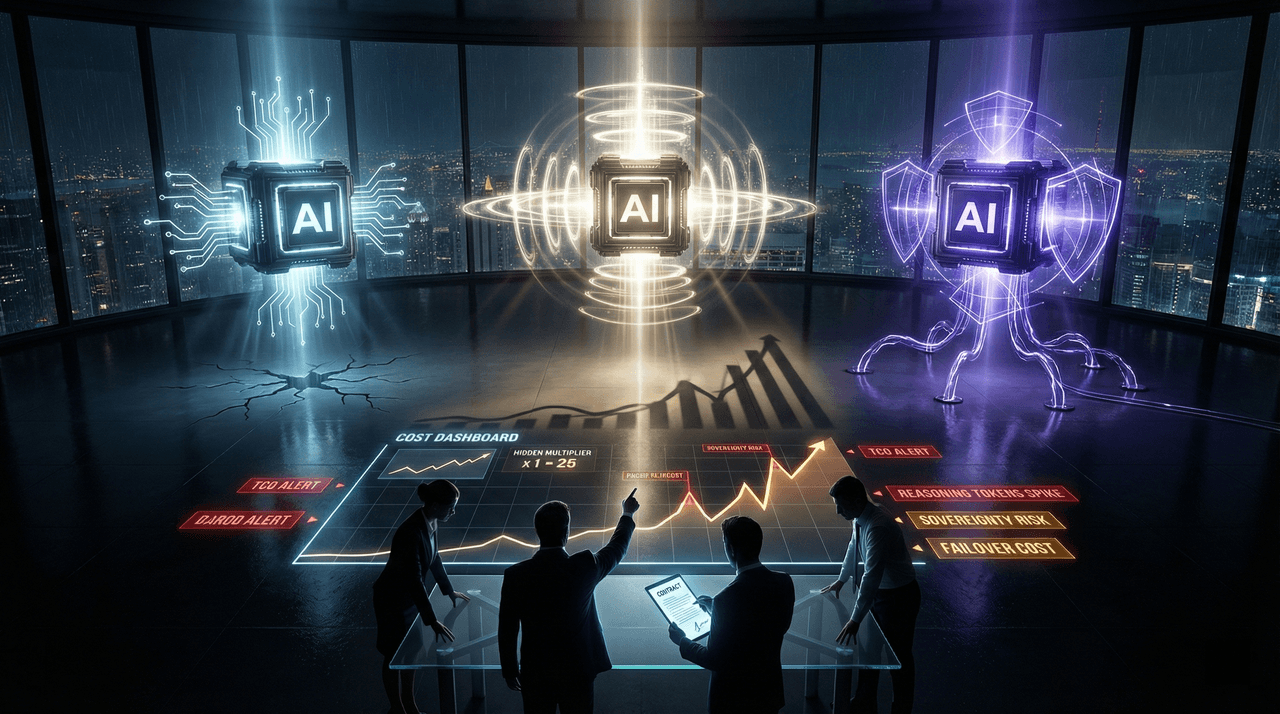

Three enterprise LLMs. Three pricing illusions. One irreversible procurement decision. After stress-testing DeepSeek-R1, GPT-5, and Claude 4 at 10M+ tokens per day, this analysis exposes where benchmark leaders collapse in production: hidden reasoning multipliers, sovereignty failures, API instability, and runaway total cost of ownership. This is not a benchmark comparison—it is a decision framework for CTOs, architects, and finance leaders responsible for eight-figure AI budgets in 2026.

Team Note

The full technical details for this topic are available upon request for enterprise clients. We frequently update these entries as patterns evolve in the AI ecosystem.