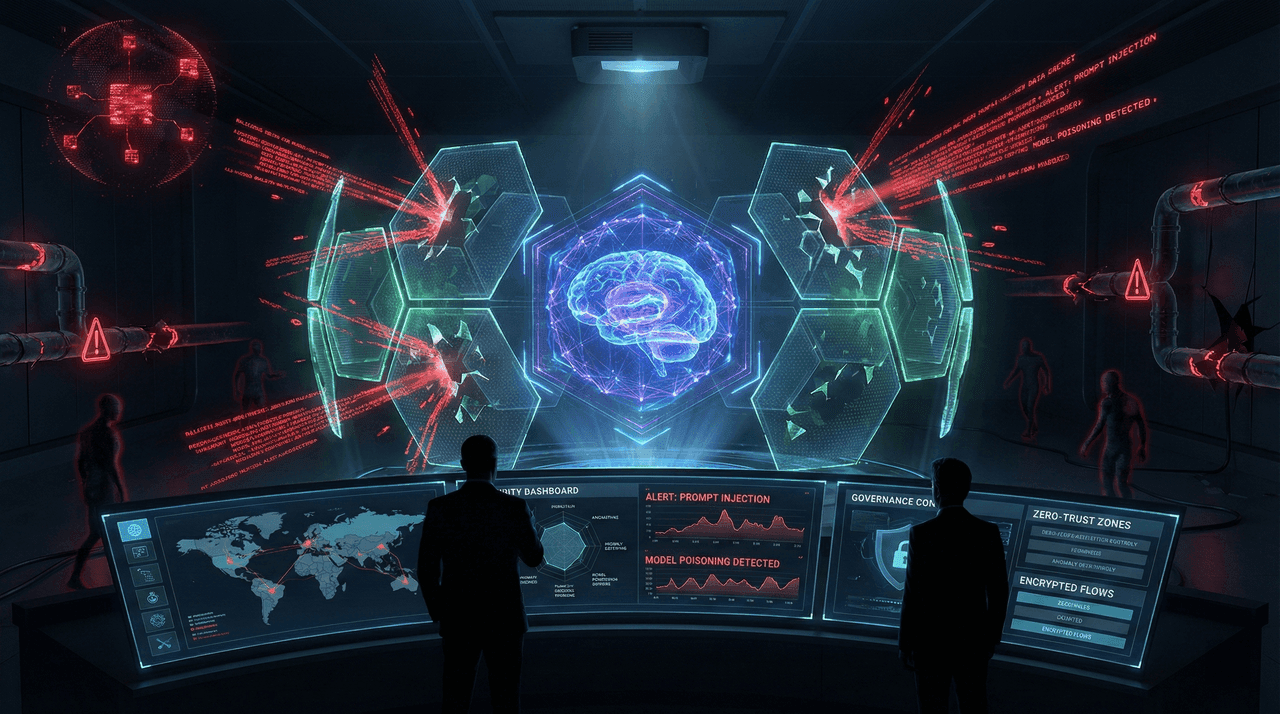

AI Security in 2026: Defending Against Prompt Injection, Model Poisoning, and Shadow AI

AI security is now a board-level risk. In 2026, prompt injection, model poisoning, and shadow AI are driving multimillion-dollar breaches that traditional security tools cannot detect. This guide provides a field-tested, enterprise-grade framework for defending AI systems, closing governance gaps before attackers exploit them, and achieving regulatory compliance.

Team Note

The full technical details for this topic are available upon request for enterprise clients. We frequently update these entries as patterns evolve in the AI ecosystem.